- 1. Welcome

- 2. Installation and Setup (Core and IDE)

- 3. Getting Involved

- 4. Drools Release Notes

- 4.1. What is New and Noteworthy in Drools 6.0.0

- 4.2. What is New and Noteworthy in Drools 5.5.0

- 4.3. What is New and Noteworthy in Drools 5.4.0

- 4.4. What is New and Noteworthy in Drools 5.3.0

- 4.5. What is New and Noteworthy in Drools 5.2.0

- 4.6. What is New and Noteworthy in Drools 5.1.0

- 4.7. What is New and Noteworthy in Drools 5.0.0

- 4.8. What is new in Drools 4.0

- 4.9. Upgrade tips from Drools 3.0.x to Drools 4.0.x

- 5. Drools compatibility matrix

I've always stated that end business users struggle to understand the differences between rules and processes, and more recently rules and event processing. They have a problem in mind and they just want to model it using some software. The traditional way of using two vendor offerings forces the business user to work with a process-oriented or rules-oriented approach which just gets in the way, often with great confusion over which tool they should be using to model which bit.

Existing products have done a great job of showing that the two can be combined and a behavioural modelling approach can be used. This allows the business user to work more naturally where the full range of approaches is available to them, without the tools getting in the way. From process oriented to rule oriented, or shades of grey in the middle: whatever suits the problem being modelled at that time.

Drools 5.0 takes this one step further by not only adding BPMN2 based workflow with Drools Flow but also adding event processing with Drools Fusion, creating a more holistic approach to software development, where the term holistic is used to emphasize the importance of the whole and the interdependence of its parts.

Drools 5.0 is now split into 5 modules, each with its own manual: Guvnor (BRMS/BPMS), Expert (Rules), Fusion (CEP), Flow (Process/Workflow) and Planner. Guvnor is our web based governance system, traditionally referred to in the rules world as a BRMS. We decided to move away from the BRMS term to a play on governance as it's not rules specific. Expert is the traditional rules engine. Fusion is the event processing side; it's a play on data/sensor fusion terminology. Flow is our workflow module. Kris Verlaenen leads this and has done some amazing work; he's currently moving Flow to be incorporated into jBPM 5. The fifth module, called Planner and authored by Geoffrey De Smet, solves allocation and scheduling type problems and, while still in the early stages of development, is showing a lot of promise. We hope to add Semantics for 2011, based around description logic, and that is being worked on as part of the next generation Drools designs.

I've been working in the rules field now for around 7 years and I finally feel like I'm getting to grips with things; ideas are starting to gel and the real innovation is starting to happen. To me it feels like we actually know what we are doing now, compared to the past where there was a lot of wild guessing and exploration. I've been working hard on the next generation Drools Expert design document with Edson Tirelli and Davide Sottara. I invite you to read the document and get involved: http://community.jboss.org/wiki/DroolsLanguageEnhancements. The document takes things to the next level, pushing Drools forward as a hybrid engine: not just a capable production rule system, but one that also melds in logic programming (Prolog) with functional programming and description logic, along with a host of other ideas for a more expressive and modern feeling language.

I hope you can feel the passion that my team and I have while working on Drools, and that some of it rubs off on you during your adventures.

Drools provides an Eclipse-based IDE (which is optional), but at its core only Java 1.5 (Java SE) is required.

A simple way to get started is to download and install the Eclipse plug-in - this will also require the Eclipse GEF framework to be installed (see below, if you don't have it installed already). This will provide you with all the dependencies you need to get going: you can simply create a new rule project and everything will be done for you. Refer to the chapter on the Rule Workbench and IDE for detailed instructions on this. Installing the Eclipse plug-in is generally as simple as unzipping a file into your Eclipse plug-in directory.

Use of the Eclipse plug-in is not required. Rule files are just textual input (or spreadsheets as the case may be) and the IDE (also known as the Rule Workbench) is just a convenience. People have integrated the rule engine in many ways; there is no "one size fits all".

Alternatively, you can download the binary distribution, and include the relevant jars in your project's classpath.

Drools is broken down into a few modules, some are required during rule development/compiling, and some are required at runtime. In many cases, people will simply want to include all the dependencies at runtime, and this is fine. It allows you to have the most flexibility. However, some may prefer to have their "runtime" stripped down to the bare minimum, as they will be deploying rules in binary form - this is also possible. The core runtime engine can be quite compact, and only requires a few 100 kilobytes across 3 jar files.

The following is a description of the important libraries that make up JBoss Drools:

knowledge-api.jar - this provides the interfaces and factories. It also helps clearly show what is intended as a user api and what is just an engine api.

knowledge-internal-api.jar - this provides internal interfaces and factories.

drools-core.jar - this is the core engine, runtime component. Contains both the RETE engine and the LEAPS engine. This is the only runtime dependency if you are pre-compiling rules (and deploying via Package or RuleBase objects).

drools-compiler.jar - this contains the compiler/builder components to take rule source, and build executable rule bases. This is often a runtime dependency of your application, but it need not be if you are pre-compiling your rules. This depends on drools-core.

drools-jsr94.jar - this is the JSR-94 compliant implementation, this is essentially a layer over the drools-compiler component. Note that due to the nature of the JSR-94 specification, not all features are easily exposed via this interface. In some cases, it will be easier to go direct to the Drools API, but in some environments the JSR-94 is mandated.

drools-decisiontables.jar - this is the decision tables 'compiler' component, which uses the drools-compiler component. This supports both excel and CSV input formats.

There are quite a few other dependencies which the above components require, most of which are for the drools-compiler, drools-jsr94 or drools-decisiontables module. Some key ones to note are "POI" which provides the spreadsheet parsing ability, and "antlr" which provides the parsing for the rule language itself.

NOTE: if you are using Drools in J2EE or servlet containers and you come across classpath issues with "JDT", then you can switch to the janino compiler. Set the system property "drools.compiler": For example: -Ddrools.compiler=JANINO.
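When passing a `-D` flag to the container is inconvenient, the same property can in principle be set from code. The following is a minimal sketch, assuming Drools reads `drools.compiler` from the ordinary JVM system properties and that the call runs before any Drools compiler class is used; the class and method names are hypothetical and not part of the Drools API.

```java
// Sketch: programmatic equivalent of -Ddrools.compiler=JANINO.
// Assumption: Drools looks the property up in the JVM system properties,
// so it has to be set before the first Drools compiler class is used.
final class CompilerSelection {

    static final String COMPILER_PROPERTY = "drools.compiler";

    /** Selects JANINO unless a value was already supplied (for example via -D). */
    static String selectJaninoIfUnset() {
        if (System.getProperty(COMPILER_PROPERTY) == null) {
            System.setProperty(COMPILER_PROPERTY, "JANINO");
        }
        return System.getProperty(COMPILER_PROPERTY);
    }

    public static void main(String[] args) {
        System.out.println(COMPILER_PROPERTY + "=" + selectJaninoIfUnset());
    }
}
```

Leaving an existing value untouched means a `-D` flag given on the command line still takes precedence over the default chosen in code.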

For up to date info on dependencies in a release, consult the released poms, which can be found on the maven repository.

The jars are also available in the central maven repository (and also in the JBoss maven repository).

If you use Maven, add KIE and Drools dependencies in your project's pom.xml like this:

    <dependencyManagement>
      <dependencies>
        <dependency>
          <groupId>org.drools</groupId>
          <artifactId>drools-bom</artifactId>
          <type>pom</type>
          <version>...</version>
          <scope>import</scope>
        </dependency>
        ...
      </dependencies>
    </dependencyManagement>
    <dependencies>
      <dependency>
        <groupId>org.kie</groupId>
        <artifactId>kie-api</artifactId>
      </dependency>
      <dependency>
        <groupId>org.drools</groupId>
        <artifactId>drools-compiler</artifactId>
        <scope>runtime</scope>
      </dependency>
      ...
    </dependencies>

This is similar for Gradle, Ivy and Buildr. To identify the latest version, check the maven repository.

If you're still using ANT (without Ivy), copy all the jars from the download zip's binaries directory and manually verify that your classpath doesn't contain duplicate jars.

The "runtime" requirements mentioned here are if you are deploying rules as their binary form (either as KnowledgePackage objects, or KnowledgeBase objects etc). This is an optional feature that allows you to keep your runtime very light. You may use drools-compiler to produce rule packages "out of process", and then deploy them to a runtime system. This runtime system only requires drools-core.jar and knowledge-api for execution. This is an optional deployment pattern, and many people do not need to "trim" their application this much, but it is an ideal option for certain environments.

The rule workbench (for Eclipse) requires that you have Eclipse 3.4 or greater, as well as Eclipse GEF 3.4 or greater. You can install it either by downloading the plug-in or by using the update site.

Another option is to use the JBoss IDE, which comes with all the plug-in requirements pre-packaged, as well as a choice of other tools separate to rules. You can choose just to install rules from the "bundle" that JBoss IDE ships with.

GEF is the Eclipse Graphical Editing Framework, which is used for graph viewing components in the plug-in.

If you don't have GEF installed, you can install it using the built-in update mechanism (or by downloading GEF from the Eclipse.org website, which is not recommended). JBoss IDE has GEF already, as do many other "distributions" of Eclipse, so this step may be redundant for some people.

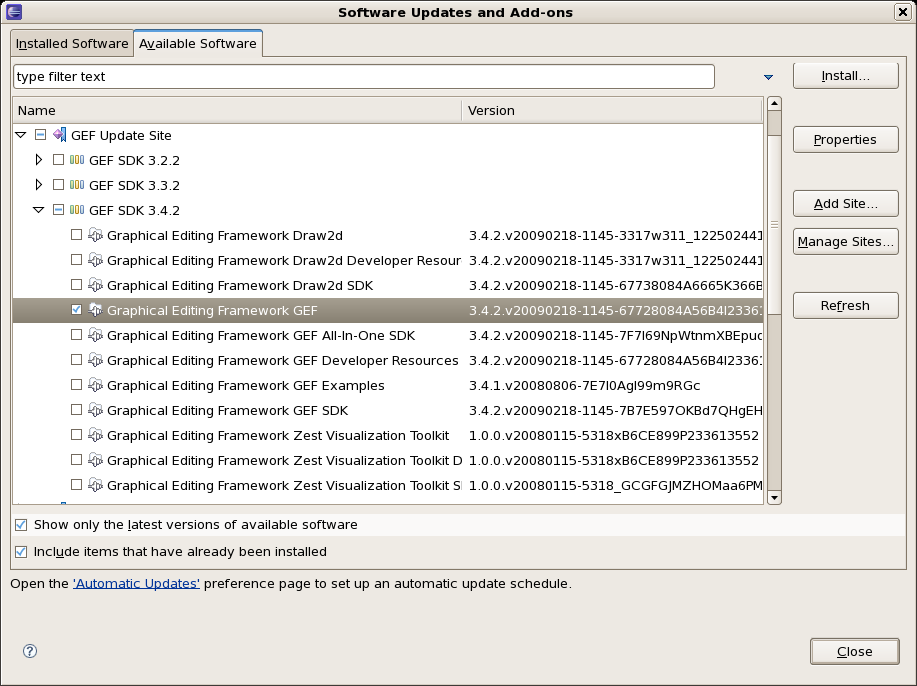

From the Help menu, open Help->Software updates...->Available Software->Add Site... The location is:

http://download.eclipse.org/tools/gef/updates/releases/

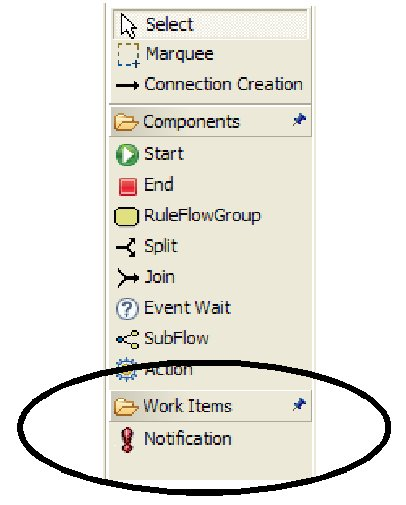

Next you choose the GEF plug-in:

Press next, and agree to install the plug-in (an Eclipse restart may be required). Once this is completed, then you can continue on installing the rules plug-in.

To install from the zip file, download and unzip the file. Inside the zip you will see a plug-in directory, and the plug-in jar itself. You place the plug-in jar into your Eclipse applications plug-in directory, and restart Eclipse.

Download the Drools Eclipse IDE plugin from the link below. Unzip the downloaded file in your main Eclipse folder (do not just copy the file there; extract it so that the feature and plugin jars end up in the features and plugins directories of Eclipse) and (re)start Eclipse.

http://www.jboss.org/drools/downloads.html

To check that the installation was successful, try opening the Drools perspective: click the 'Open Perspective' button in the top right corner of your Eclipse window, select 'Other...' and pick the Drools perspective. If you cannot find the Drools perspective as one of the possible perspectives, the installation was probably unsuccessful. Check whether you executed each of the required steps correctly. Do you have the right version of Eclipse (3.4.x)? Do you have Eclipse GEF installed (check whether the org.eclipse.gef_3.4.*.jar exists in the plugins directory in your Eclipse root folder)? Did you extract the Drools Eclipse plugin correctly (check whether the org.drools.eclipse_*.jar exists in the plugins directory in your Eclipse root folder)? If you cannot find the problem, try contacting us (e.g. on IRC or on the user mailing list); more information can be found on our homepage.
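The two jar checks can also be scripted. The sketch below is only an illustration of that check, not part of the plugin: it looks in the `plugins` directory of a given Eclipse root folder for files matching the two patterns named above. The class name `PluginCheck` and the way the root folder is passed in are assumptions.

```java
import java.io.IOException;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

// Sketch: verify that the GEF and Drools plugin jars are present.
final class PluginCheck {

    /** Returns true if at least one entry in <eclipseRoot>/plugins matches the glob. */
    static boolean hasPlugin(Path eclipseRoot, String glob) throws IOException {
        Path plugins = eclipseRoot.resolve("plugins");
        if (!Files.isDirectory(plugins)) {
            return false;
        }
        try (DirectoryStream<Path> matches = Files.newDirectoryStream(plugins, glob)) {
            return matches.iterator().hasNext();
        }
    }

    public static void main(String[] args) throws IOException {
        // Assumption: the Eclipse root folder is passed as the first argument.
        Path root = Paths.get(args.length > 0 ? args[0] : ".");
        System.out.println("GEF 3.4 jar found:    " + hasPlugin(root, "org.eclipse.gef_3.4.*.jar"));
        System.out.println("Drools IDE jar found: " + hasPlugin(root, "org.drools.eclipse_*.jar"));
    }
}
```

If either line reports `false`, revisit the corresponding installation step before looking for other causes.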

A Drools runtime is a collection of jars on your file system that represent one specific release of the Drools project jars. To create a runtime, you must point the IDE to the release of your choice. If you want to create a new runtime based on the latest Drools project jars included in the plugin itself, you can also easily do that. You are required to specify a default Drools runtime for your Eclipse workspace, but each individual project can override the default and select the appropriate runtime for that project specifically.

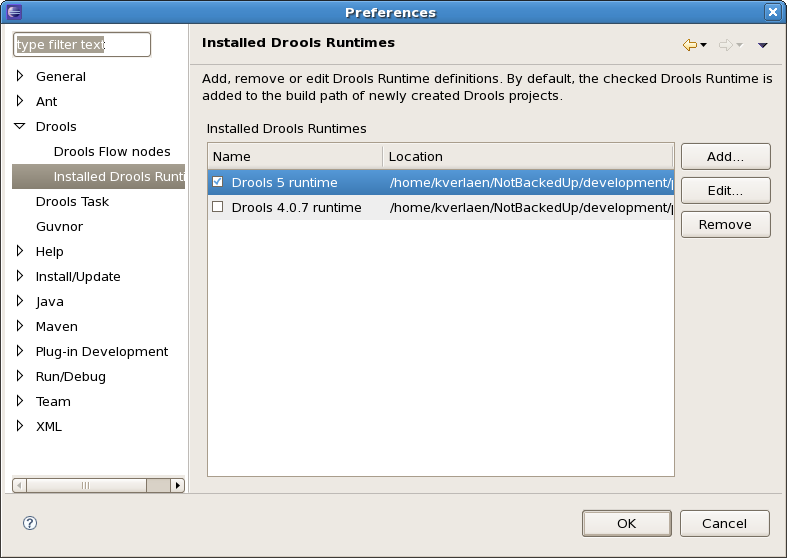

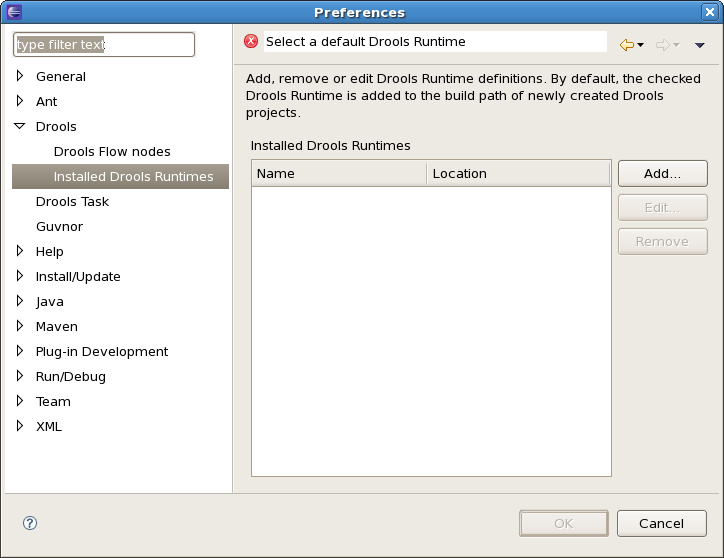

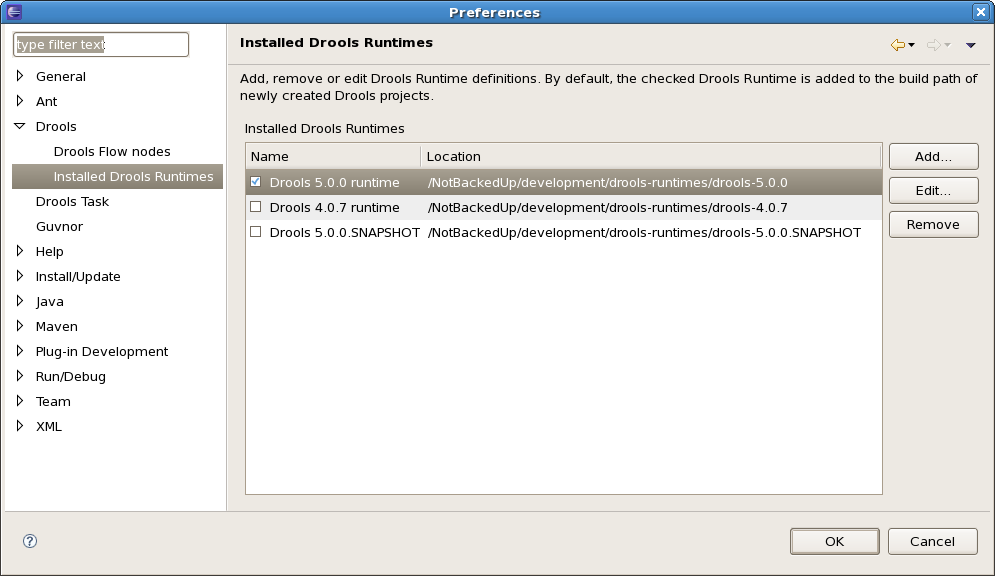

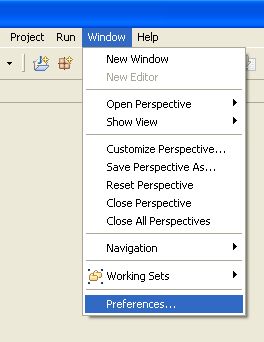

You are required to define one or more Drools runtimes using the Eclipse preferences view. To open up your preferences, select the Preferences menu item in the Window menu. A new preferences dialog should show all your preferences. On the left side of this dialog, under the Drools category, select "Installed Drools runtimes". The panel on the right should then show the currently defined Drools runtimes. If you have not yet defined any runtimes, it should look something like the figure below.

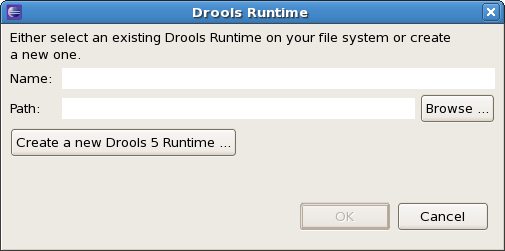

To define a new Drools runtime, click on the add button. A dialog as shown below should pop up, requiring the name for your runtime and the location on your file system where it can be found.

In general, you have two options:

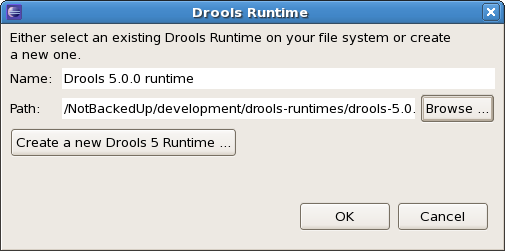

If you simply want to use the default jars as included in the Drools Eclipse plugin, you can create a new Drools runtime automatically by clicking the "Create a new Drools 5 runtime ..." button. A file browser will show up, asking you to select the folder on your file system where you want this runtime to be created. The plugin will then automatically copy all required dependencies to the specified folder. After selecting this folder, the dialog should look like the figure shown below.

If you want to use one specific release of the Drools project, you should create a folder on your file system that contains all the necessary Drools libraries and dependencies. Instead of creating a new Drools runtime as explained above, give your runtime a name and select the location of this folder containing all the required jars.

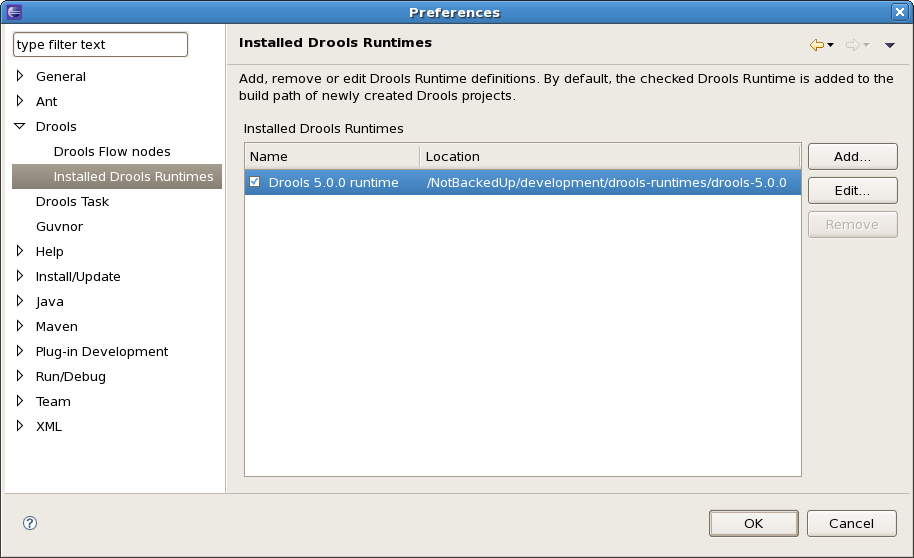

After clicking the OK button, the runtime should show up in your table of installed Drools runtimes, as shown below. Click the checkbox in front of the newly created runtime to make it the default Drools runtime. The default Drools runtime will be used as the runtime of all your Drools projects that have not selected a project-specific runtime.

You can add as many Drools runtimes as you need. For example, the screenshot below shows a configuration where three runtimes have been defined: a Drools 4.0.7 runtime, a Drools 5.0.0 runtime and a Drools 5.0.0.SNAPSHOT runtime. The Drools 5.0.0 runtime is selected as the default one.

Note that you will need to restart Eclipse if you changed the default runtime and you want to make sure that all the projects that are using the default runtime update their classpath accordingly.

Whenever you create a Drools project (using the New Drools Project wizard or by converting an existing Java project to a Drools project using the "Convert to Drools Project" action that is shown when you are in the Drools perspective and you right-click an existing Java project), the plugin will automatically add all the required jars to the classpath of your project.

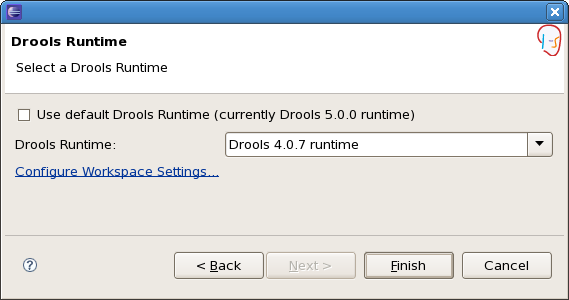

When creating a new Drools project, the plugin will automatically use the default Drools runtime for that project, unless you specify a project-specific one. You can do this in the final step of the New Drools Project wizard, as shown below, by deselecting the "Use default Drools runtime" checkbox and selecting the appropriate runtime in the drop-down box. If you click the "Configure workspace settings ..." link, the workspace preferences showing the currently installed Drools runtimes will be opened, so you can add new runtimes there.

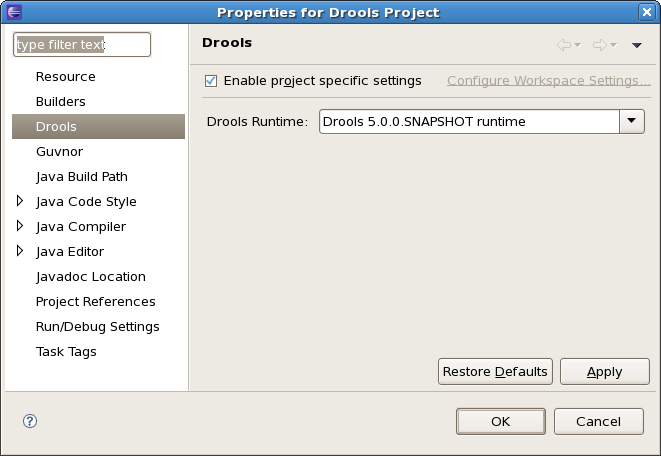

You can change the runtime of a Drools project at any time by opening the project properties (right-click the project and select Properties) and selecting the Drools category, as shown below. Check the "Enable project specific settings" checkbox and select the appropriate runtime from the drop-down box. If you click the "Configure workspace settings ..." link, the workspace preferences showing the currently installed Drools runtimes will be opened, so you can add new runtimes there. If you deselect the "Enable project specific settings" checkbox, it will use the default runtime as defined in your global preferences.
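The relationship between the workspace default and a project-specific runtime can be summarised in a few lines. This is a sketch of the selection rule as described above, not the plugin's actual code; the class, method and parameter names are hypothetical.

```java
// Sketch of the runtime selection rule described in the text.
final class RuntimeResolution {

    /**
     * Returns the runtime a project ends up using: its own runtime when
     * "Enable project specific settings" is checked, otherwise the workspace default.
     */
    static String effectiveRuntime(boolean projectSpecificEnabled,
                                   String projectRuntime,
                                   String workspaceDefault) {
        if (projectSpecificEnabled && projectRuntime != null) {
            return projectRuntime;
        }
        return workspaceDefault;
    }

    public static void main(String[] args) {
        // Runtime names are illustrative only.
        System.out.println(effectiveRuntime(true, "Drools 4.0.7 runtime", "Drools 5.0.0 runtime"));
        System.out.println(effectiveRuntime(false, "Drools 4.0.7 runtime", "Drools 5.0.0 runtime"));
    }
}
```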

The source code of each maven artifact is available in the JBoss maven repository as a source jar. The same source jars are also included in the download zips. However, if you want to build from source, it's highly recommended to get our sources from our source control.

Drools and jBPM use Git for source control. The blessed git repositories are hosted on Github:

Git allows you to fork our code, independently make personal changes to it, yet still merge in our latest changes regularly and optionally share your changes with us. To learn more about Git, read the free book Pro Git.

In essence, building from source is very easy. For example, if you want to build the guvnor project:

    $ git clone git@github.com:droolsjbpm/guvnor.git
    ...
    $ cd guvnor
    $ mvn clean install -DskipTests -Dfull
    ...

However, there are a lot of potential pitfalls, so if you're serious about building from source and possibly contributing to the project, follow the instructions in the README file in droolsjbpm-build-bootstrap.

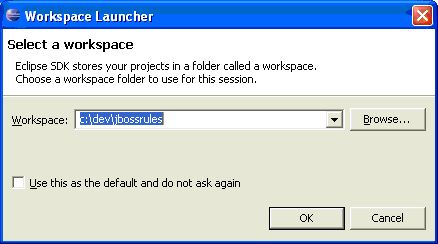

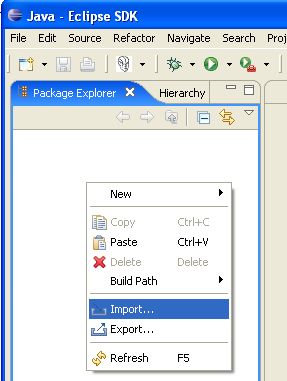

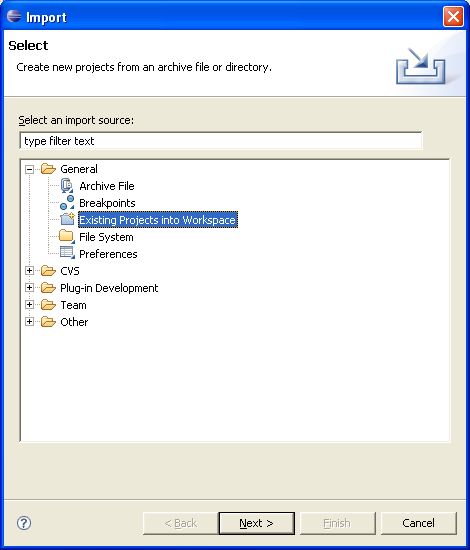

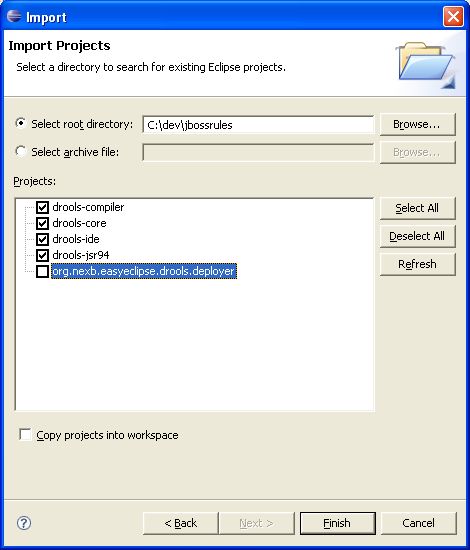

With the Eclipse project files generated, they can now be imported into Eclipse. When starting Eclipse, open the workspace in the root of your source checkout.

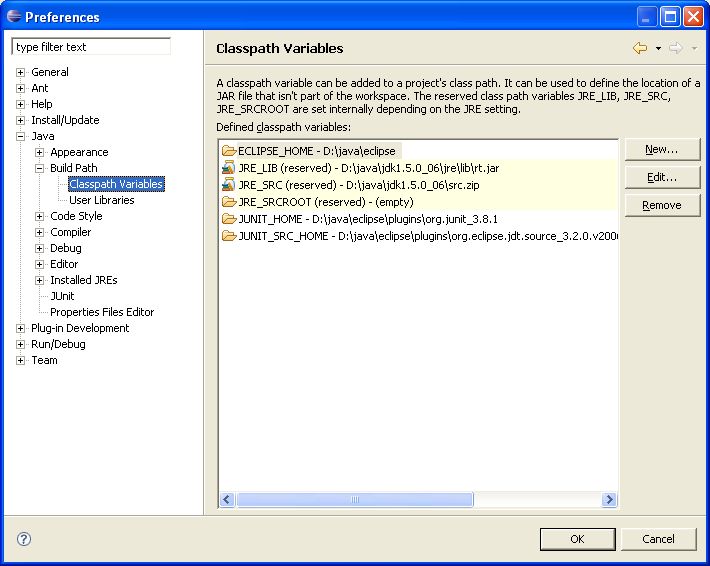

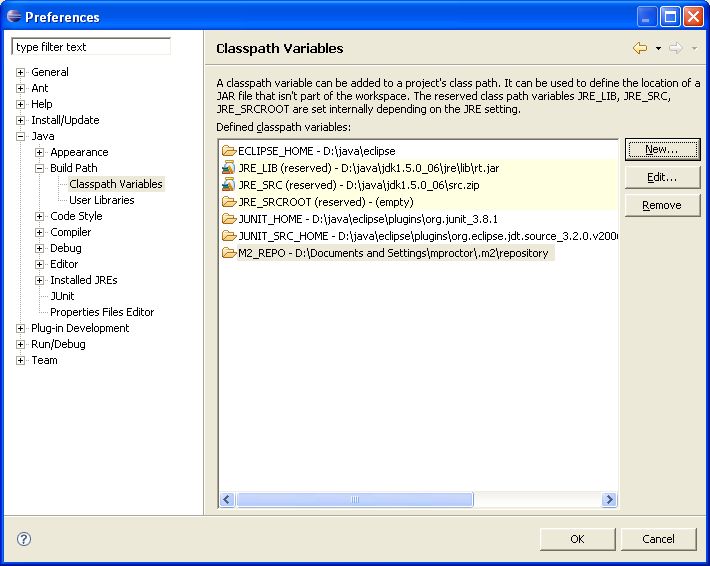

When calling mvn install, all the project dependencies were downloaded and added to the local Maven repository. Eclipse cannot find those dependencies unless you tell it where that repository is. To do this, set up an M2_REPO classpath variable.
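If you are unsure which folder M2_REPO should point to, the following sketch prints Maven's default local repository location. It assumes the location has not been overridden (for example in Maven's settings.xml); the class name is hypothetical.

```java
import java.nio.file.Path;
import java.nio.file.Paths;

// Sketch: compute the folder that the M2_REPO classpath variable usually points to.
final class M2Repo {

    /** Maven's default local repository: <user.home>/.m2/repository. */
    static Path defaultLocalRepository() {
        return Paths.get(System.getProperty("user.home"), ".m2", "repository");
    }

    public static void main(String[] args) {
        System.out.println("M2_REPO=" + defaultLocalRepository());
    }
}
```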

We are often asked "How do I get involved". Luckily the answer is simple, just write some code and submit it :) There are no hoops you have to jump through or secret handshakes. We have a very minimal "overhead" that we do request to allow for scalable project development. Below we provide a general overview of the tools and "workflow" we request, along with some general advice.

If you contribute some good work, don't forget to blog about it :)

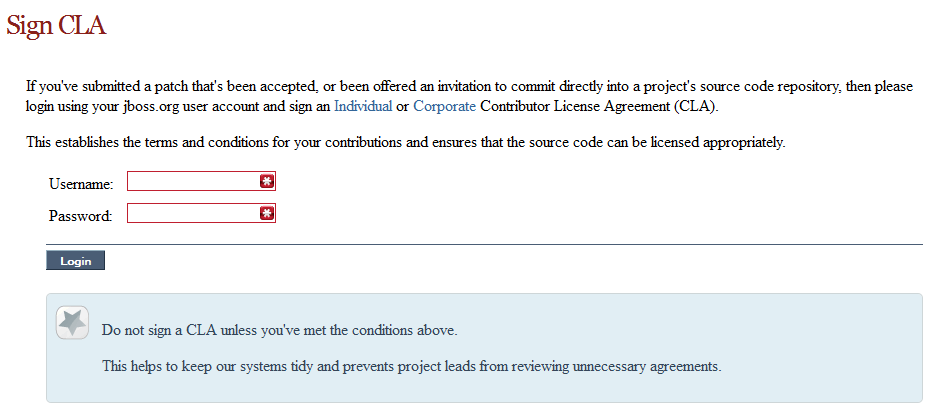

Signing up to jboss.org will give you access to the JBoss wiki, forums and JIRA. Go to http://www.jboss.org/ and click "Register".

The only form you need to sign is the contributor agreement, which is fully automated via the web. As the image below says "This establishes the terms and conditions for your contributions and ensures that source code can be licensed appropriately"

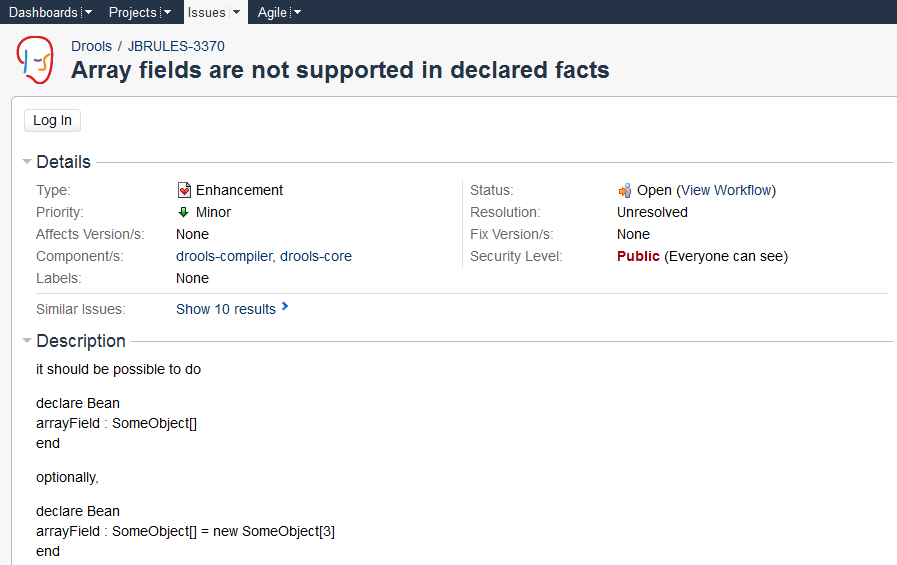

To be able to interact with the core development team you will need to use JIRA, the issue tracker. This ensures that all requests are logged and allocated to a release schedule and all discussions are captured in one place. Bug reports, bug fixes, feature requests and feature submissions should all go here. General questions should be asked on the mailing lists.

Minor code submissions, like format or documentation fixes do not need an associated JIRA issue created.

https://issues.jboss.org/browse/JBRULES (Drools)

https://issues.jboss.org/browse/JBPM

https://issues.jboss.org/browse/GUVNOR

With the contributor agreement signed and your requests submitted to JIRA, you should now be ready to code :) Create a GitHub account and fork any of the drools, jbpm or guvnor sub modules. The fork will create a copy in your own GitHub space which you can work on at your own pace. If you make a mistake, don't worry: blow it away and fork again. Note that each GitHub repository provides you with the clone (checkout) URL; GitHub will provide you with URLs specific to your fork.

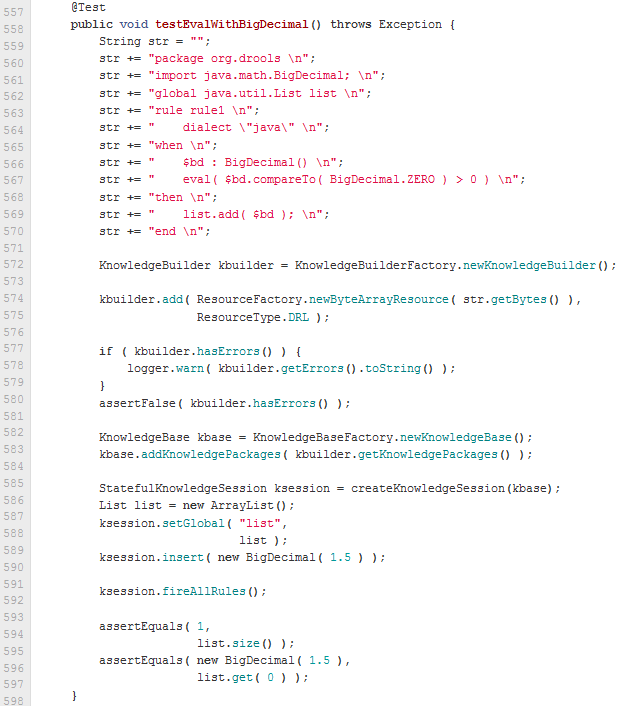

When writing tests, try and keep them minimal and self contained. We prefer to keep the drl fragments within the test, as it makes for quicker reviewing. If there are a large number of rules then using a String is not practical, so by all means place them in separate drl files to be loaded from the classpath. If your tests need to use a model, please try to use those that already exist for other unit tests, such as Person, Cheese or Order. If no classes exist that have the fields you need, try and update fields of existing classes before adding a new class.

There are a vast number of tests to look over to get an idea, MiscTest is a good place to start.

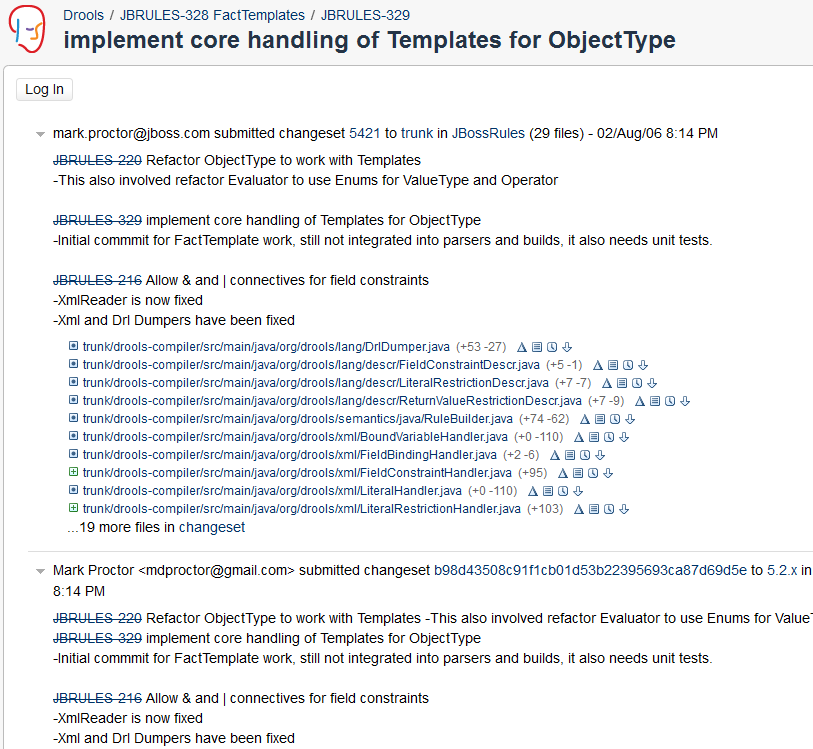

When you commit, make sure you use the correct conventions. The commit must start with the JIRA issue id, such as JBRULES-220. This ensures the commits are cross referenced via JIRA, so we can see all commits for a given issue in the same place. After the id the title of the issue should come next. Then use a newline, indented with a dash, to provide additional information related to this commit. Use an additional new line and dash for each separate point you wish to make. You may add additional JIRA cross references to the same commit, if it's appropriate. In general try to avoid combining unrelated issues in the same commit.
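As an illustration of the convention, the sketch below assembles a commit message and checks that a message starts with an issue id. It is only one reading of the rules above: the four-space indent before each dash, the regular expression for issue ids, and the example title are assumptions, and the class is hypothetical rather than a project tool.

```java
import java.util.Arrays;
import java.util.List;
import java.util.regex.Pattern;

// Sketch: build and check commit messages that follow the convention.
final class CommitMessage {

    // Assumption: issue ids look like PROJECT-123, e.g. JBRULES-220.
    private static final Pattern STARTS_WITH_ISSUE_ID = Pattern.compile("[A-Z]+-\\d+ \\S");

    /** First line: issue id and issue title; then one indented "- point" line per extra note. */
    static String format(String issueId, String issueTitle, List<String> points) {
        StringBuilder message = new StringBuilder(issueId).append(' ').append(issueTitle);
        for (String point : points) {
            message.append("\n    - ").append(point);
        }
        return message.toString();
    }

    /** True when the message begins with an issue id followed by a title. */
    static boolean followsConvention(String message) {
        return STARTS_WITH_ISSUE_ID.matcher(message).lookingAt();
    }

    public static void main(String[] args) {
        // The title and points are made up for illustration.
        String message = format("JBRULES-220", "Example issue title",
                Arrays.asList("first point about this commit", "second point"));
        System.out.println(message);
        System.out.println("follows convention: " + followsConvention(message));
    }
}
```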

Don't forget to rebase your local fork from the original master and then push your commits back to your fork.

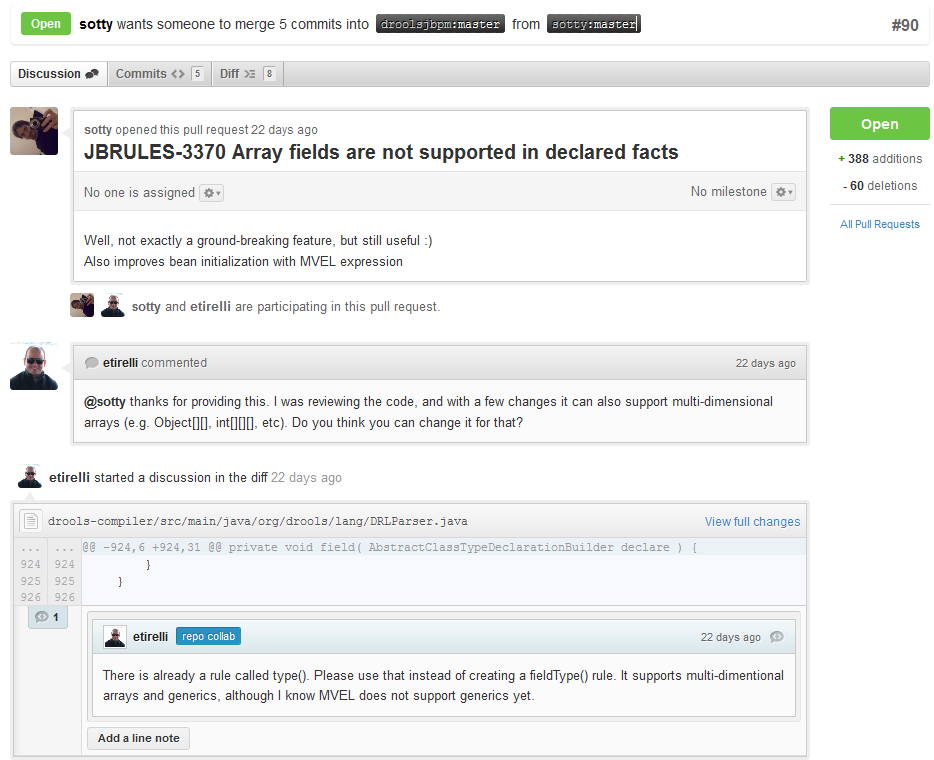

With your code rebased from original master and pushed to your personal GitHub area, you can now submit your work as a pull request. If you look at the top of the page in GitHub for your work area, there will be a "Pull Request" button. Selecting this will then provide a GUI to automate the submission of your pull request.

The pull request then goes into a queue for everyone to see and comment on. Below you can see a typical pull request. The pull requests allow for discussions and show all associated commits and the diffs for each commit. The discussions typically involve code reviews which provide helpful suggestions for improvements, and allow us to leave inline comments on specific parts of the code. Don't be disheartened if we don't merge straight away; it can often take several revisions before we accept a pull request. Luckily GitHub makes it trivial to go back to your code, do some more commits and then update your pull request to your latest and greatest.

It can take time for us to get round to responding to pull requests, so please be patient. Submitted tests that come with a fix will generally be applied quite quickly, whereas tests on their own will often wait until we get time to also submit a fix with them. Don't forget to rebase and resubmit your request from time to time, otherwise over time it will have merge conflicts and core developers will generally ignore those.

Many things are changing for Drools 6.0.

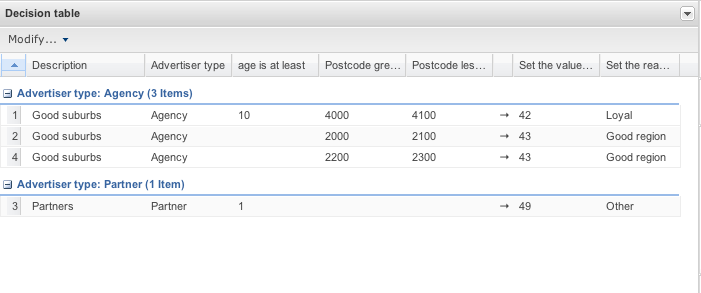

Along with the functional and feature changes we have restructured the Guvnor github repository to better reflect our new architecture. Guvnor has historically been the web application for Drools. It was a composition of editors specific to Drools, a back-end repository and a simplistic asset management system.

Things are now different.

For Drools 6.0 the web application has been extensively re-written to use UberFire, which provides a generic Workbench environment, a Metadata Engine, a Security Framework, a VFS API and clustering support.

Guvnor has become a generic asset management framework providing common services for generic projects and their dependencies. Drools' use of both UberFire and Guvnor has given birth to the Drools Workbench.

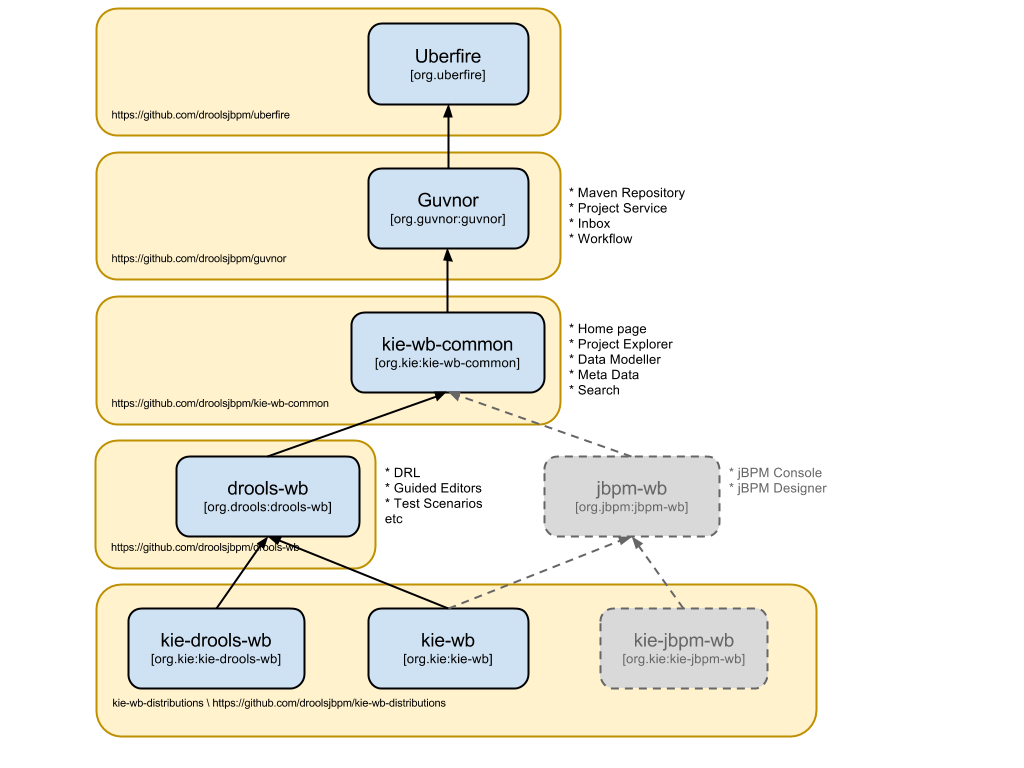

A picture always helps:

4.1.3.1.1. uberfire

UberFire is the foundation of all components for both Drools and jBPM. Every editor and service leverages UberFire. Components can be mixed and matched into a full featured application or used in isolation.

4.1.3.1.2. guvnor

Guvnor adds project services and dependency management to the mix.

At present Guvnor consists of few parts, being principally a port of common project services that existed in the old Guvnor. As things settle down and the module matures, pluggable workflow will be supported, allowing sensitive operations to be controlled by jBPM processes and rules. Work is already underway to include this for 6.0.

4.1.3.1.3. kie-wb-common

Both Drools and jBPM editors and services share the need for a common set of re-usable screens, services and widgets.

Rather than pollute Guvnor with screens and services needed only by Drools and jBPM this module contains such common dependencies.

It is possible to just re-use the UberFire and Guvnor stack to create your own project-based workbench type application and take advantage of the underlying services.

4.1.3.1.4. Drools Workbench (drools-wb)

Drools Workbench is the end product for people looking for a web application that is composed of all Drools related editors, screens and services. It is equivalent to the old Guvnor.

If you are looking for the web application to accompany Drools Expert and Drools Fusion, an environment to author, test and deploy rules, this is it.

4.1.3.1.5. KIE Drools Workbench (kie-drools-wb)

KIE Drools Workbench (for want of a better name - it's amazing how difficult names can be) is an extension of Drools Workbench including jBPM Designer to support Rule Flow.

jBPM Designer, now being an UberFire compatible component, does not need to be deployed as a separate web application. We bundle it here, along with Drools, as a convenience for people looking to author Rule Flows alongside their rules.

4.1.3.1.6. KIE Workbench (kie-wb)

This is the daddy of them all.

KIE Workbench is the composition of everything known to man; from both the Drools and jBPM worlds. It provides for authoring of projects, data models, guided rules, decision tables etc, test services, process authoring, a process run-time execution environment and human task interaction.

KIE Workbench is the old Guvnor, jBPM Designer and jBPM Console applications combined. On steroids.

You may have noticed that KIE Drools Workbench and KIE Workbench are in the same repository. This highlights a great thing about the new module design we have with UberFire: web applications are just a composition of dependencies.

You want your own web application that consists of just the Guided Rule Editor and jBPM Designer? You want your own web application that has the Data Modeller and some of your own screens?

Pick your dependencies and add them to your own UberFire compliant web application and, as the saying goes, the world is your oyster.

UberFire VFS is a NIO.2 based API that provides a unified interface to access different file systems. By default it uses Git as its primary backend implementation. Resources (i.e. files and directories) are represented, as proposed by NIO.2, as URIs. Here are some examples:

file:///path/to/some/file.txt

git://repository-name/path/to/file.txt

git://repository-name/path/to/dir/

git://my_branch@repository-name/path/to/file.txt
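To make the shape of these URIs concrete, the sketch below splits them into their parts using only `java.net.URI` from the JDK. It does not use the UberFire VFS API, and the reading of the part before `@` as the branch and the part after it as the repository name is inferred from the examples above; the class `VfsUriParts` is hypothetical.

```java
import java.net.URI;

// Sketch: split the example URIs into scheme, branch, repository and path.
final class VfsUriParts {
    final String scheme;      // "file" or "git"
    final String branch;      // null when the URI names no branch
    final String repository;  // null for file: URIs
    final String path;

    private VfsUriParts(String scheme, String branch, String repository, String path) {
        this.scheme = scheme;
        this.branch = branch;
        this.repository = repository;
        this.path = path;
    }

    static VfsUriParts parse(String text) {
        URI uri = URI.create(text);
        String authority = uri.getAuthority(); // e.g. "my_branch@repository-name", or null
        String branch = null;
        String repository = authority;
        if (authority != null) {
            int at = authority.indexOf('@');
            if (at >= 0) {
                branch = authority.substring(0, at);
                repository = authority.substring(at + 1);
            }
        }
        return new VfsUriParts(uri.getScheme(), branch, repository, uri.getPath());
    }

    public static void main(String[] args) {
        String[] examples = {
            "file:///path/to/some/file.txt",
            "git://repository-name/path/to/file.txt",
            "git://repository-name/path/to/dir/",
            "git://my_branch@repository-name/path/to/file.txt"
        };
        for (String example : examples) {
            VfsUriParts parts = parse(example);
            System.out.println(parts.scheme + " | " + parts.branch + " | "
                    + parts.repository + " | " + parts.path);
        }
    }
}
```

Resolving such a URI to an actual resource requires the UberFire file system provider; the parsing here is only meant to show how the pieces of the URI line up.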

By using Git as its default implementation, UberFire VFS provides a versioned system out of the box, which means that every piece of information stored or deleted has its history preserved.

Important

Your system doesn't need to have Git installed; UberFire uses JGit internally, a 100% Java based Git implementation.

The Metadata engine provides two complementary services: Indexing and Searching. Both services were designed to be as minimally invasive as possible while, at the same time, providing all the complex features needed by the subject.

Indexing is automatically handled on the VFS layer in a completely transparent way. In order to aggregate metadata to a VFS resource using the NIO.2 based API, all you need to do is set a resource's attributes.

Important

All metadata is stored in dot files alongside its resource. So if anything bad happens to your index and it gets corrupted, you can always recreate the index based on those persistent dot files.

The metadata engine is not only for VFS resources; behind the scenes there's a powerful metadata API with which you can define your own MetaModel with custom MetaTypes, MetaObjects and MetaProperties.

The Metadata Search API is simple yet powerful. It provides essentially two methods: one for full text search (including wildcards and boolean operations) and one for searching by a set of predefined attributes.

For those curious to know what is under the hood, Metadata engine uses Apache Lucene 4 as its default backend, but was designed to be able to have different and eventually mixed/composed backends.

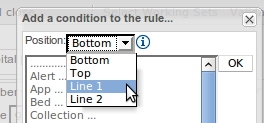

Drools Workbench is the cornerstone of the new functions and features for 6.0.

Many of the new concepts and functions are described in more detail in the following sections.

Important

Remember Drools Workbench is integrated into other distributions. Therefore many of the core concepts and functions are also within the other distributions. Furthermore, the jBPM web-based tooling extends from UberFire and re-uses features from Drools Workbench that are common across the KIE platform, such as the Project Explorer, Project Editor and the M2 Repository.

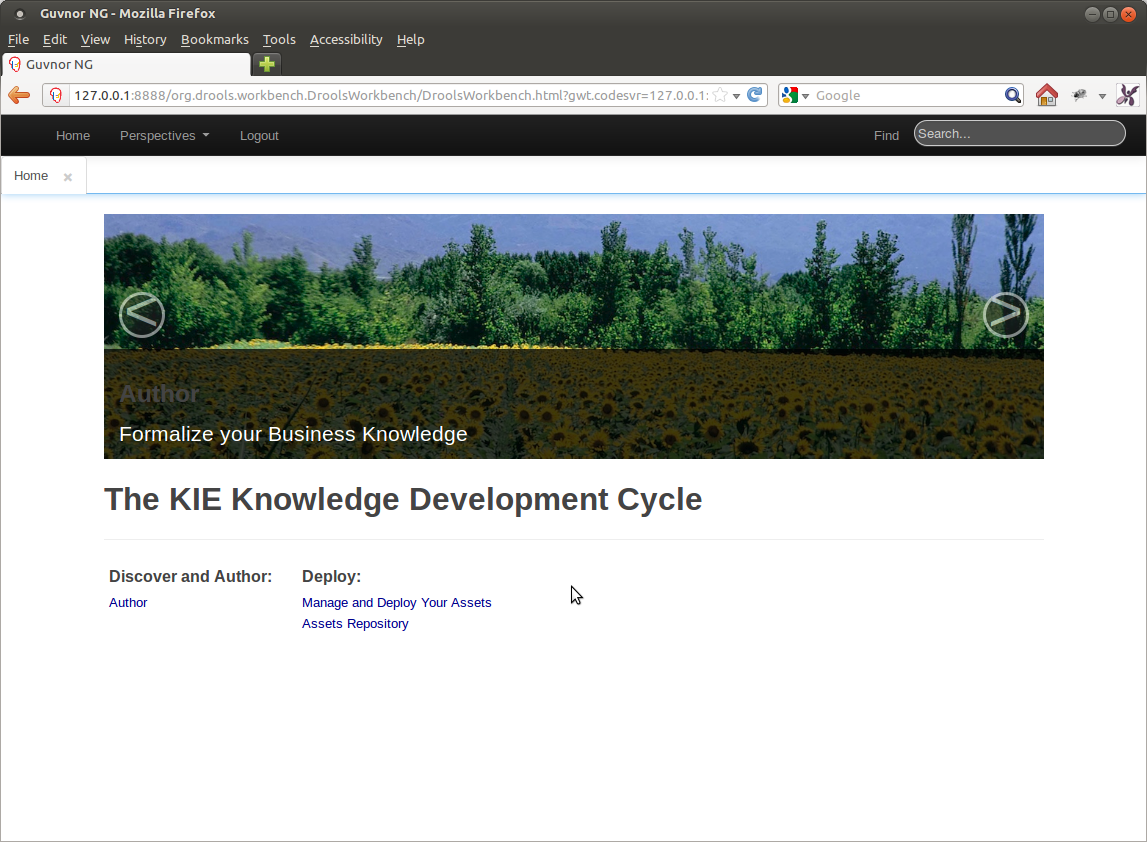

Home is where the heart is.

Drools Workbench starts with a Home Page that provides short-cut navigation to common tasks.

What is provided in this release is minimal and largely a cosmetic exercise to hopefully increase usability for non-technical people.

The appearance and content of the Home is likely to change as we approach a final release.

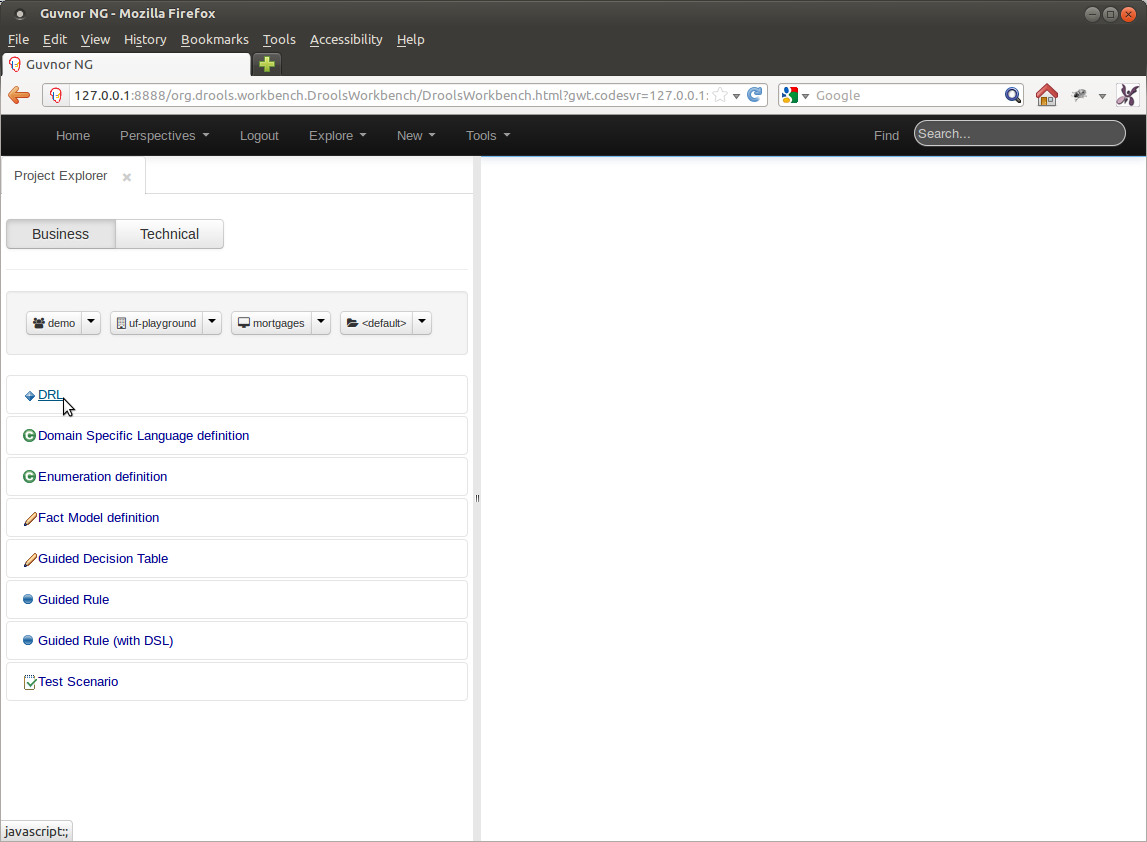

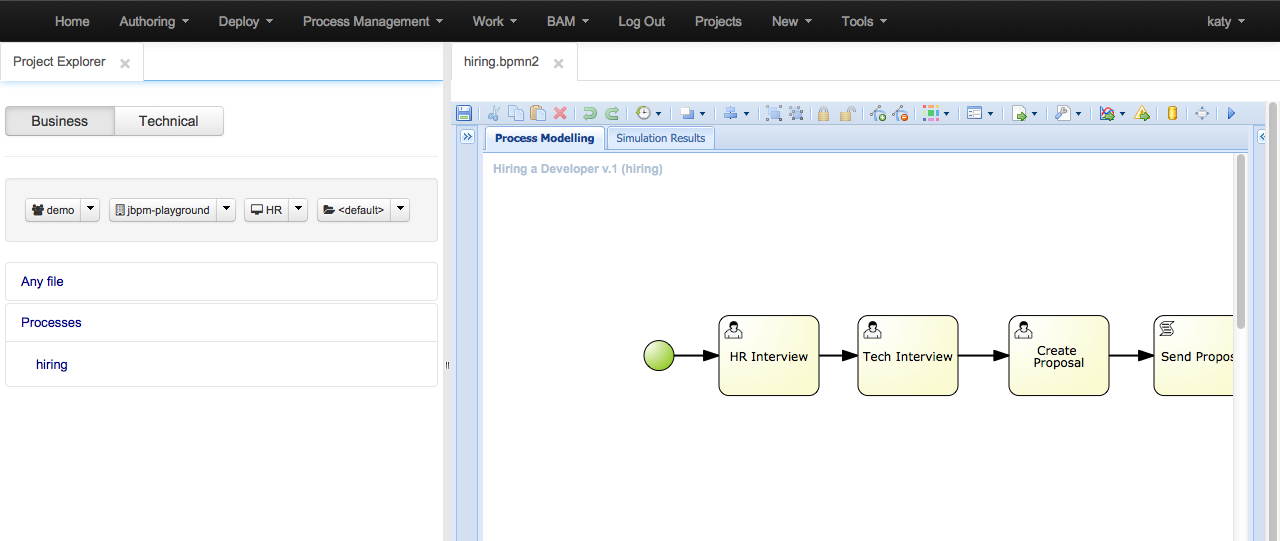

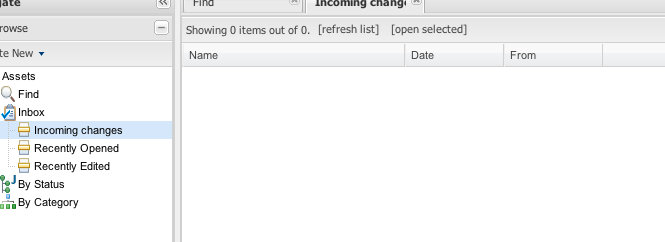

The Project Explorer is where most users will find themselves when authoring projects.

The Project Explorer shows four drop-down selectors that are driven by Group, Repository, Project and Package.

Group : The top level logical entity within the KIE world. This can represent a Company, Organization, Department etc.

Repository : Groups have access to one or more repositories. Default repositories are set up, but you can create or clone your own.

Project : Repositories contain one or more KIE projects. New Projects can be created using the "New" Menu option, provided a target repository is selected.

Package : The package concept is no different to Guvnor 5.5. Projects contain packages.

Once a Group, Repository, Project and Package are selected the Project Explorer shows the items within that context.

Warning

The Project Explorer will support both Business and Technical views. The Technical view will probably not make it into 6.0.0.Beta4.

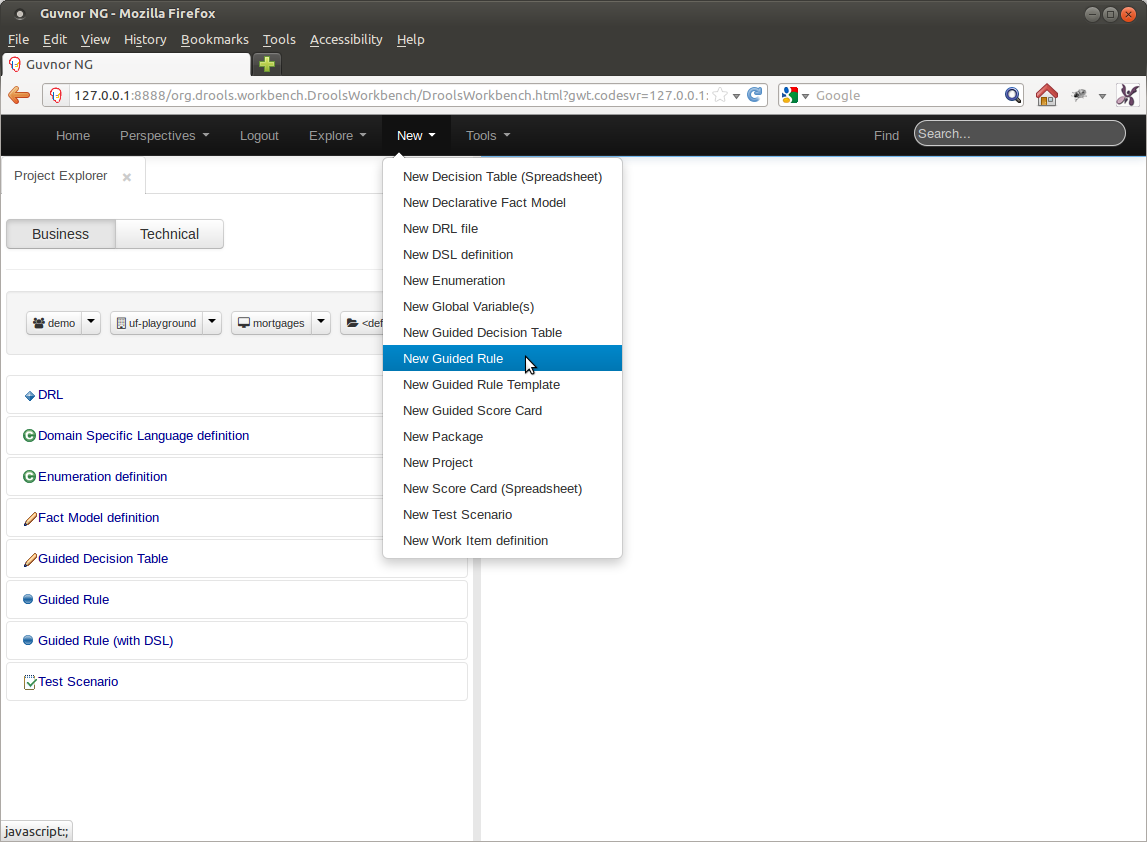

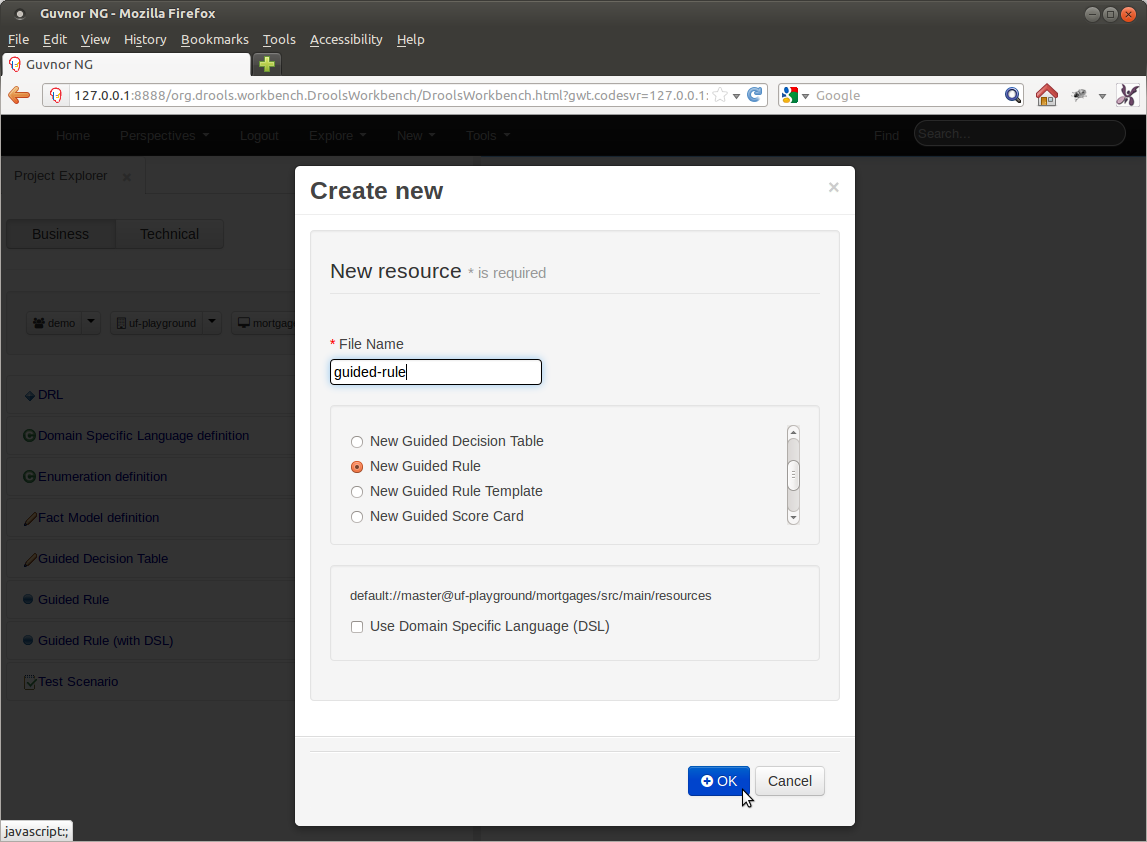

Creation of anything "new" is accomplished using the "New" menu option.

The types of things that can be created from the "New" menu option depend upon the selected context. Projects require a Repository; rules, tests etc. require a Package within a Project. New things are created in the context selected in the Project Explorer, i.e. if Repository 1, Project X is selected, new items will be created in Project X.

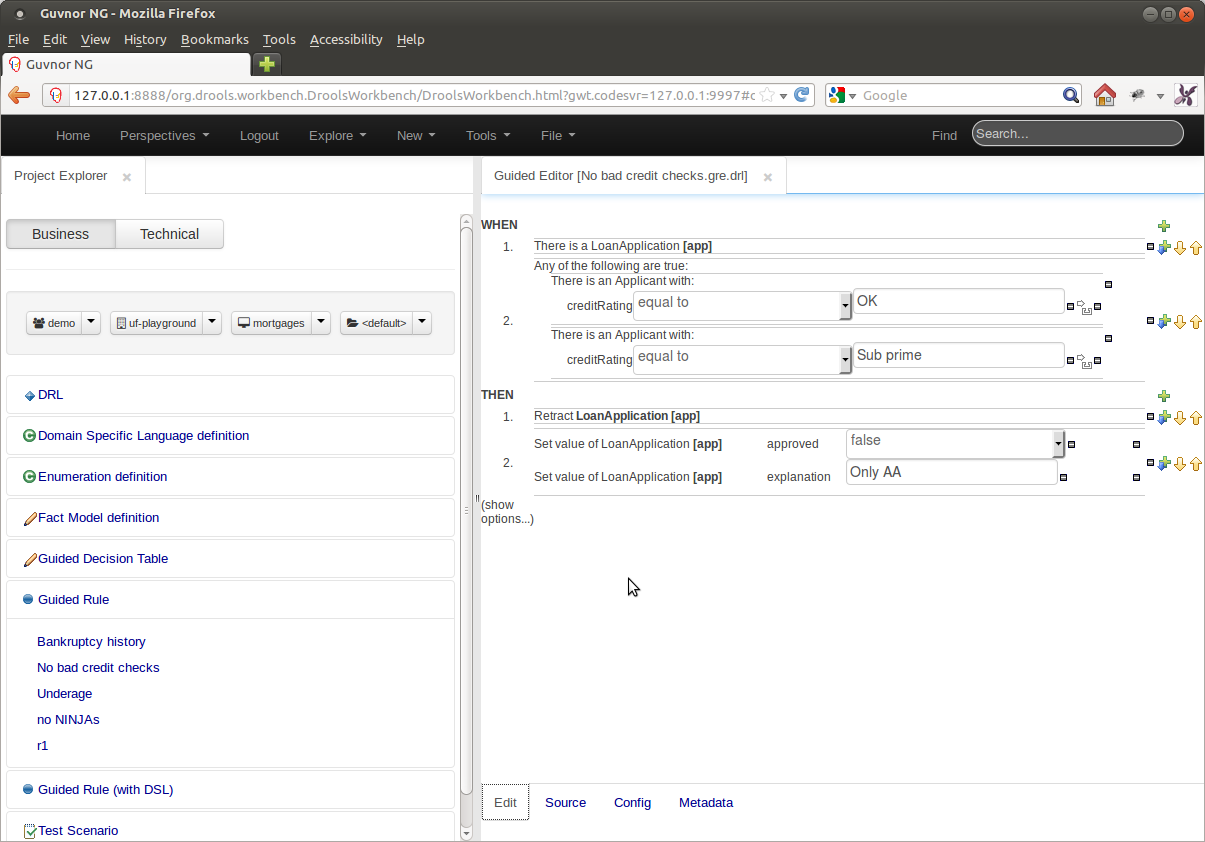

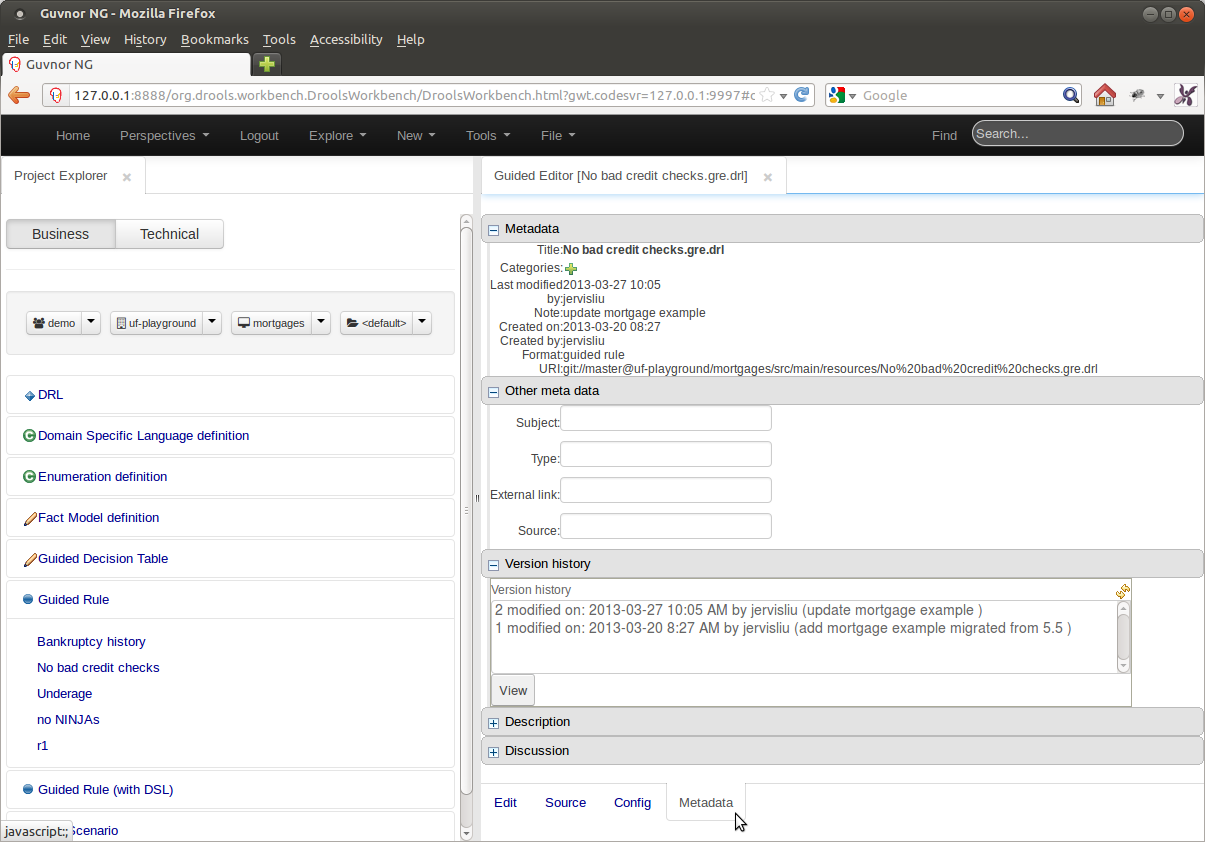

By and large, other than the specific changes mentioned in this document, all of the old Guvnor asset editors have been ported to 6.0 with little or no change.

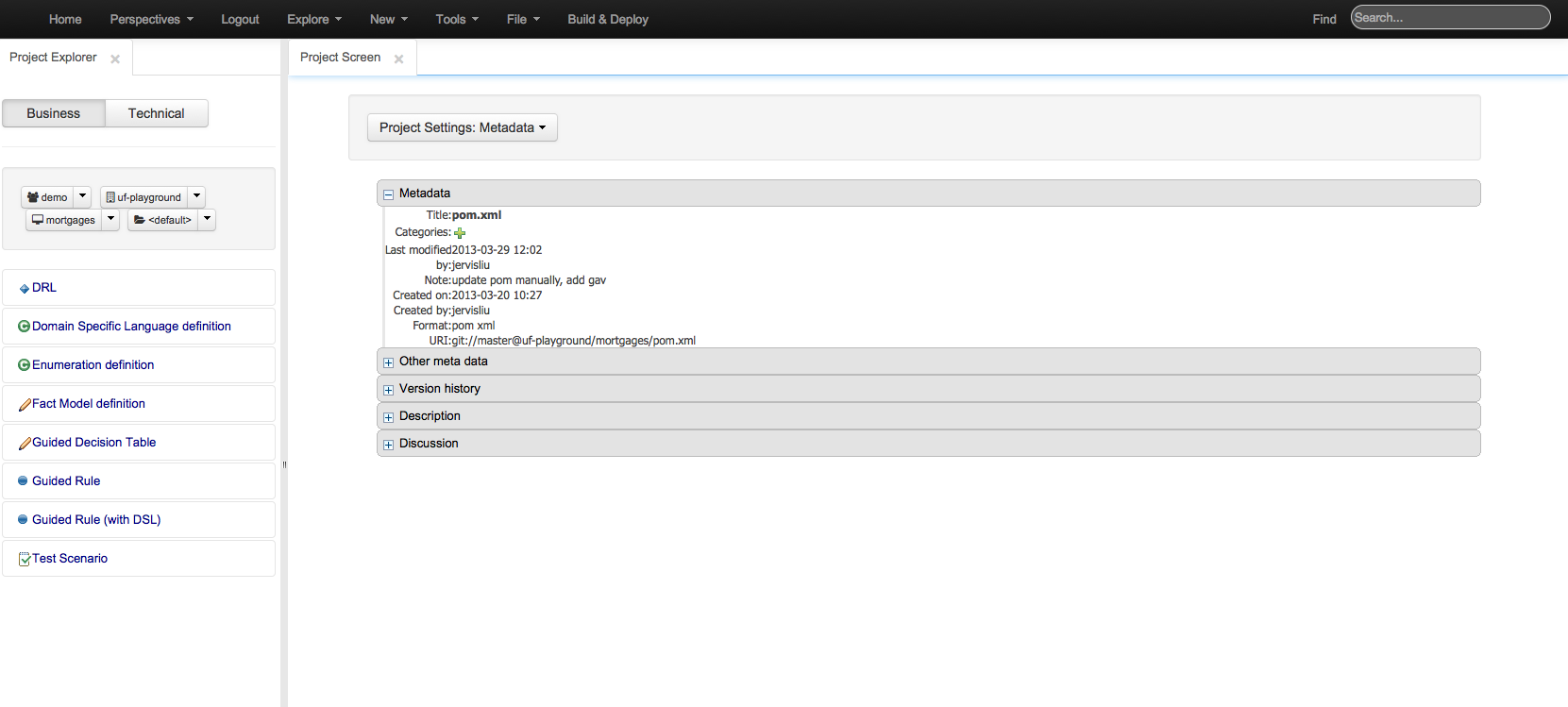

The layout of the workbench has however changed and the following screen-shots give an example of the new, common layout for most editors.

Asset editors have been ported to 6.0 with no or little change.

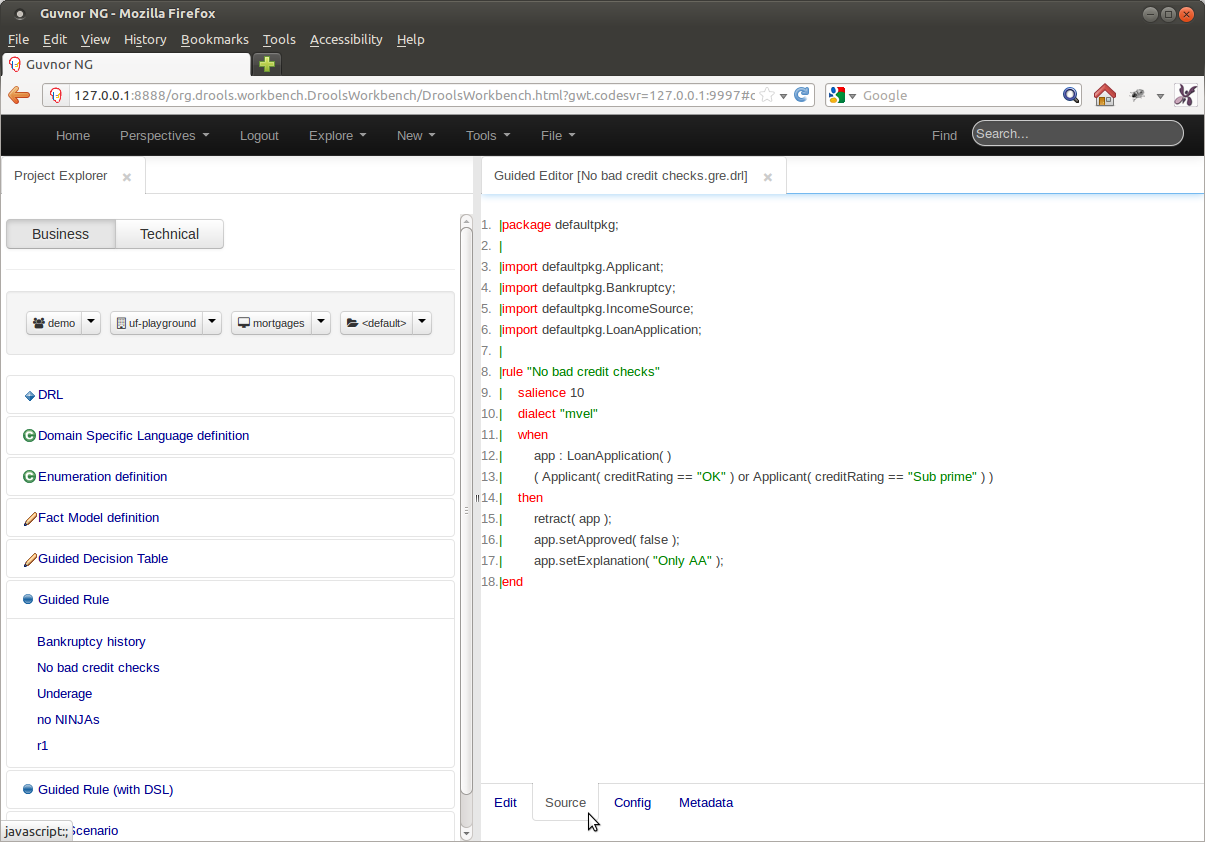

The (DRL) Source View of the asset has been moved to a mini-tab within the editor.

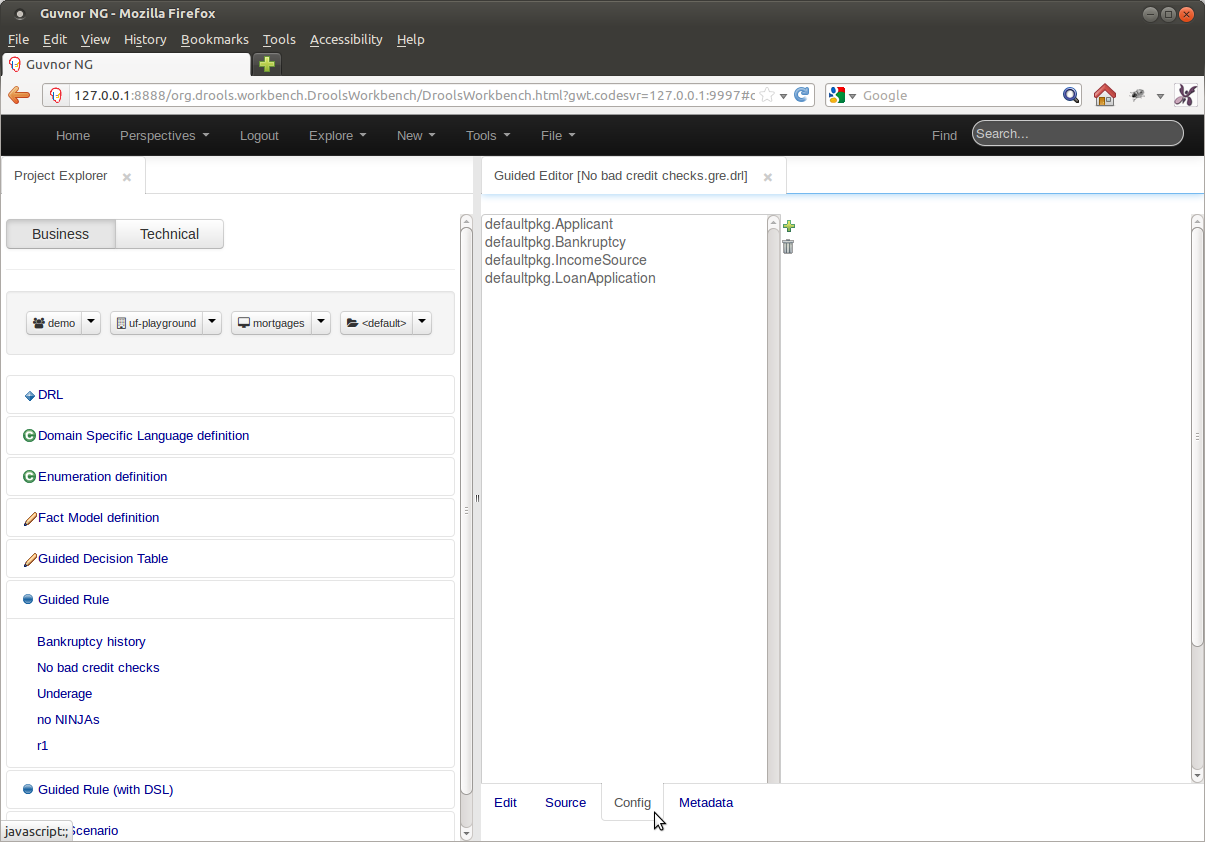

Types not within the package that contains the asset being authored need to be imported before they can be used.

Guvnor 5.5 had the user define package-wide imports from the Package Screen. Drools Workbench 6.0 has moved the selection of imports from the Package level to the Asset level; positioning the facility on a mini-tab within the editor.

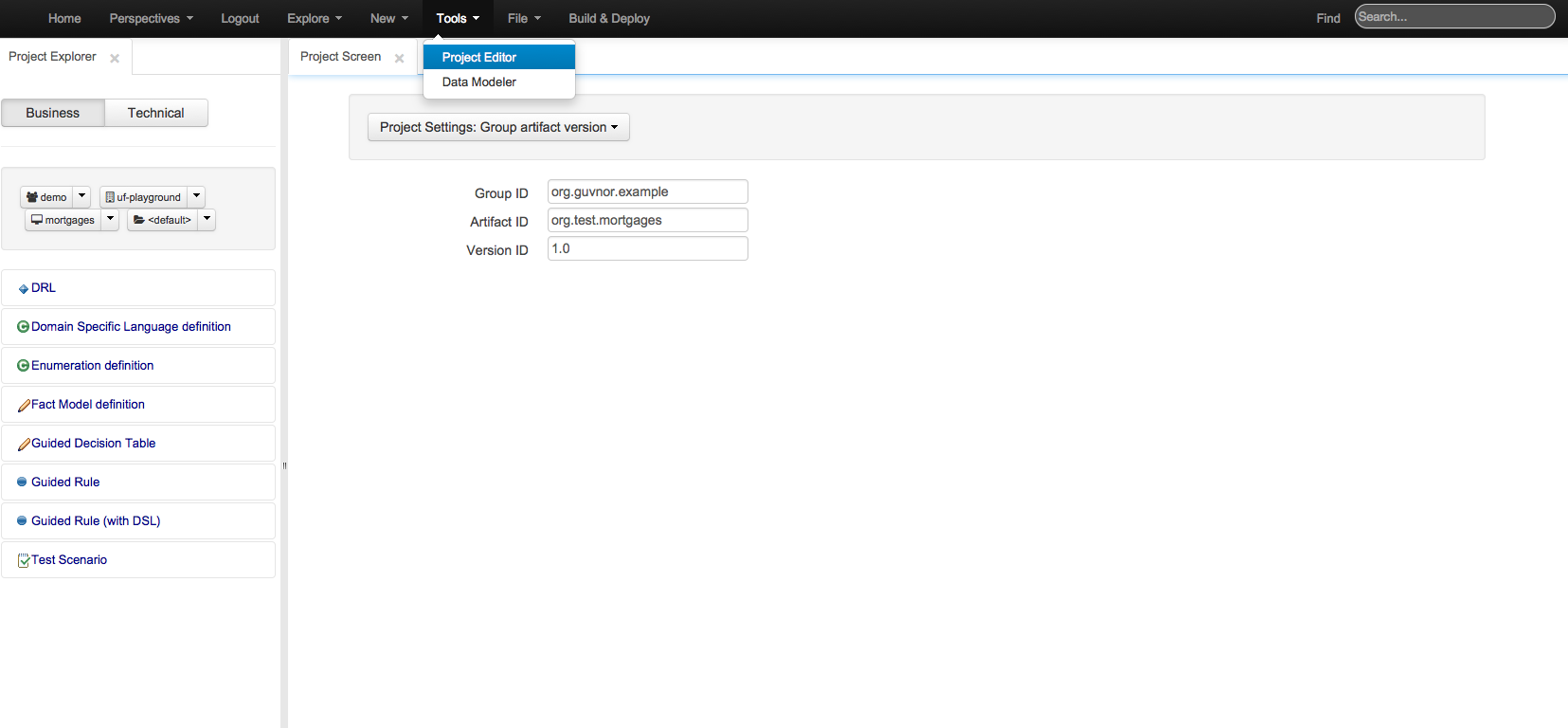

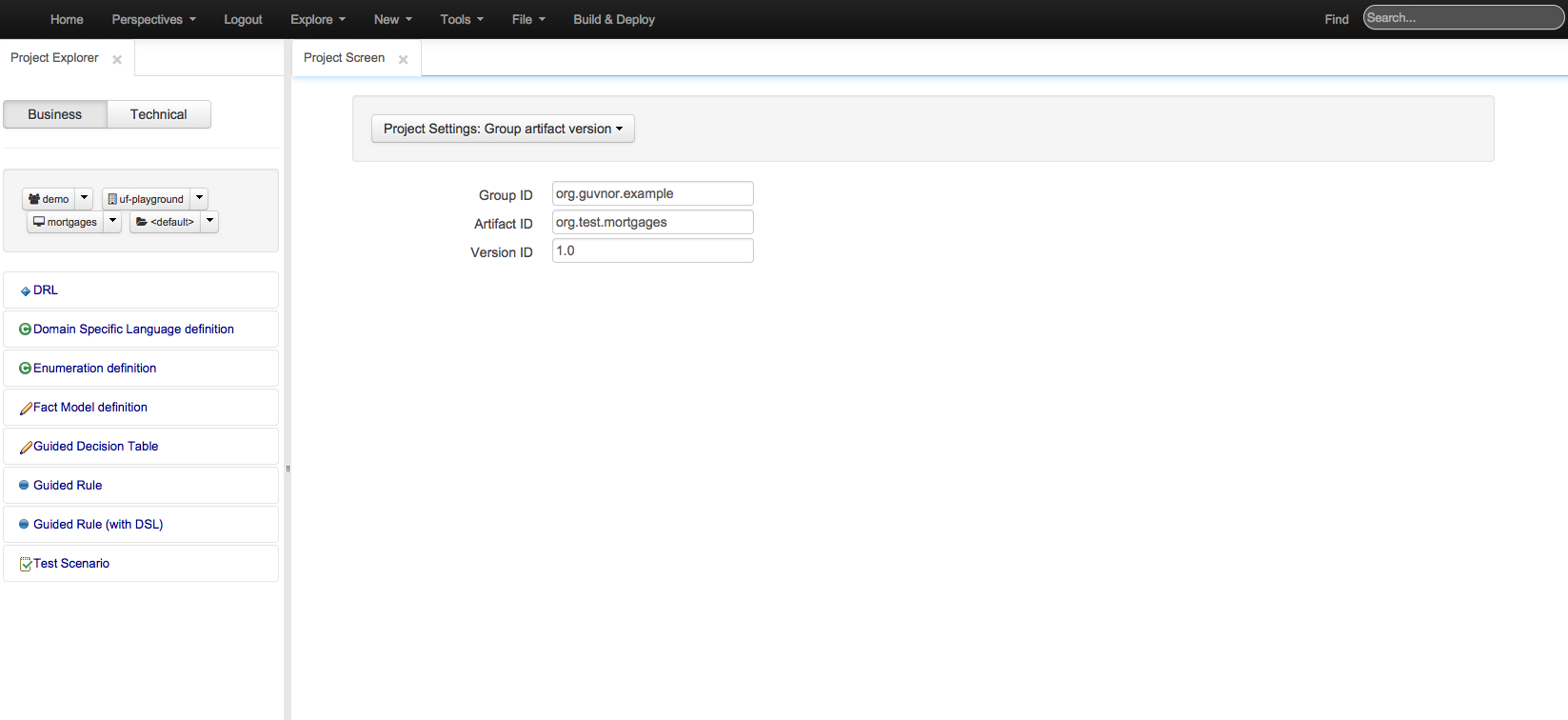

The Project Editor does what the Package Editor did previously: it manages the KIE projects. You can access the Project Editor from the menu bar Tools->Project Editor. The editor shows the configuration of the currently active project, and its content changes as you move around in your code repository.

Allows you to set the group, artifact and version IDs for the project. This screen effectively edits a pom.xml, since we use Maven to build our KIE projects.

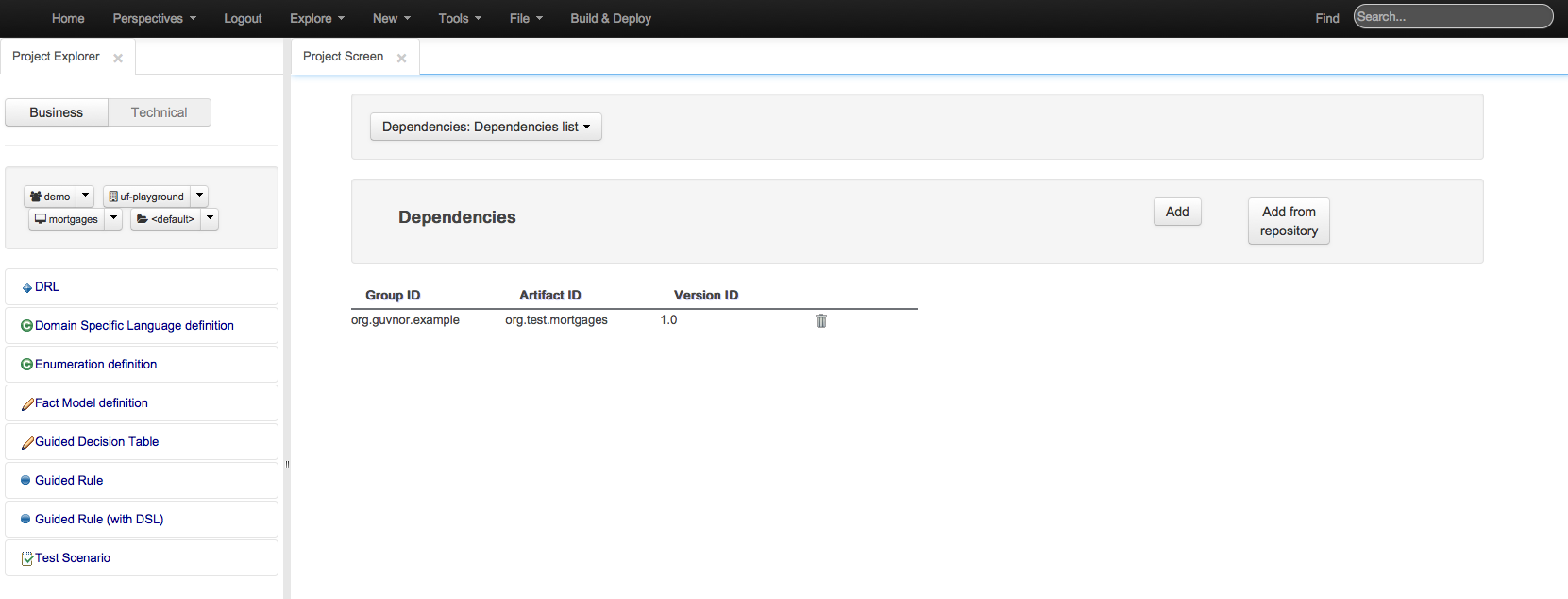

Lets you set the dependencies for the current project. It is possible to pick the resources from an internal repository.

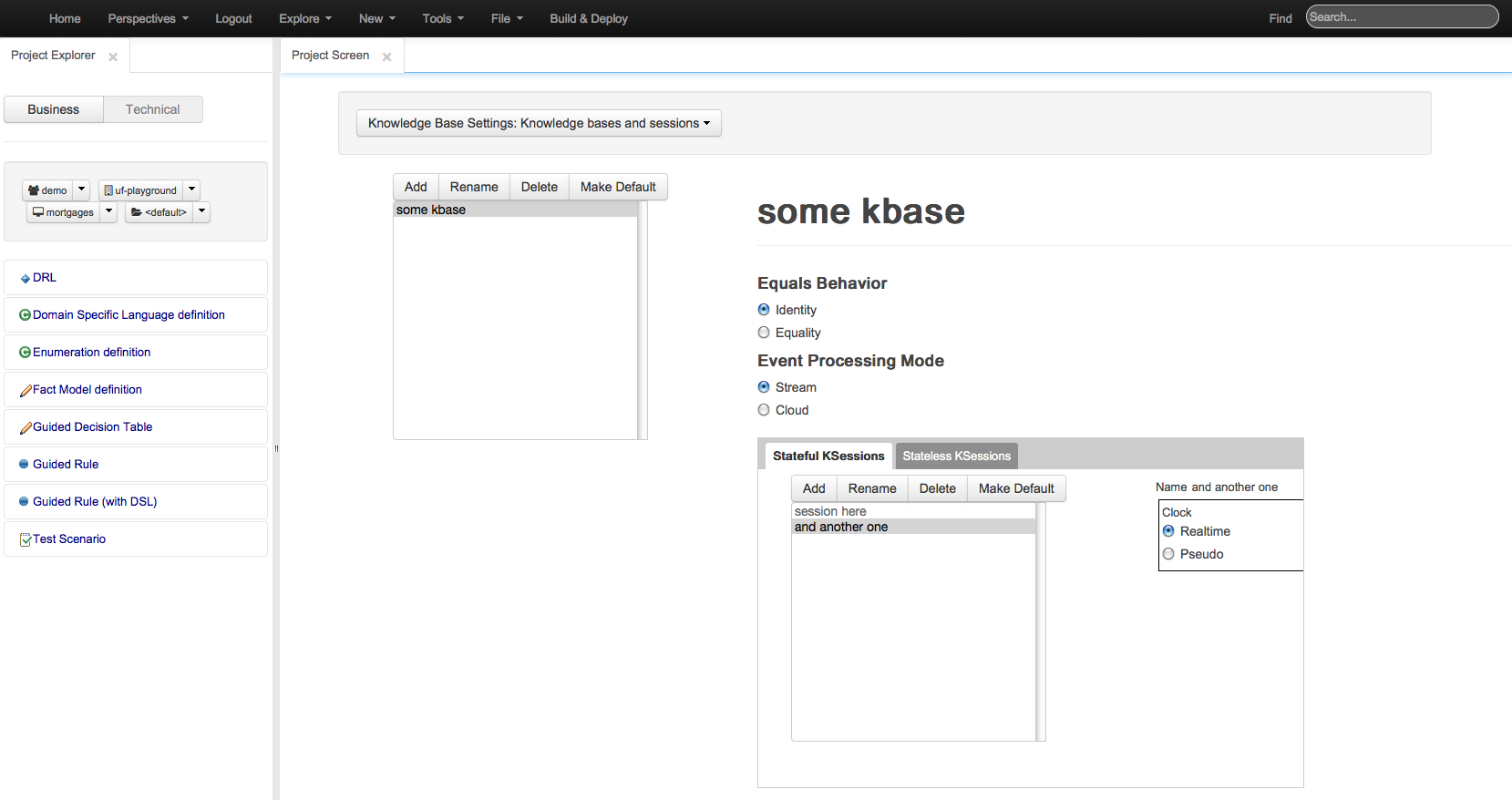

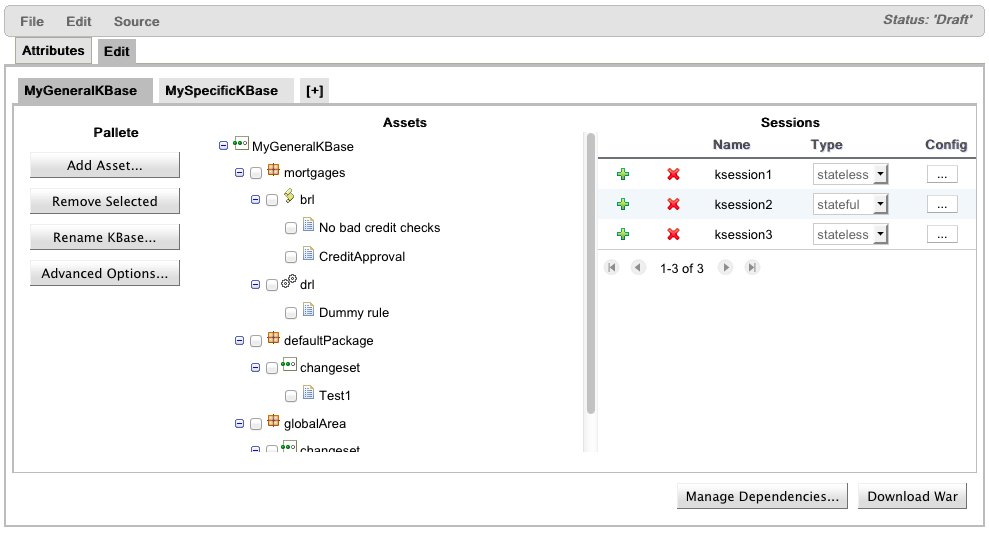

Editor for kmodule.xml, which is explained in more detail in the KIE core documentation. It contains editors for knowledge bases and knowledge sessions.

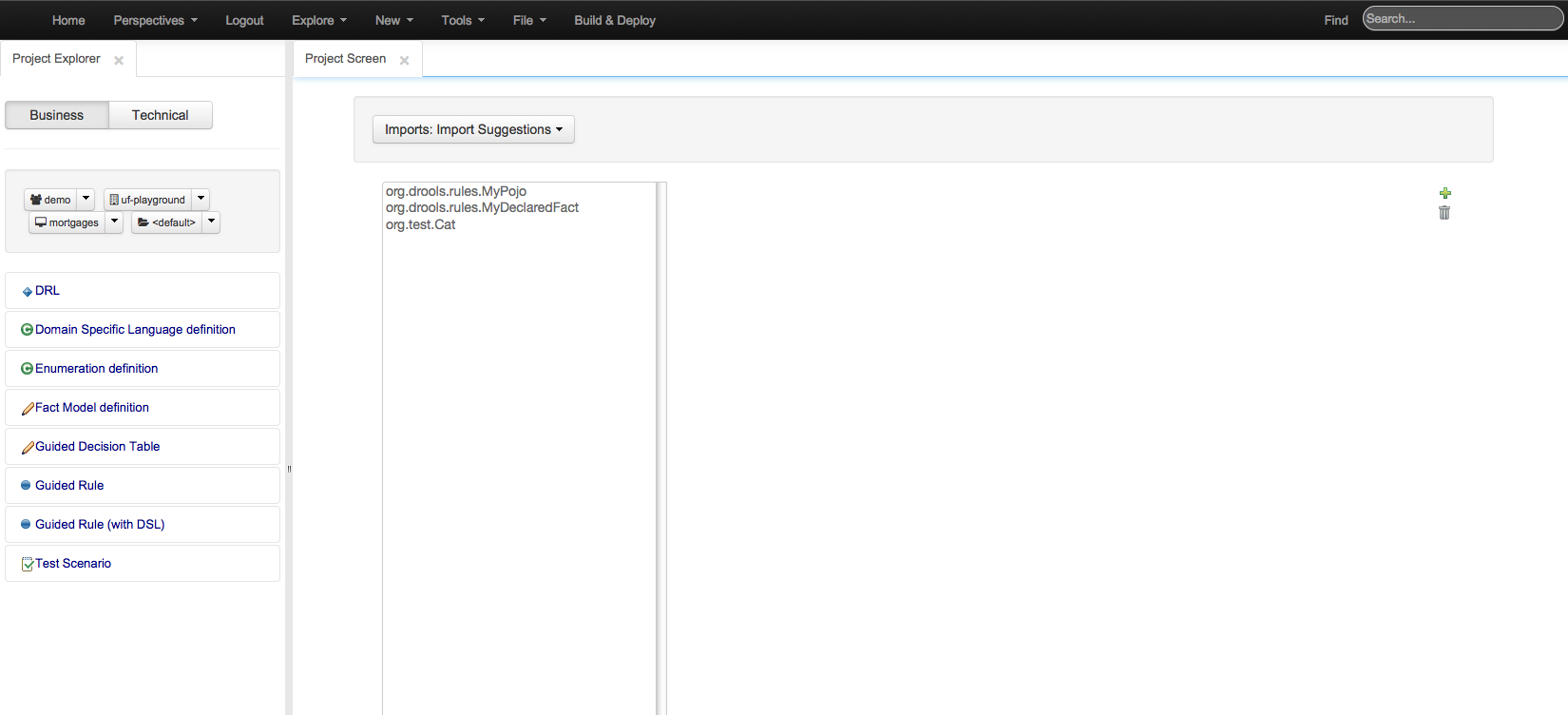

In import suggestions it is possible to specify a set of imports used in the project. Each editor now has its own imports, so these imports differ from what the Guvnor 5.x Package Editor had: they are no longer automatically used in each asset. They just make the automated editors in KIE workbench easier to use by suggesting fact types that the user might want to use.

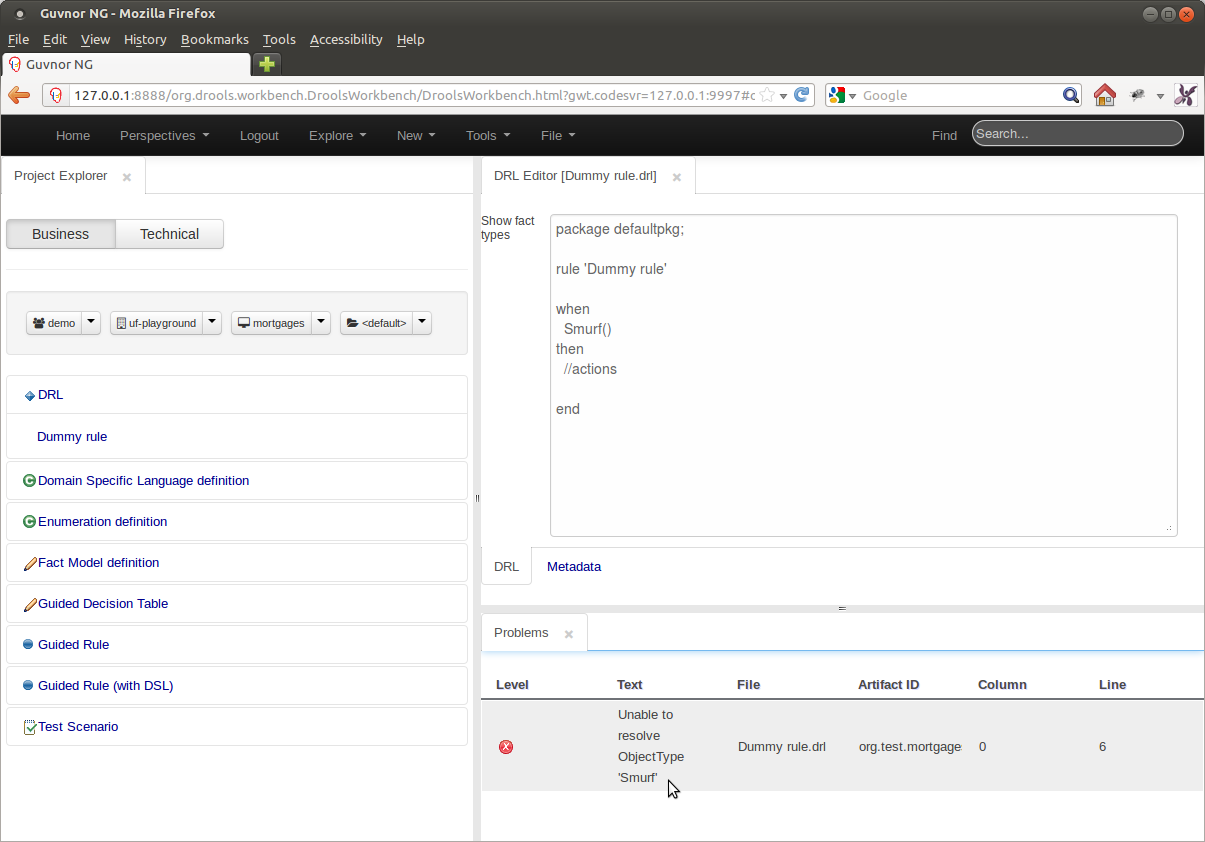

Whenever a Project is built or an asset is saved, it is validated.

In the example below, the Fact Type "Smurf" has not been imported into the project and hence cannot be resolved.

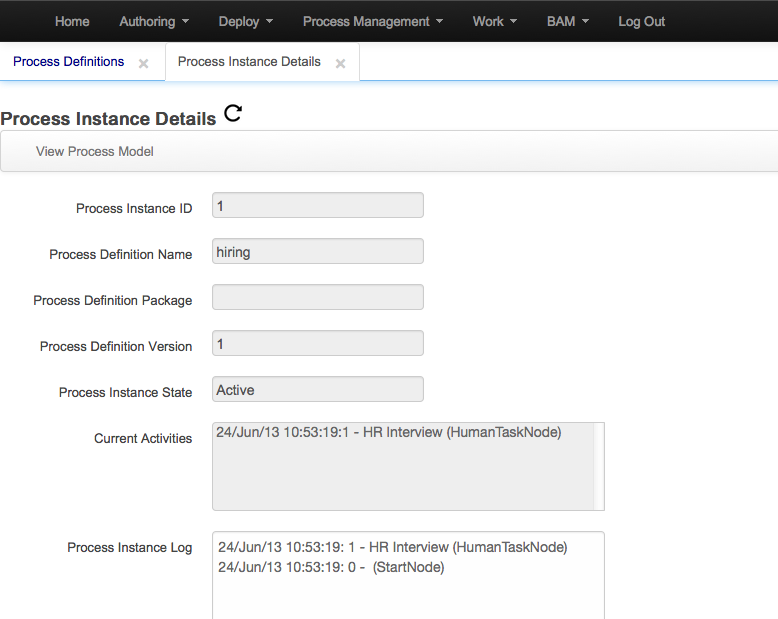

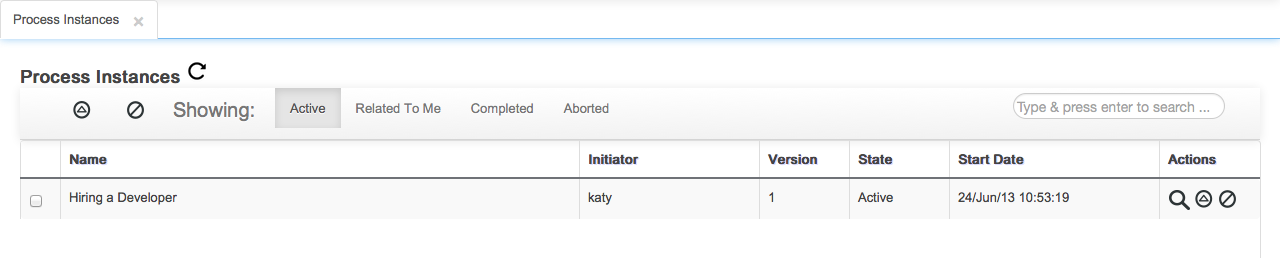

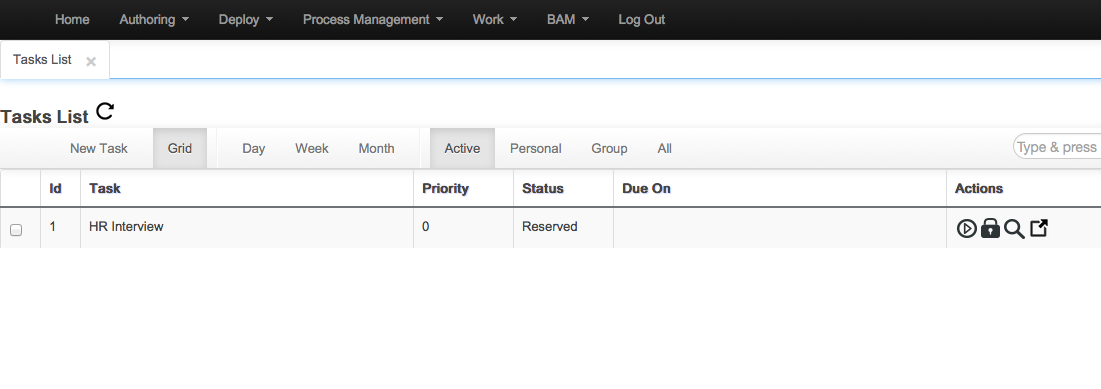

The following sections describe the improvements for the different components provided by the jBPM Console NG or the BPM related screens inside KIE Workbench for Beta4.

Business Oriented access to projects structures

Build and deploy projects containing processes with the standard Kmodule structure

Improvements on security domain configuration

Improvements on single sign on with the Dashboards application

Improved lists with search/filter capabilities

Access to the process model and process instance graph through the Process Designer

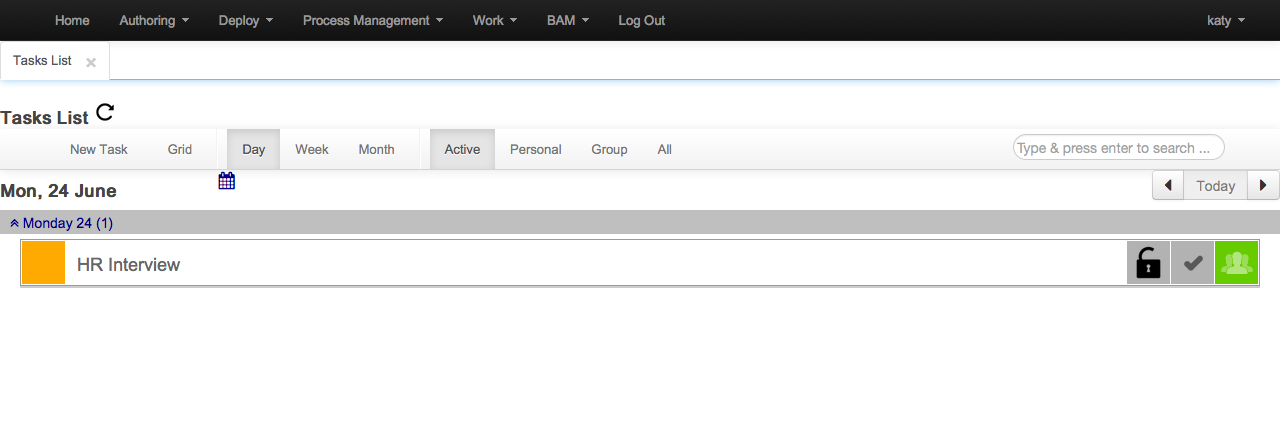

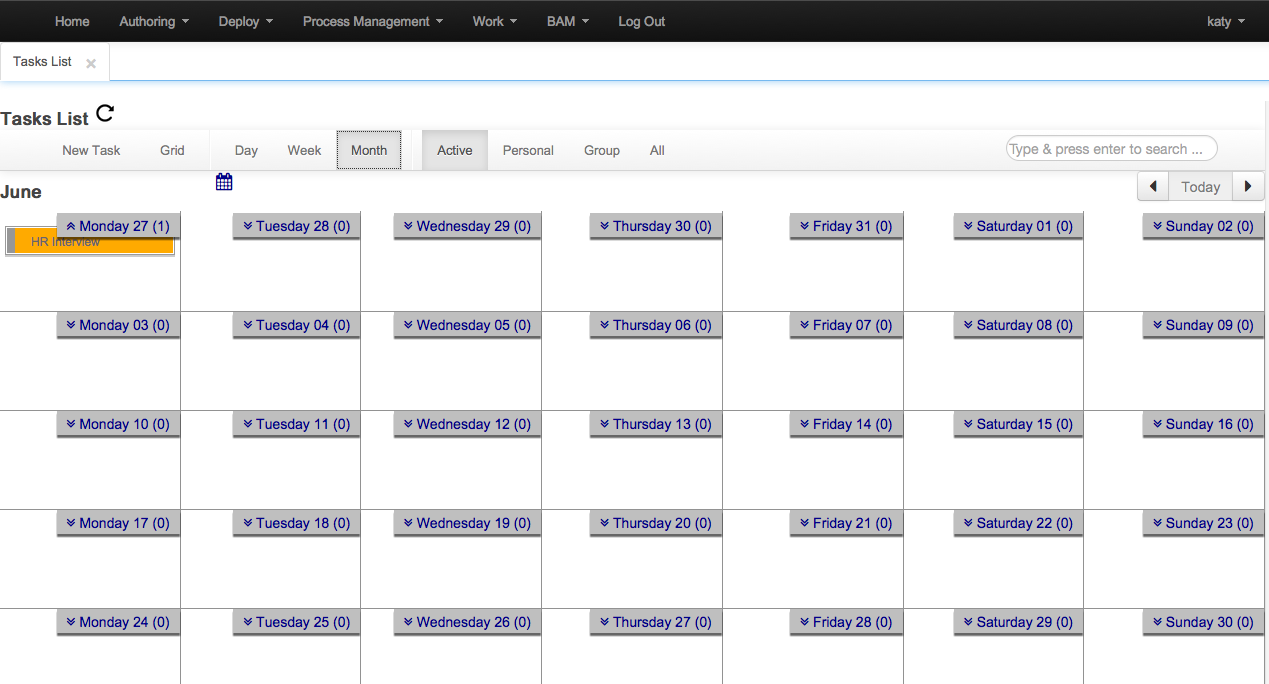

Calendar and Grid View merged into a single view

Filter capabilities improved

Search and filter is now possible

New task creation allows multiple users and groups to be set up for the task

You can now release every task that is assigned to you (WS-HT conformance)

Initial version of the assignments tab added

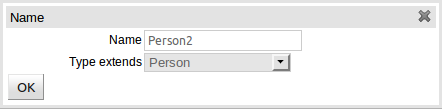

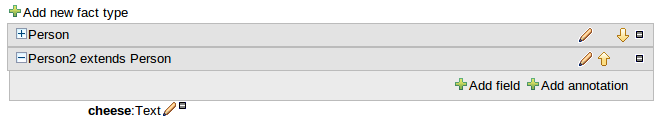

Typically, a business process analyst or data analyst will capture the requirements for a process or application and turn these into a formal set of data structures and their relationships. The new Data Modeller tool enables configuration of such data models (both logical and physical), without the need for explicit coding. Its main goals are to make data models into first class citizens in the process improvement cycle and allow for full process automation using data and forms, without the need for advanced development skills.

Simple data modelling UI

Allows adding conceptual information to the model (such as user friendly labels)

Common tool for both analysts and developers

Automatically generates all assets required for execution

Single representation enables developer roundtrip

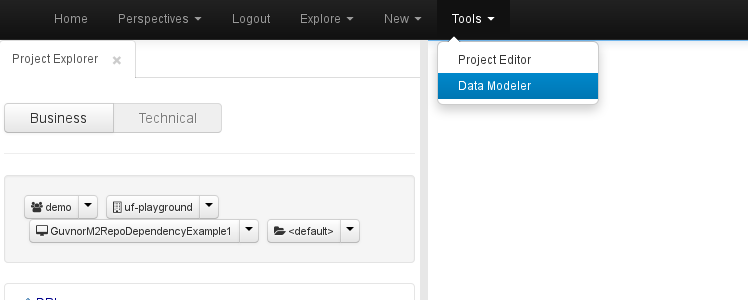

By default, whenever a new project is created, it automatically associates an empty data model to it. The currently active project's data model can be opened from the menu bar Tools-> Data Modeller:

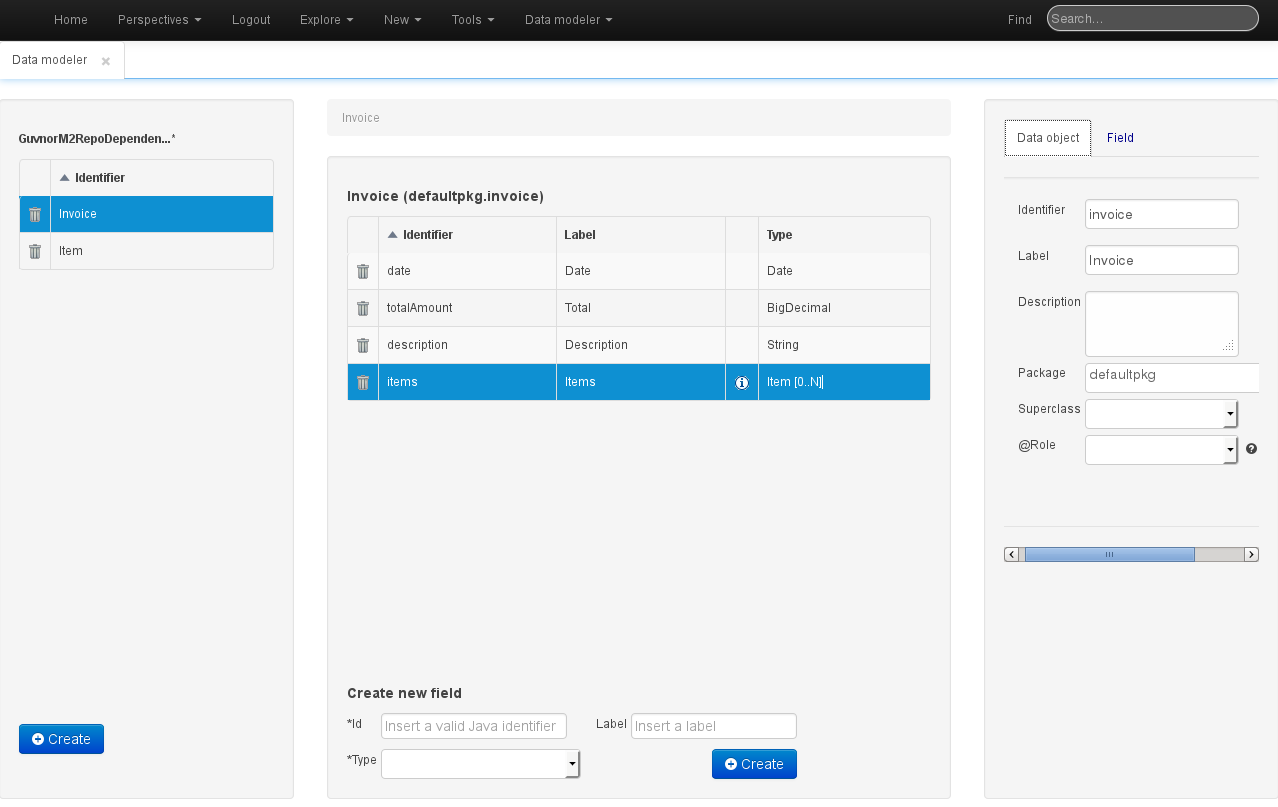

The basic aspect of the Data Modeller is shown in the following screenshot:

The Data Modeller screen is divided into the following sections:

Model browser: the leftmost section, which allows creation of new data objects and where the existing ones are listed.

Object browser: the middle section, which displays a table with the fields of the data object that has been selected in the Model browser. This section also enables creating new attributes for the currently selected object.

Object / Attribute editor: the rightmost section. This is a tabbed editor where either the currently selected object's properties (as currently shown in the screenshot), or a previously selected object attribute's properties, can be modified.

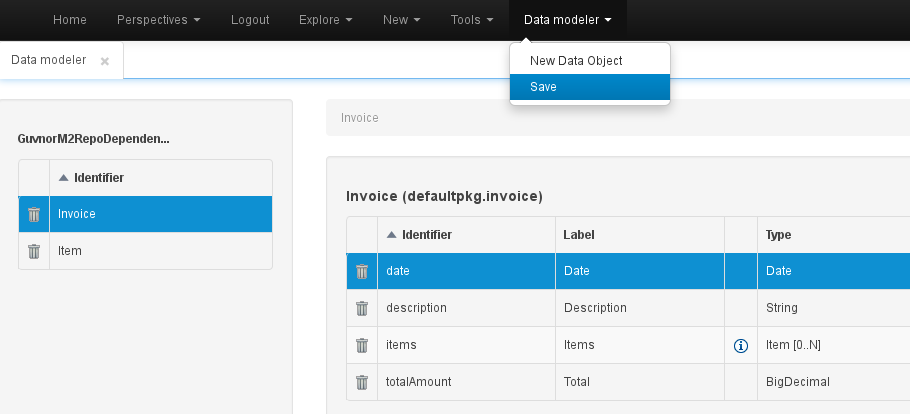

Whenever a data model screen is activated, an additional entry will appear in the top menu, allowing creation of new data objects, as well as saving the model's modifications. Saving the model will also generate all its assets (POJOs), which will then become available to the rest of the tools.

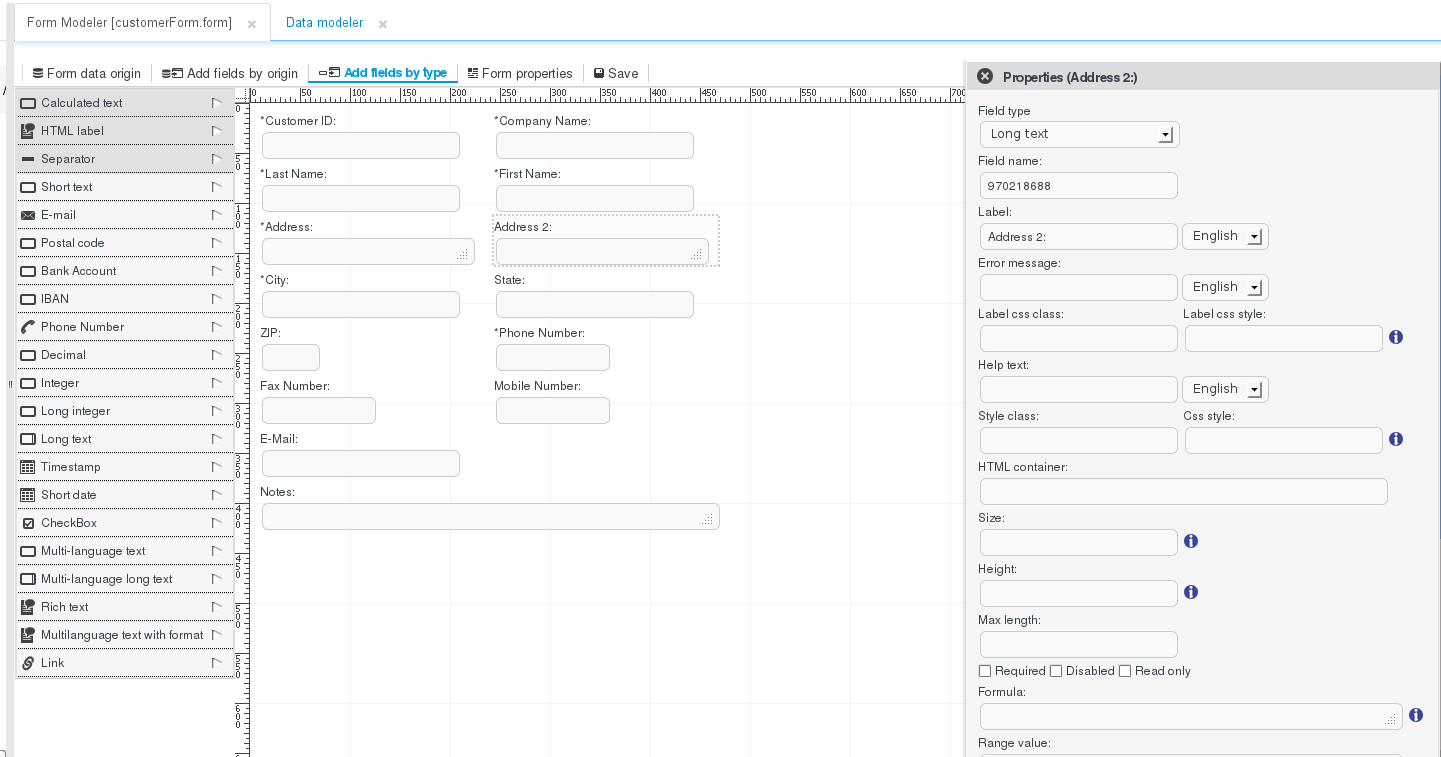

Being able to easily define data structures and their internal relationships, which is possible through the new Data Modeller utility, is only part of the picture: providing a tool that empowers analysts and developers to define the graphical interfaces to those data structures is of equal importance. The form designer tool allows non-technical users to configure complex data forms for data capture and visualization in a WYSIWYG fashion, define the relationships between those forms and the underlying data structure(s), and execute those forms inside the context of business processes.

Simple WYSIWYG UI for easy form modelling

Form autogeneration from datamodel/process task or java objects

Data binding for java objects

Customized form layouts

Forms embedding

Process / data analysts. Designing forms.

Developers. Advanced form configuration, such as adding formulas and expressions for dynamic form recognition.

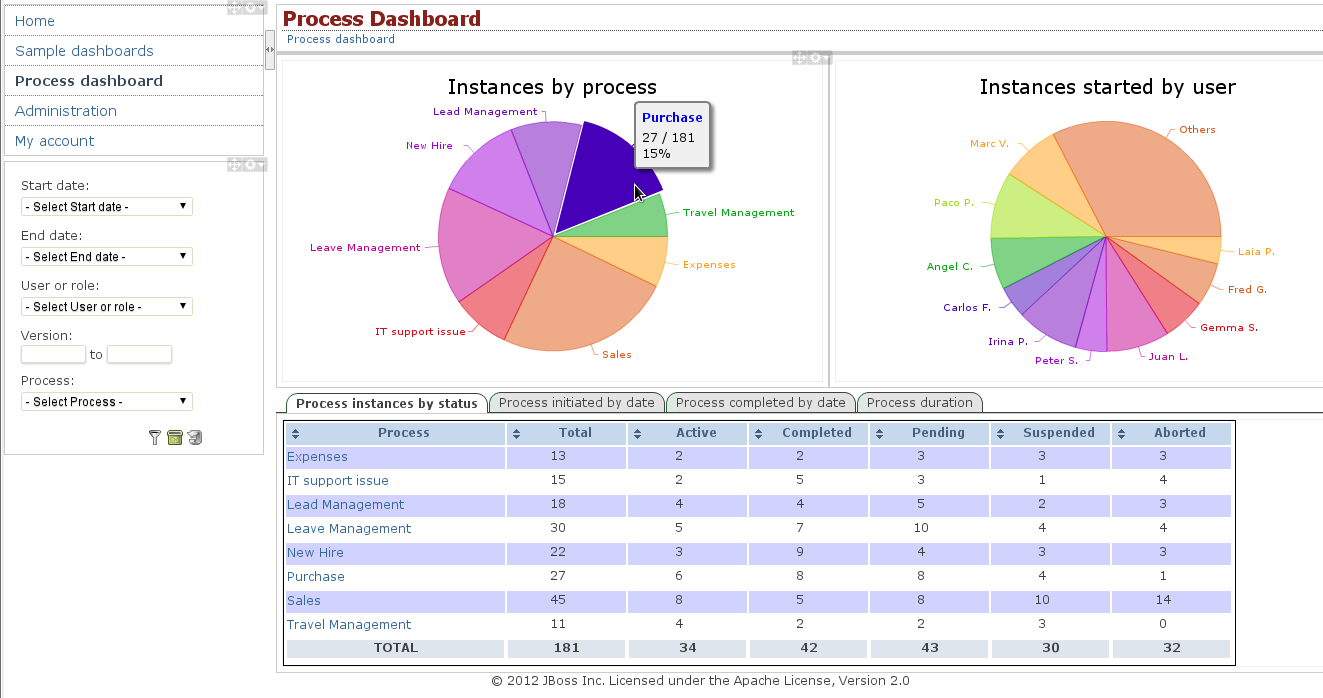

The ability to monitor the status of process instances and tasks is essential to evaluate the correctness of their design and implementation. With this in mind, the workbench integrates a complete process and task dashboard set, based on the "Dashboard Builder" tool (described below), which provides the views, data providers and key performance indicators that are needed to monitor the status of processes and tasks in real-time.

Visualization of process instances and tasks by process

Visualization of process instances and tasks by user

Visualization of process instances and tasks by date

Visualization of process instances and tasks by duration

Visualization of process instances and tasks by status

Filter by process, process status, process version, task, task status, task start- and end-date

Chart drill-down by process

Chart drill-down by user

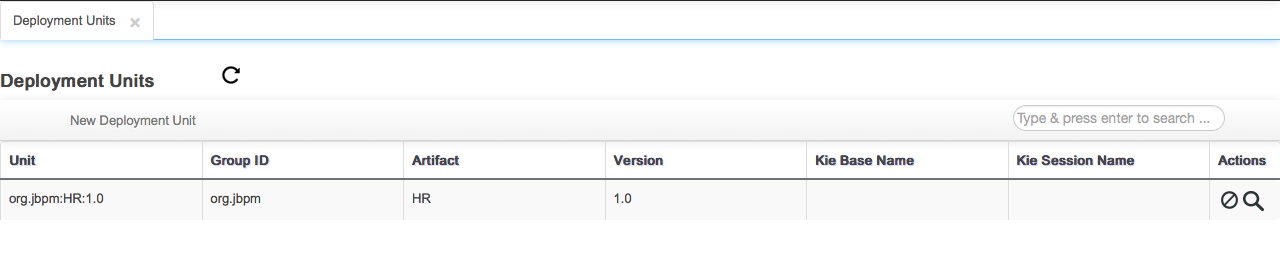

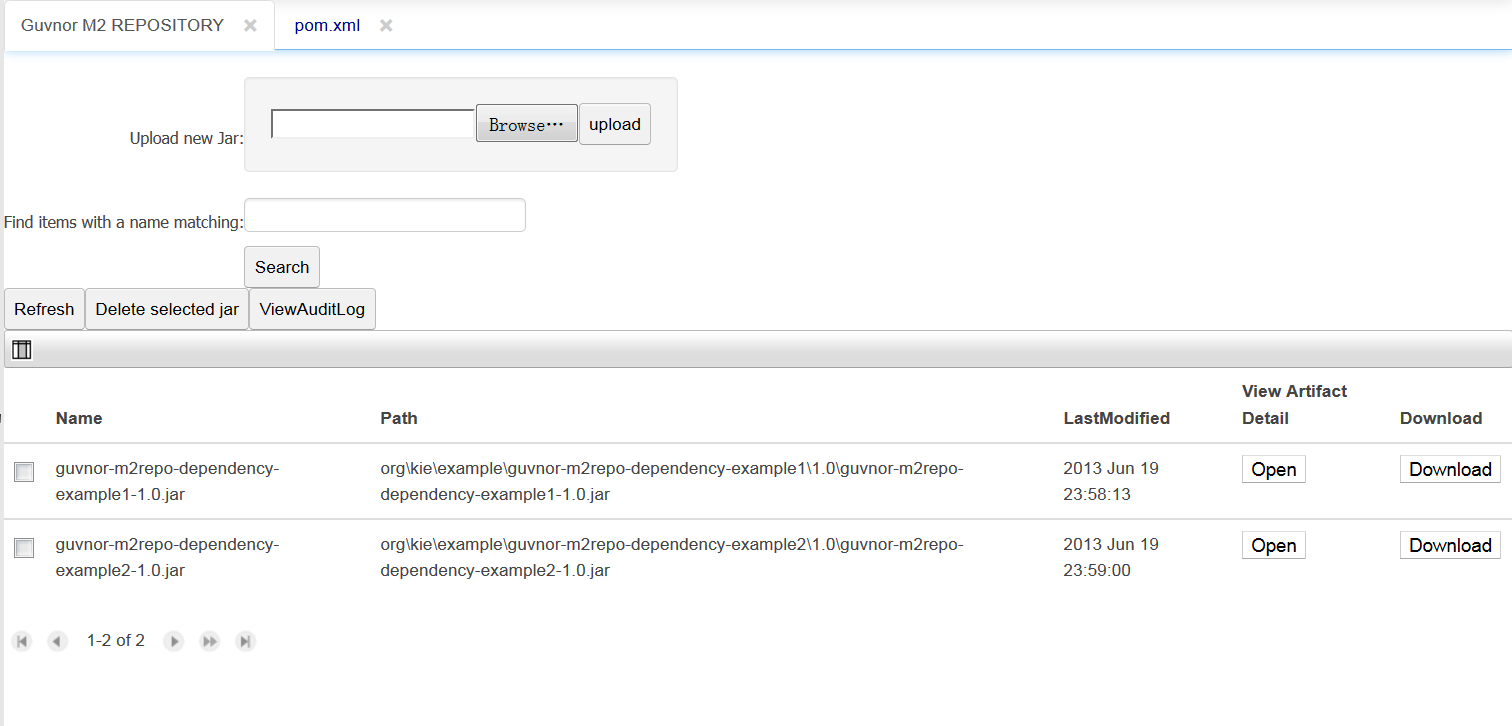

Upload, download and manage KJars with Guvnor M2 repository.

Upload KJars to Guvnor M2 repository:

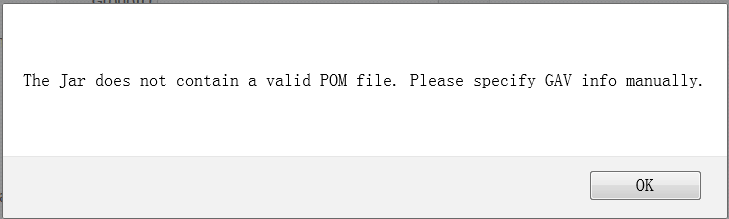

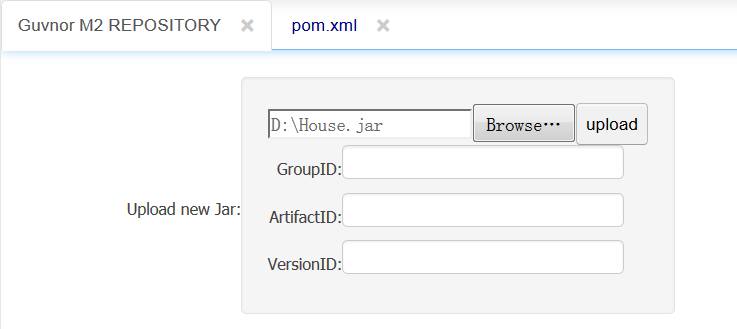

A KJar has a pom.xml (and pom.properties) that define the GAV and POJO model dependencies. If the pom.properties is missing the user is prompted for the GAV and a pom.properties file is appended to the JAR before being uploaded to Guvnor M2 repo.
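As a rough illustration of the GAV lookup described above, the following plain-Java sketch reads the `groupId`, `artifactId` and `version` keys that Maven writes into a pom.properties file. The class name `GavReader` and the sample coordinates are hypothetical and not part of the workbench; a `null` result stands for the "pom.properties is missing or incomplete" case in which the user would be prompted.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

// Hypothetical helper, not part of Guvnor: extracts the GAV from pom.properties content.
final class GavReader {

    /** Returns "groupId:artifactId:version", or null if any of the three keys is missing. */
    static String readGav(String pomPropertiesContent) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(pomPropertiesContent));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        String groupId = props.getProperty("groupId");
        String artifactId = props.getProperty("artifactId");
        String version = props.getProperty("version");
        if (groupId == null || artifactId == null || version == null) {
            return null; // incomplete: the caller would have to ask the user for the GAV
        }
        return groupId + ":" + artifactId + ":" + version;
    }

    public static void main(String[] args) {
        // Sample coordinates are made up for illustration.
        String content = "groupId=org.example\nartifactId=my-kjar\nversion=1.0.0\n";
        System.out.println(readGav(content));
    }
}
```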

The Guvnor M2 REPO is exposed via REST with URL pattern http://{ServerName}:{httpPort}/{droolsWBWarFilename}/rest

jcr2vfs is a CLI tool that migrates a legacy Guvnor repository to 6.0.

runMigration --help

usage: runMigration [options...]

-f,--forceOverwriteOutputVfsRepository Force overwriting the Guvnor 6 VFS repository

-h,--help help for the command.

-i,--inputJcrRepository <arg> The Guvnor 5 JCR repository

-o,--outputVfsRepository <arg> The Guvnor 6 VFS repository

The GuvnorNG REST API provides access to project "service" resources, i.e., any "service" that is not part of the underlying persistence mechanism (i.e., Git or VFS).

The HTTP address to use as base address is http://{ServerName}:{httpPort}/{droolsWBWarFilename}/rest where ServerName is the host name of the server on which drools-wb is deployed, httpPort is the port number (8080 by default in development) and droolsWBWarFilename is the name of the deployed archive (drools-workbench-6.0.0 for version 6.0) without the extension.
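A minimal sketch of how the three parts combine into the base address, assuming the port follows the host name after a colon as in any standard HTTP URL. The helper class `RestBaseUrl` is hypothetical and only concatenates strings; it does not call the workbench.

```java
// Hypothetical helper: assembles the REST base address from its three parts.
final class RestBaseUrl {

    static String of(String serverName, int httpPort, String warFilename) {
        // warFilename is the deployed archive name without its extension
        return "http://" + serverName + ":" + httpPort + "/" + warFilename + "/rest";
    }

    public static void main(String[] args) {
        // localhost is an assumed host name; 8080 and the archive name come from the text above
        System.out.println(of("localhost", 8080, "drools-workbench-6.0.0"));
    }
}
```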

Check this doc for API details: https://app.apiary.io/jervisliu/editor

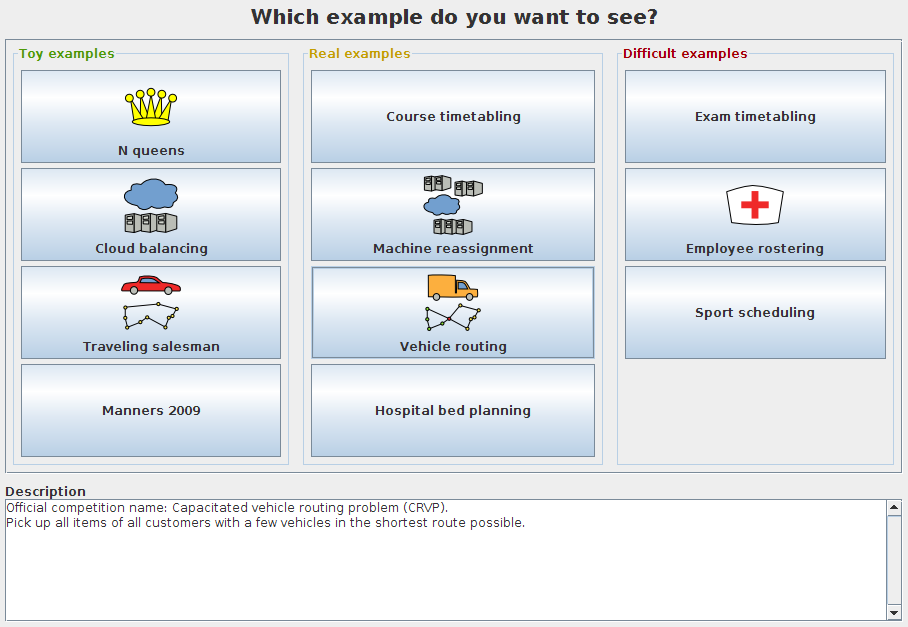

OptaPlanner is the new name for Drools Planner. OptaPlanner is now standalone, but can still be optionally combined with the Drools rule engine for a powerful declarative approach to planning optimization.

OptaPlanner has a new website (http://www.optaplanner.org), a new groupId/artifactId and its own IRC channel. It's a rename, not a fork. It's still the same license (ASL), same team, ...

For more information, see the full announcement.

The new ConstraintMatch system is:

Faster: the average calculate count per second increases by between 7% and 40% per use case.

Easier to read and write

Far less error-prone. It's much harder to cause score corruption in your DRL.

Before:

rule "conflictingLecturesSameCourseInSamePeriod"

when

...

then

insertLogical(new IntConstraintOccurrence("conflictingLecturesSameCourseInSamePeriod", ConstraintType.HARD,

-1,

$leftLecture, $rightLecture));

end

After:

rule "conflictingLecturesSameCourseInSamePeriod"

when

...

then

scoreHolder.addHardConstraintMatch(kcontext, -1);

end

Notice that you no longer need to repeat the ruleName or the causes (the lectures). OptaPlanner figures it out itself through the kcontext variable. Drools automatically exposes the kcontext variable in the RHS, so you don't need any extra code for it.

You also no longer need to hack the APIs to get a list of all ConstraintOccurrence instances: the ConstraintMatch objects (and their totals per constraint) are available directly on the ScoreDirector API.

For more information, see the blog post and the manual.

Implementing the Solution's planning clone method is now optional.

This means there's less boilerplate code to write and it's harder to cause score corruption in your code.

For more information, see the blog post and the manual.

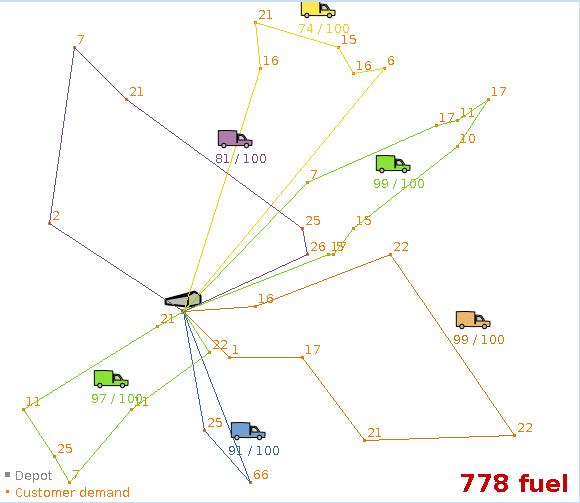

Several improvements have made use cases like TSP and VRP more scalable. The code has been optimized to run significantly faster. Also, <subChainSelector> now supports <maximumSubChainSize> to scale out better.

New built-in score definitions include: HardMediumSoftScore (3 score levels), BendableScore (configurable number of score levels), HardSoftLongScore, HardSoftDoubleScore, HardSoftBigDecimalScore, ...

A planning variable can now have a listener which updates a shadow planning variable.

A shadow planning variable is a variable that is never changed directly, but can be calculated based on the state of the genuine planning variables. For example: in VRP with time windows, the arrival time at a customer can be calculated based on the previous customers of that vehicle.
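To make the arrival-time example concrete, here is a plain-Java sketch of how such a derived value could be recomputed along one vehicle's chain of customers. It does not use the OptaPlanner API; the class, the parameter names and the waiting rule (a vehicle that arrives before a customer's ready time waits until then) are assumptions made for illustration.

```java
import java.util.Arrays;

// Illustrative only: computes arrival times from the order of customers on one vehicle.
final class ArrivalTimeSketch {

    /**
     * travelTimes[i]      travel time from the previous stop to customer i
     * readyTimes[i]       earliest time at which service at customer i may start
     * serviceDurations[i] time spent serving customer i
     */
    static long[] arrivalTimes(long departure, long[] travelTimes,
                               long[] readyTimes, long[] serviceDurations) {
        long[] arrival = new long[travelTimes.length];
        long previousDeparture = departure;
        for (int i = 0; i < travelTimes.length; i++) {
            arrival[i] = previousDeparture + travelTimes[i];
            long serviceStart = Math.max(arrival[i], readyTimes[i]); // wait if too early
            previousDeparture = serviceStart + serviceDurations[i];
        }
        return arrival;
    }

    public static void main(String[] args) {
        long[] arrival = arrivalTimes(0,
                new long[] {10, 5, 7},   // travel times
                new long[] {0, 30, 0},   // ready times
                new long[] {2, 3, 1});   // service durations
        System.out.println(Arrays.toString(arrival));
    }
}
```

Changing the order of customers (the genuine variable) changes every later arrival time, which is why the value is derived rather than assigned directly.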

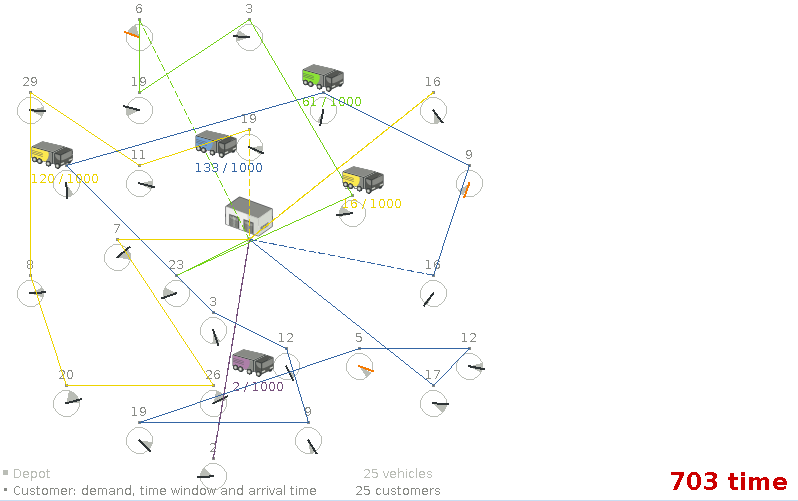

The VRP example can now also handle the capacitated vehicle routing problem with time windows.

Use the import button to import a time windowed dataset.

A shadow planning variable can now be mappedBy a genuine variable. Planner will automatically update the shadow variable if the genuine variable is changed. Currently this is only supported for chained variables.

The best solution mutation statistic shows for every new best solution found, how many variables needed to change to improve the last best solution.

The step score statistic shows how the step score evolves over time.
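The idea behind the best solution mutation statistic can be sketched as a simple count of the planning variable values that differ between two consecutive best solutions. This is a plain-Java illustration under the assumption that each solution is reduced to an array of variable values in the same order; it is not the Benchmarker's implementation.

```java
import java.util.Objects;

// Illustrative only: counts how many variable values changed between two best solutions.
final class MutationCountSketch {

    static int mutationCount(Object[] previousBest, Object[] newBest) {
        if (previousBest.length != newBest.length) {
            throw new IllegalArgumentException("Both solutions must have the same variables");
        }
        int count = 0;
        for (int i = 0; i < previousBest.length; i++) {
            if (!Objects.equals(previousBest[i], newBest[i])) {
                count++;
            }
        }
        return count;
    }

    public static void main(String[] args) {
        // Hypothetical period assignments for three lectures
        String[] previous = {"period1", "period2", "period3"};
        String[] next = {"period1", "period4", "period3"};
        System.out.println(mutationCount(previous, next));
    }
}
```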

The construction heuristics now use the selector architecture so they support selection filtering, etc. Sorting can be overwritten at a configuration level (very handy for benchmarking).

In cases with multiple planning variables (for example period, room and teacher), you can now switch to a far more scalable configuration.

VRP subchain selection improved if maximumSubChainSize is set.

The Benchmarker now highlights infeasible solutions with an orange exclamation mark.

The Benchmarker now shows standard deviation per solver configuration (thanks to Miroslav Svitok).

Domain classes that extend/implement a @PlanningEntity class or interface can now be used as planning entities.

BendableScore is now compatible with XStream: use XStreamBendableScoreConverter.

Late Acceptance improved.

Ratio based entity tabu (thanks to Lukáš Petrovický)

Drools properties can now be optionally specified in the solver configuration XML.

Mimic selection: useful to create a cartesian product selection of 2 change move selectors that move different variables of the same entity

The Dashboard Builder is a full featured web application which allows non-technical users to visually create business dashboards. Dashboard data can be extracted from heterogeneous sources of information such as JDBC databases or regular text files. It also provides a generic process dashboard for the jBPM Human Task module. Such a dashboard can display multiple key performance indicators regarding process instances, tasks and users.

Some ready-to-use sample dashboards are provided as well, for demo and learning purposes.

Visual configuration of dashboards (Drag'n'drop).

Graphical representation of KPIs (Key Performance Indicators).

Configuration of interactive report tables.

Data export to Excel and CSV format.

Filtering and search, both in-memory or SQL based.

Process and tasks dashboards with jBPM.

Data extraction from external systems, through different protocols.

Granular access control for different user profiles.

Look'n'feel customization tools.

Pluggable chart library architecture.

Chart libraries provided: NVD3 & OFC2.

Managers / Business owners. Consumer of dashboards and reports.

IT / System architects. Connectivity and data extraction.

Analysts. Dashboard composition & configuration.

For more info on the Dashboard Builder application, please refer to: Dash Builder Quick Start Guide.

We already allow nested accessors to be used as follows, where address is the nested object:

Person( name == "mark", address.city == "london", address.country == "uk" )

Now these accessors to nested objects can be grouped with a '.(...)' syntax, providing more readable rules, as follows:

Person( name== "mark", address.( city == "london", country == "uk") )

Note the '.' prefix, this is necessary to differentiate the nested object constraints from a method call.

When dealing with nested objects, we may need to cast to a subtype. Now it is possible to do that via the # symbol as in:

Person( name=="mark", address#LongAddress.country == "uk" )

This example casts Address to LongAddress, making its getters available. If the cast is not possible (instanceof returns false), the evaluation will be considered false. Also fully qualified names are supported:

Person( name=="mark", address#org.domain.LongAddress.country == "uk" )

It is possible to use multiple inline casts in the same expression:

Person( name == "mark", address#LongAddress.country#DetailedCountry.population > 10000000 )

Moreover, since we also support the instanceof operator, if it is used we will infer its result for further uses of that field within that pattern:

Person( name=="mark", address instanceof LongAddress, address.country == "uk" )
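For readers who think in Java, the inline cast constraint behaves roughly like an instanceof check followed by a cast. The sketch below is only an analogy: the nested model classes are hypothetical stand-ins for the fact types used in the DRL examples, and the engine does not necessarily evaluate the constraint this way.

```java
// Plain-Java analogy for: Person( name == "mark", address#LongAddress.country == "uk" )
final class InlineCastSketch {

    // Hypothetical model classes mirroring the names used in the DRL above.
    static class Address {
        final String city;
        Address(String city) { this.city = city; }
    }

    static class LongAddress extends Address {
        final String country;
        LongAddress(String city, String country) { super(city); this.country = country; }
    }

    static class Person {
        final String name;
        final Address address;
        Person(String name, Address address) { this.name = name; this.address = address; }
    }

    static boolean matches(Person person) {
        if (!"mark".equals(person.name)) {
            return false;
        }
        if (!(person.address instanceof LongAddress)) {
            return false; // cast not possible: the constraint evaluates to false
        }
        return "uk".equals(((LongAddress) person.address).country);
    }

    public static void main(String[] args) {
        System.out.println(matches(new Person("mark", new LongAddress("london", "uk"))));
        System.out.println(matches(new Person("mark", new Address("london"))));
    }
}
```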

Sometimes the constraint of having one single consequence for each rule can be somewhat limiting and leads to verbose, hard-to-maintain repetition, as in the following example:

rule "Give 10% discount to customers older than 60"

when

$customer : Customer( age > 60 )

then

modify($customer) { setDiscount( 0.1 ) };

end

rule "Give free parking to customers older than 60"

when

$customer : Customer( age > 60 )

$car : Car ( owner == $customer )

then

modify($car) { setFreeParking( true ) };

end

It is already possible to partially overcome this problem by making the second rule extend the first one, as in:

rule "Give 10% discount to customers older than 60"

when

$customer : Customer( age > 60 )

then

modify($customer) { setDiscount( 0.1 ) };

end

rule "Give free parking to customers older than 60"

extends "Give 10% discount to customers older than 60"

when

$car : Car ( owner == $customer )

then

modify($car) { setFreeParking( true ) };

end

This feature additionally makes it possible to define labelled consequences other than the default one in a single rule, so, for example, the 2 former rules can be compacted into a single one as follows:

rule "Give 10% discount and free parking to customers older than 60"

when

$customer : Customer( age > 60 )

do[giveDiscount]

$car : Car ( owner == $customer )

then

modify($car) { setFreeParking( true ) };

then[giveDiscount]

modify($customer) { setDiscount( 0.1 ) };

end

This last rule has 2 consequences, the usual default one, plus another one named "giveDiscount" that is activated, using the keyword do, as soon as a customer older than 60 is found in the knowledge base, regardless of whether he owns a car or not. The activation of a named consequence can also be guarded by an additional condition, as in this further example:

rule "Give free parking to customers older than 60 and 10% discount to golden ones among them"

when

$customer : Customer( age > 60 )

if ( type == "Golden" ) do[giveDiscount]

$car : Car ( owner == $customer )

then

modify($car) { setFreeParking( true ) };

then[giveDiscount]

modify($customer) { setDiscount( 0.1 ) };

end

The condition in the if statement is always evaluated on the pattern immediately preceding it. Finally, this last, slightly more complicated example shows how it is possible to switch over different conditions using a nested if/else statement:

rule "Give free parking and 10% discount to over 60 Golden customer and 5% to Silver ones"

when

$customer : Customer( age > 60 )

if ( type == "Golden" ) do[giveDiscount10]

else if ( type == "Silver" ) break[giveDiscount5]

$car : Car ( owner == $customer )

then

modify($car) { setFreeParking( true ) };

then[giveDiscount10]

modify($customer) { setDiscount( 0.1 ) };

then[giveDiscount5]

modify($customer) { setDiscount( 0.05 ) };

end

Here the purpose is to give a 10% discount AND free parking to Golden customers over 60, but only a 5% discount (without free parking) to the Silver ones. This result is achieved by activating the consequence named "giveDiscount5" using the keyword break instead of do. In fact, do just schedules a consequence in the agenda, allowing the remaining part of the LHS to continue being evaluated as normal, while break also blocks any further pattern matching evaluation. Note, of course, that activating a named consequence with break without guarding it by a condition doesn't make sense (and generates a compile time error), since otherwise the LHS part following it would never be reachable.
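The difference between do and break in the last rule can be pictured with a plain-Java analogy. This is only a sketch of the observable outcome: the nested `Customer` and `Car` classes are hypothetical stand-ins for the fact types, and the real engine schedules consequences on the agenda rather than running them inline as this loop does.

```java
import java.util.Arrays;
import java.util.List;

// Plain-Java analogy for the do/break rule above; not how the engine evaluates it.
final class NamedConsequenceSketch {

    static final class Customer {
        final int age;
        final String type;
        double discount;
        Customer(int age, String type) { this.age = age; this.type = type; }
    }

    static final class Car {
        final Customer owner;
        boolean freeParking;
        Car(Customer owner) { this.owner = owner; }
    }

    static void apply(List<Customer> customers, List<Car> cars) {
        for (Customer customer : customers) {
            if (customer.age <= 60) {
                continue; // Customer( age > 60 ) does not match
            }
            if ("Golden".equals(customer.type)) {
                customer.discount = 0.1;  // do[giveDiscount10]: then keep matching
            } else if ("Silver".equals(customer.type)) {
                customer.discount = 0.05; // break[giveDiscount5]: then stop matching
                continue;
            }
            for (Car car : cars) {
                if (car.owner == customer) {
                    car.freeParking = true; // default consequence
                }
            }
        }
    }

    public static void main(String[] args) {
        Customer golden = new Customer(65, "Golden");
        Customer silver = new Customer(70, "Silver");
        Car goldenCar = new Car(golden);
        Car silverCar = new Car(silver);
        apply(Arrays.asList(golden, silver), Arrays.asList(goldenCar, silverCar));
        System.out.println("golden: " + golden.discount + ", parking=" + goldenCar.freeParking);
        System.out.println("silver: " + silver.discount + ", parking=" + silverCar.freeParking);
    }
}
```

Note that a customer over 60 who is neither Golden nor Silver falls through both branches and still reaches the default consequence, just as in the rule.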

Drools now has logging support. It can log to your favorite logger (such as logback, log4j, commons-logging, JDK logger, no logging, ...) through the SLF4J API.

The !. operator allows dereferencing in a null-safe way. More specifically, the matching algorithm requires the value to the left of the !. operator to be not null in order to give a positive result for the pattern matching itself. In other words the pattern:

Person( $streetName : address!.street )

will be internally translated into:

Person( address != null, $streetName : address.street )
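In Java terms, the translated pattern corresponds to a null check that guards the field access. The following sketch uses hypothetical nested `Person` and `Address` classes and is only an analogy for the semantics, not engine code.

```java
// Plain-Java analogy for: Person( $streetName : address!.street )
final class NullSafeSketch {

    // Hypothetical model classes for illustration.
    static final class Address {
        final String street;
        Address(String street) { this.street = street; }
    }

    static final class Person {
        final Address address;
        Person(Address address) { this.address = address; }
    }

    /** The pattern only matches when the value to the left of !. is not null. */
    static boolean matches(Person person) {
        return person.address != null;
    }

    /** The value bound to $streetName; only meaningful when matches(person) is true. */
    static String boundStreetName(Person person) {
        return person.address.street;
    }

    public static void main(String[] args) {
        Person withAddress = new Person(new Address("Baker Street"));
        Person withoutAddress = new Person(null);
        System.out.println(matches(withAddress) + " " + boundStreetName(withAddress));
        System.out.println(matches(withoutAddress)); // no match, so nothing is dereferenced
    }
}
```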

More information about the rationale that drove the decisions related to this implementation is available here.

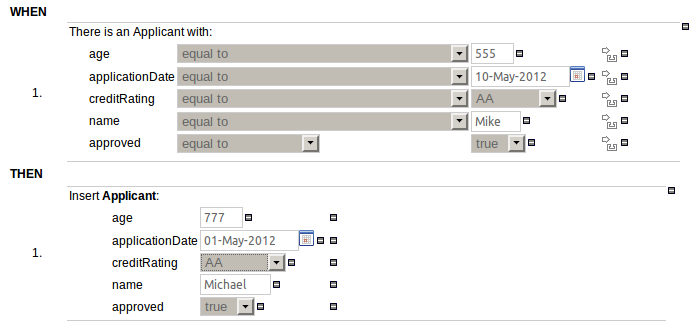

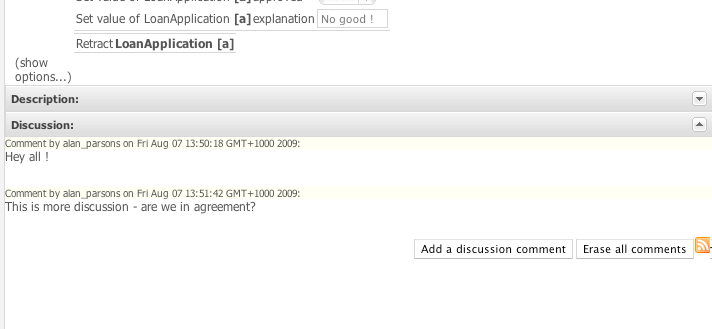

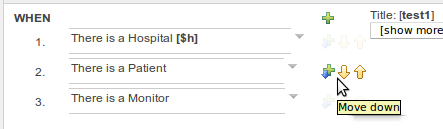

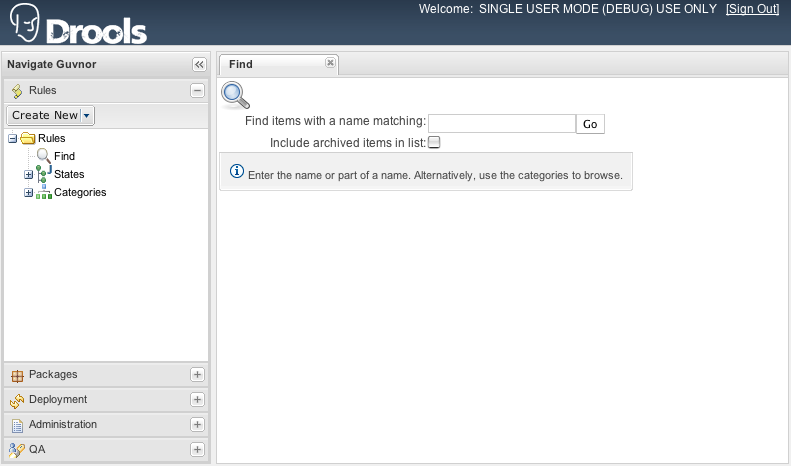

The Guided Rule Editor (BRL) has been made more consistent with the other editors in Guvnor.

The left-hand side (WHEN) part of the rule is now authored in a similar manner to the right-hand side (THEN).

Additional popups for literal values have been removed

Date selectors are consistent with Decision Tables and Rule Templates

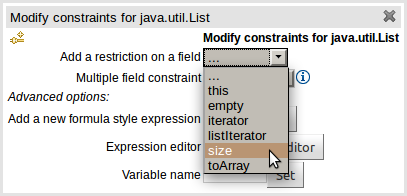

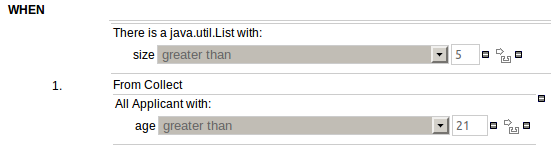

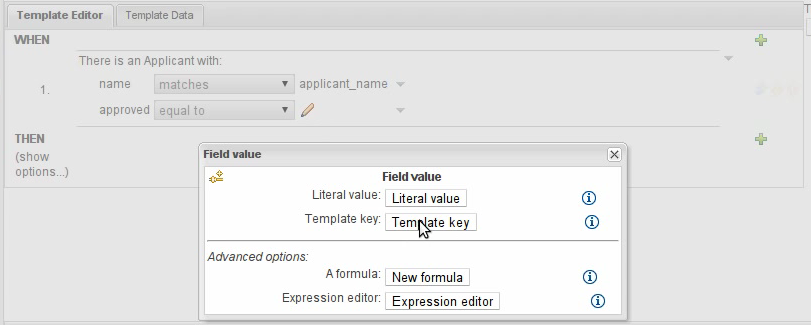

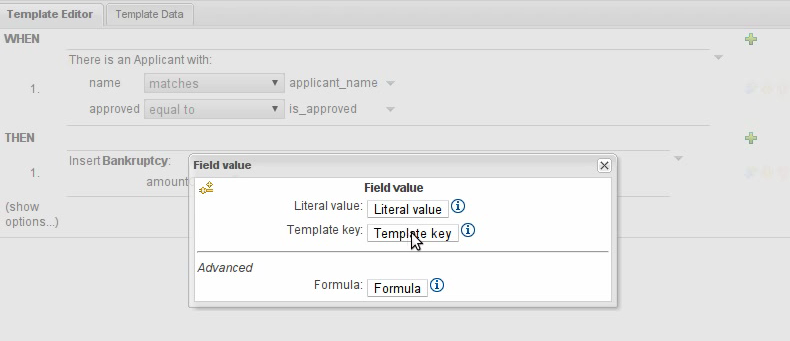

In response to a request (https://issues.jboss.org/browse/GUVNOR-1756) to be able to build a rule that bound a variable to the size of a java.util.List we have now exposed model class members that do not adhere to the Java Bean conventions. i.e. you can now select model class members that are not "getXXX" or "setXXX".

If you import a Java POJO model be prepared to see more members listed than you may have had previously.

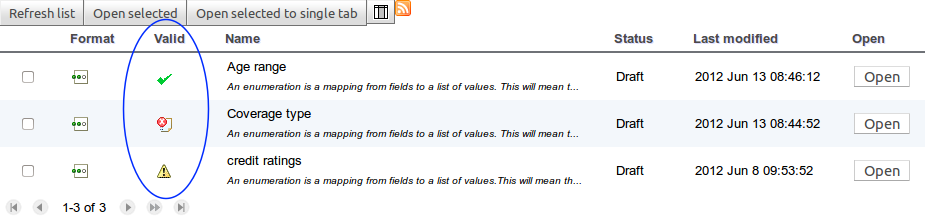

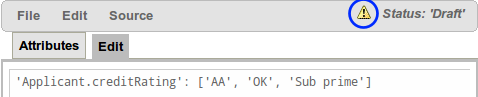

A valid indicator has now been added to the asset lists as well as the asset editors. The indicator shows whether an asset is valid, invalid or if the validity of the asset is undetermined. For already existing assets the validity of the asset will remain undetermined until the next time either validation is run on the asset or the asset has been saved again.

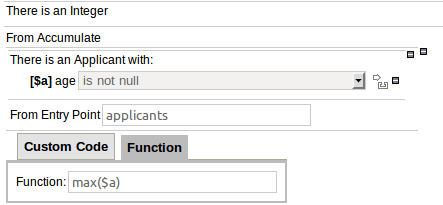

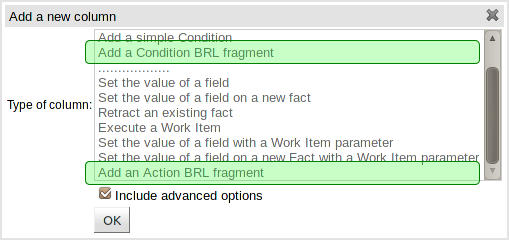

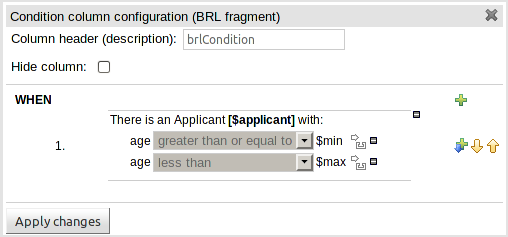

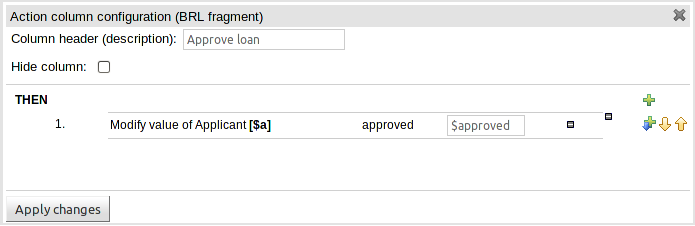

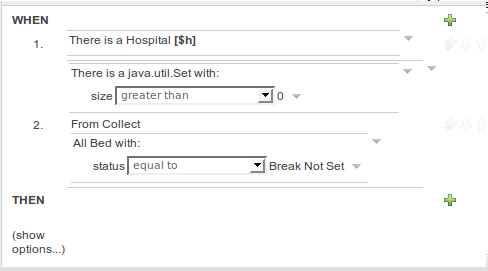

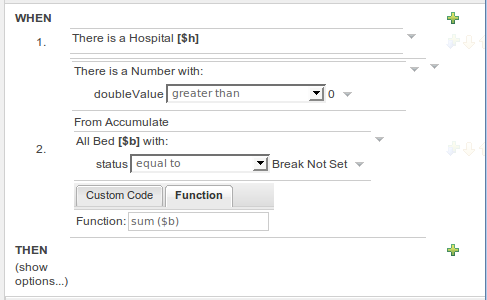

The guided rule editor now supports nesting entry-points in "from accumulate" and "from collect" constructs. Define a "from accumulate" or "from collect" in the usual manner and then choose "from entry-point" when defining the inner pattern. This feature is also available in Rule Templates and the guided web-based Decision Table's BRL fragment columns.

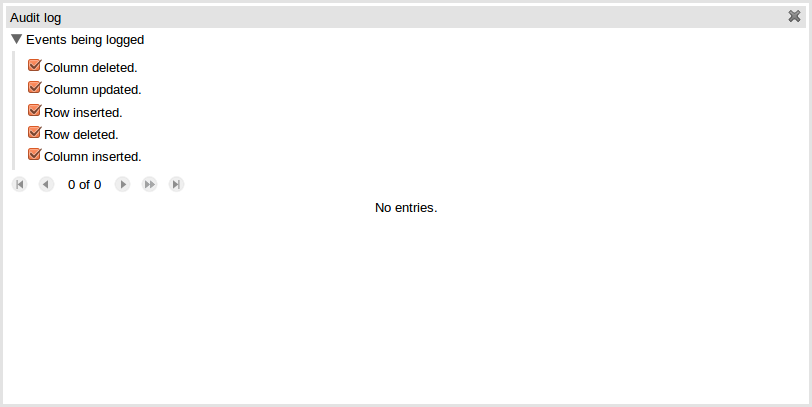

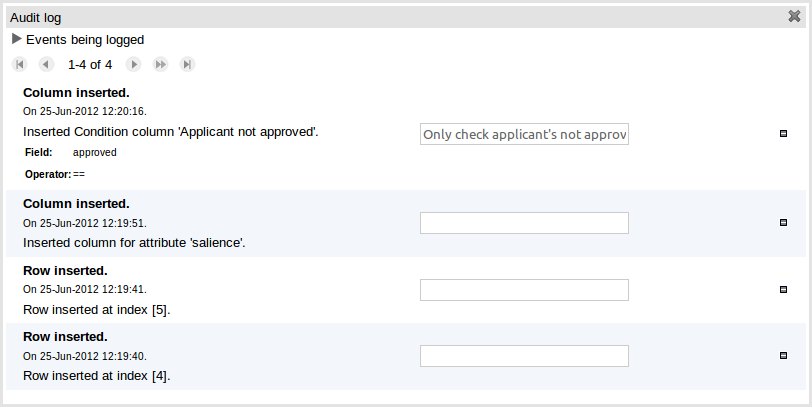

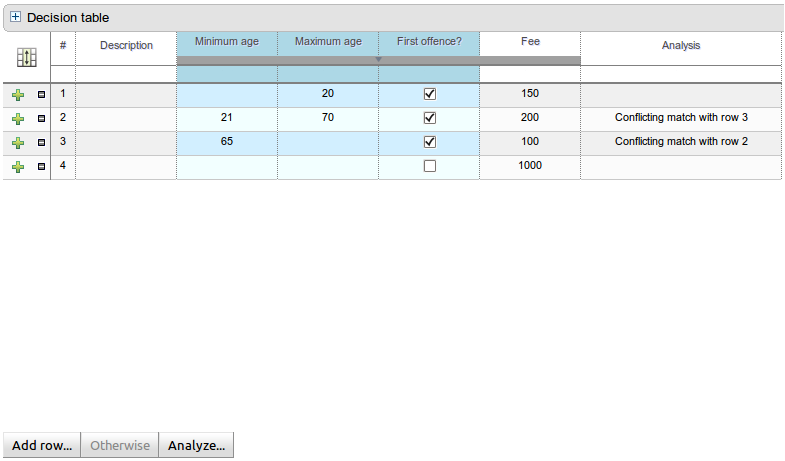

An audit log has been added to the web-guided Decision Table editor to track additions, deletions and modifications.

By default the audit log is not configured to record any events, however, users can easily select the events in which they are interested.

The audit log is persisted whenever the asset is checked in.

Once the capture of events has been enabled all subsequent operations are recorded. Users are able to perform the following:

Record an explanatory note beside each event.

Delete an event from the log. Event details remain in the underlying repository.

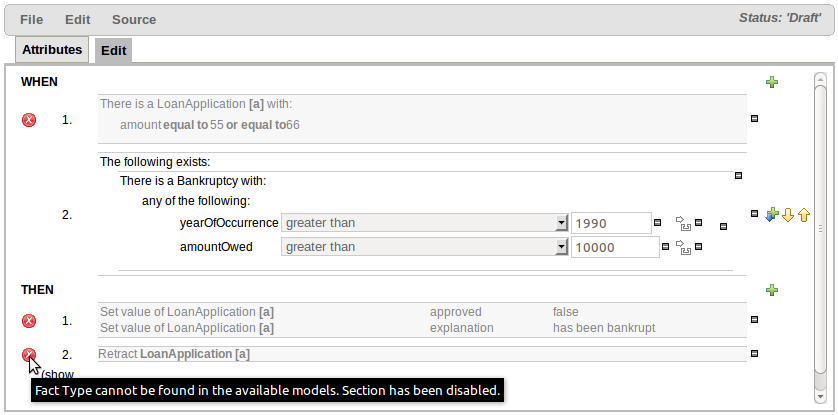

Sections of the Guided Rule Editor can become "frozen" if the Fact Type on which they rely is deleted from the available models, or the entire model is removed. Up until now the frozen section could not be removed and the whole rule had to be deleted and re-created from scratch. With this release frozen sections can be deleted.

The section is also marked with an icon indicating the error.

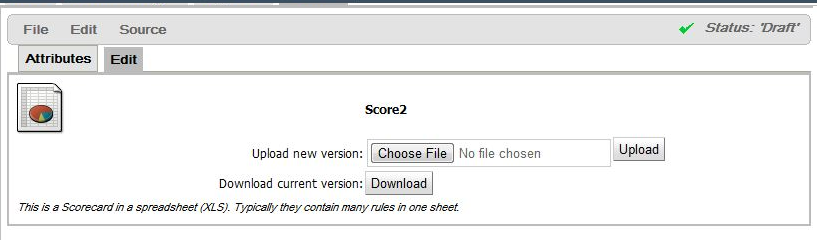

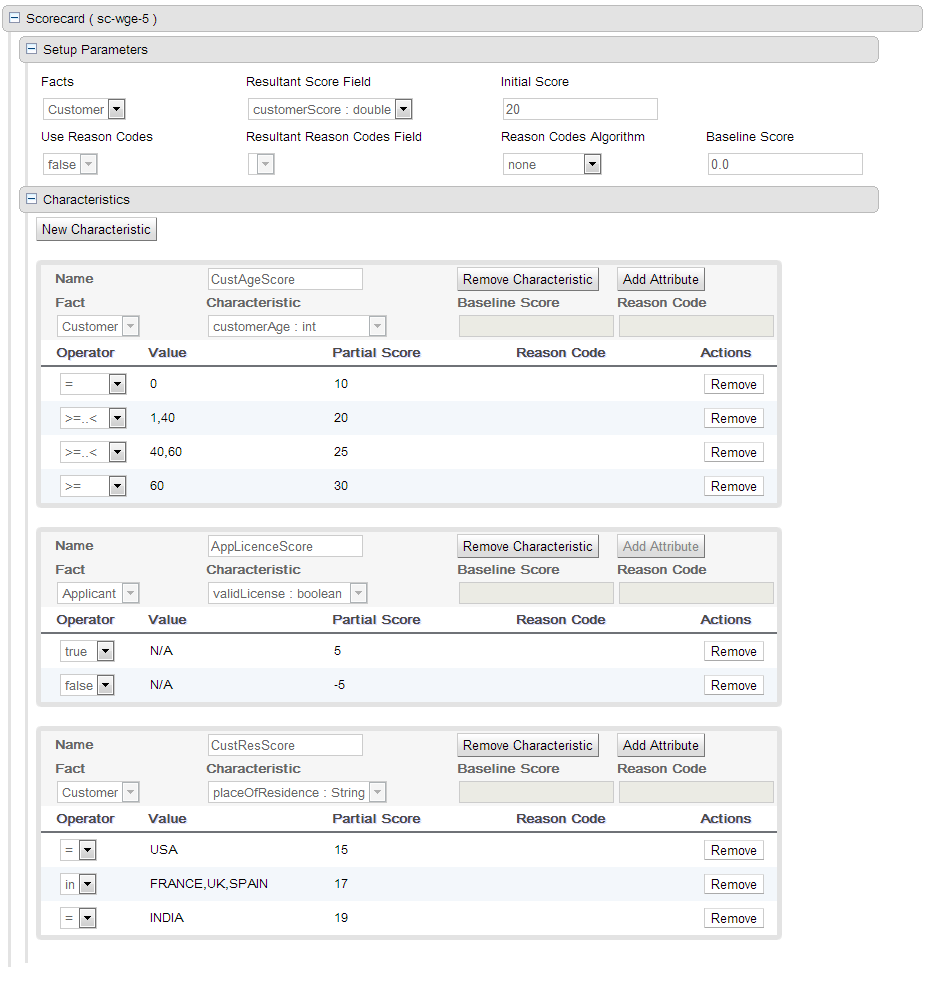

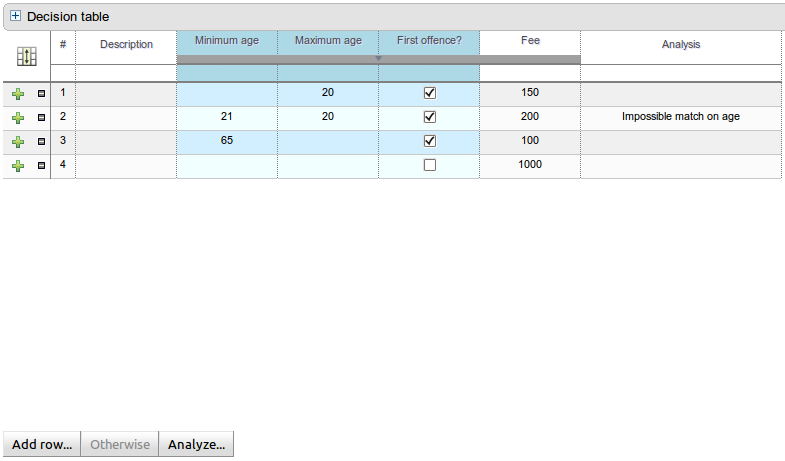

A scorecard is a graphical representation of a formula used to calculate an overall score. A scorecard can be used to predict the likelihood or probability of a certain outcome. Drools now supports additive scorecards. An additive scorecard calculates an overall score by adding all partial scores assigned to individual rule conditions.

Additionally, Drools Scorecards will allow reason codes to be set, which help in identifying the specific rules (buckets) that have contributed to the overall score. Drools Scorecards will be based on the PMML 4.1 Standard.
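The additive principle can be illustrated with a small plain-Java sketch: every bucket whose condition holds contributes its partial score, and its reason code is recorded. The `Applicant` attributes, bucket conditions, scores and reason codes below are invented for illustration and do not come from the Drools Scorecards module or from PMML.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.function.Predicate;

// Illustrative additive scorecard; all names and numbers are made up.
final class AdditiveScorecardSketch {

    static final class Applicant {
        final int age;
        final int income;
        Applicant(int age, int income) { this.age = age; this.income = income; }
    }

    static final class Bucket {
        final String reasonCode;
        final Predicate<Applicant> condition;
        final int partialScore;
        Bucket(String reasonCode, Predicate<Applicant> condition, int partialScore) {
            this.reasonCode = reasonCode;
            this.condition = condition;
            this.partialScore = partialScore;
        }
    }

    static List<Bucket> exampleBuckets() {
        return Arrays.asList(
                new Bucket("AGE_LT_25", a -> a.age < 25, 10),
                new Bucket("AGE_GE_25", a -> a.age >= 25, 30),
                new Bucket("INCOME_GE_40K", a -> a.income >= 40000, 20));
    }

    /** Sums the partial scores of all matching buckets and collects their reason codes. */
    static int score(Applicant applicant, List<Bucket> buckets, List<String> reasonCodes) {
        int total = 0;
        for (Bucket bucket : buckets) {
            if (bucket.condition.test(applicant)) {
                total += bucket.partialScore;
                reasonCodes.add(bucket.reasonCode);
            }
        }
        return total;
    }

    public static void main(String[] args) {
        List<String> reasonCodes = new ArrayList<>();
        int total = score(new Applicant(30, 50000), exampleBuckets(), reasonCodes);
        System.out.println(total + " " + reasonCodes);
    }
}
```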

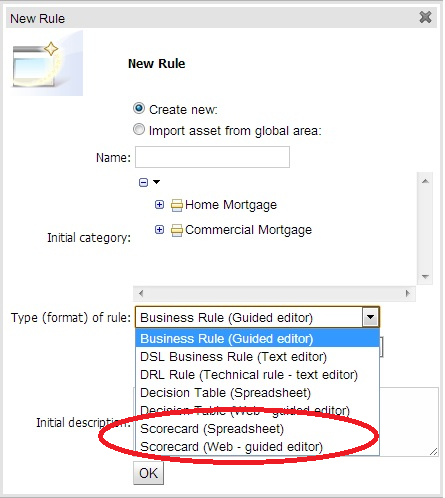

The New Rule Wizard now allows for creation of scorecard assets.

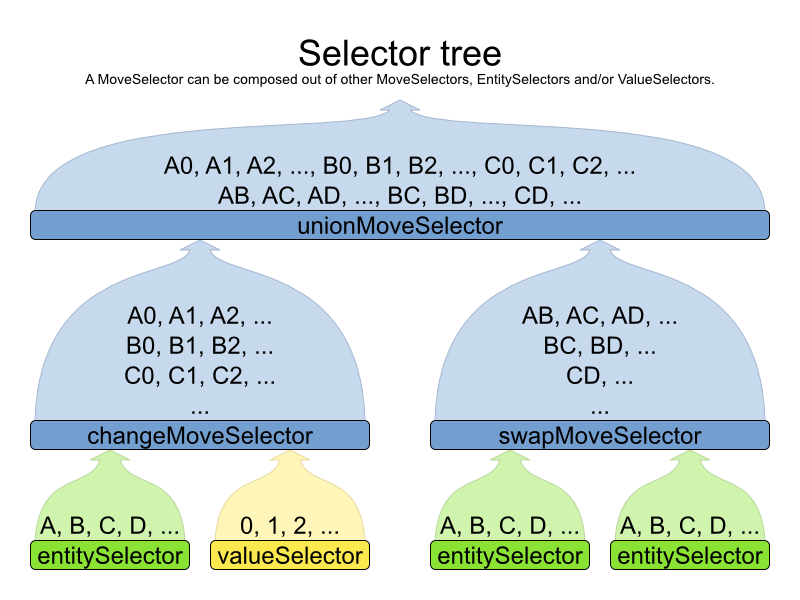

The new selector architecture is far more flexible and powerful. It works great out-of-the-box, but it can also be easily tailored to your use case for additional gain.

<unionMoveSelector>

<cacheType>JUST_IN_TIME</cacheType>

<selectionOrder>RANDOM</selectionOrder>

<changeMoveSelector/>

<swapMoveSelector/>

...

</unionMoveSelector>

Custom move factories are often no longer needed (but still supported). For now, the new selector architecture is only applied on local search (tabu search, simulated annealing, ...), but it will be applied on construction heuristics too.

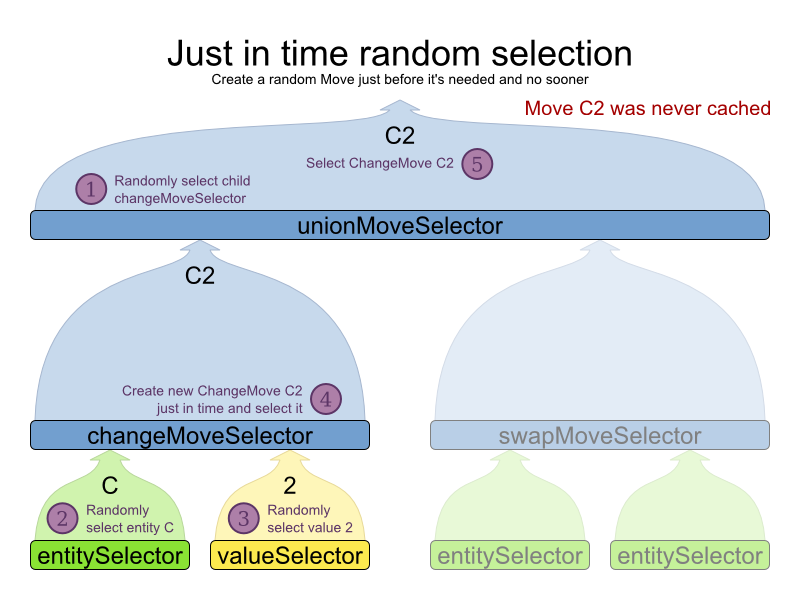

The new selector architecture allows for Just In Time random selection. For very large use cases, this configuration uses far less memory and is several times faster in performance than the older configurations. Every move is generated just in time and there's no move list that needs shuffling:

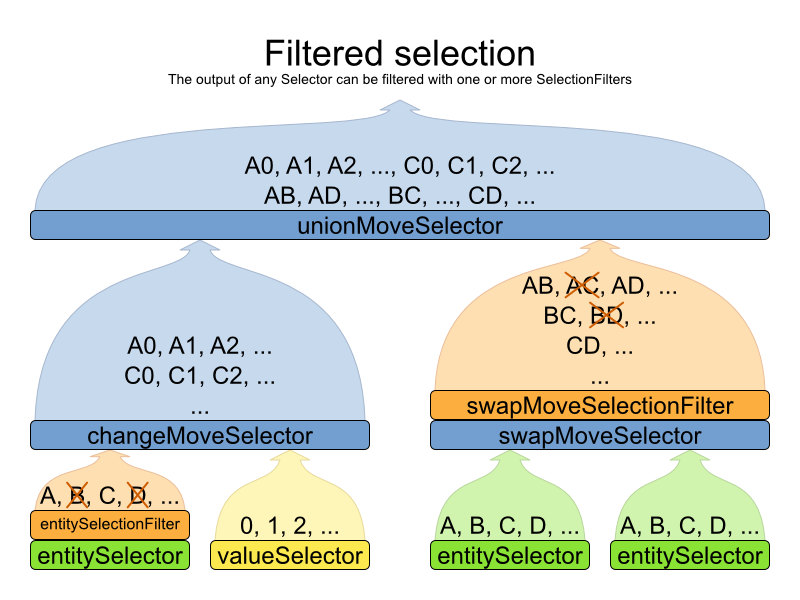

Filtered selection allows you to discard specific moves easily, such as a pointless move that swaps 2 lectures of the same course:

Probability selection allows you to favor certain moves more than others.
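As a generic illustration of favoring some moves over others, the sketch below picks an index with a probability proportional to its weight. It is a textbook weighted selection written in plain Java, not Planner's selector code; the weights and the idea of passing the random roll in as a parameter are assumptions that keep the example deterministic.

```java
// Generic weighted selection sketch; not the Planner implementation.
final class ProbabilitySelectionSketch {

    /**
     * @param weights relative weights of the candidates (all positive)
     * @param roll    a random number in [0, 1), e.g. from java.util.Random#nextDouble()
     * @return the index of the selected candidate
     */
    static int pick(double[] weights, double roll) {
        double total = 0;
        for (double weight : weights) {
            total += weight;
        }
        double threshold = roll * total;
        double cumulative = 0;
        for (int i = 0; i < weights.length; i++) {
            cumulative += weights[i];
            if (threshold < cumulative) {
                return i;
            }
        }
        return weights.length - 1; // guards against rounding at the upper edge
    }

    public static void main(String[] args) {
        // Hypothetical weights: candidate 0 is three times as likely as candidate 1.
        double[] weights = {3.0, 1.0};
        System.out.println(pick(weights, new java.util.Random().nextDouble()));
    }
}
```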

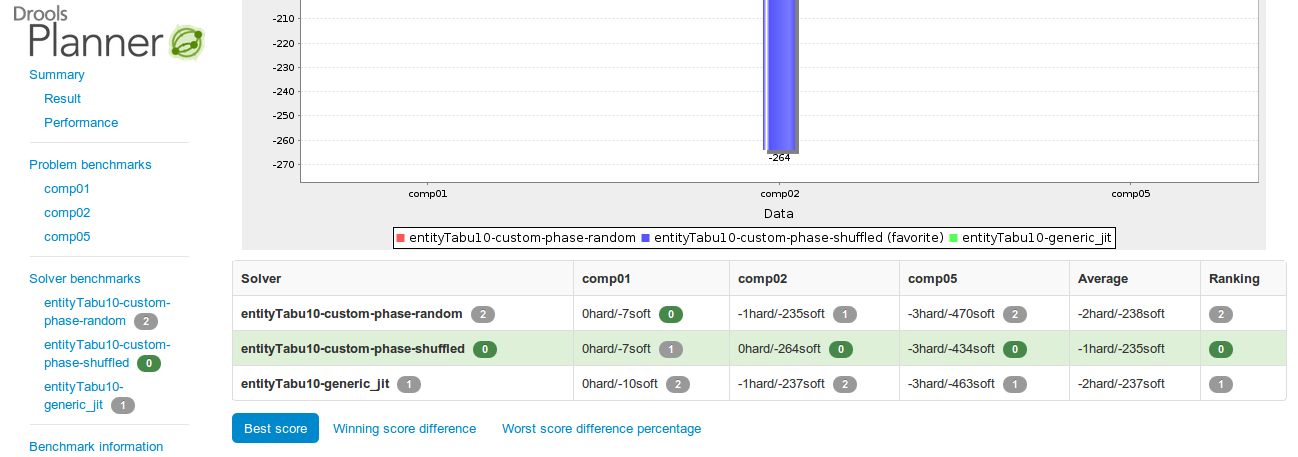

The benchmarker report has been improved, both visually:

... and in content:

New chart and table: Worst score difference percentage

Every chart that shows a score, now exists for every score level, so there is now a hard score chart too.

Each chart now has a table with the data too. This is especially useful for matrix benchmarks when the graphs become cluttered due to too much data.

Each solver configuration is now shown in the report.

General benchmark information (such as warm up time) is now also recorded.

The favorite solver configuration is clearly marked in charts.

The benchmarker now supports matrix benchmarking based on a template, which allows you to benchmark a combination of ranges of values easily.

And if you have multiple CPU's in your computer, the benchmarker can now take advantage of them thanks to parallel benchmarking.

It's now possible to prevent a planning entity from being changed at all. This is very useful for continuous planning where last week's entities shouldn't change.

@PlanningEntity(movableEntitySelectionFilter = MovableShiftAssignmentSelectionFilter.class)

public class ShiftAssignment {

...

}

The drools-spring module now supports declarative definition of Environment (org.drools.core.runtime.Environment)

<drools:environment id="...">

<drools:entity-manager-factory ref=".."/>

<drools:transaction-manager ref=".."/>

<drools:globals ref=".."/>

<drools:object-marshalling-strategies>

...

</drools:object-marshalling-strategies>

<drools:scoped-entity-manager scope="app|cmd" ref="..."/>

</drools:environment>

Traits were introduced in the 5.3 release, and details on them can be found in the New and Noteworthy section for that release. This release adds an example so that people have something simple to run, to help them understand. In the drools-examples source project open the classes and DRL for the namespace "/org/drools/examples/traits". There you will find an example around classifications of students and workers.

rule "Students and Workers" no-loop when

$p : Person( $name : name,

$age : age < 25,

$weight : weight )

then

IWorker w = don( $p, IWorker.class, true );

w.setWage( 1200 );

update( w );

IStudent s = don( $p, IStudent.class, true );

s.setSchool( "SomeSchool" );

update( s );

end

rule "Working Students" salience -10 when

$s : IStudent( $school : school,

$name : name,

this isA IWorker,

$wage : fields[ "wage" ] )

then

System.out.println( $name + " : I have " + $wage + " to pay the fees at " + $school );

end

A detailed two-part article has been written up on a blog, which will later be improved and rolled into the main documentation. For now you can read them here.

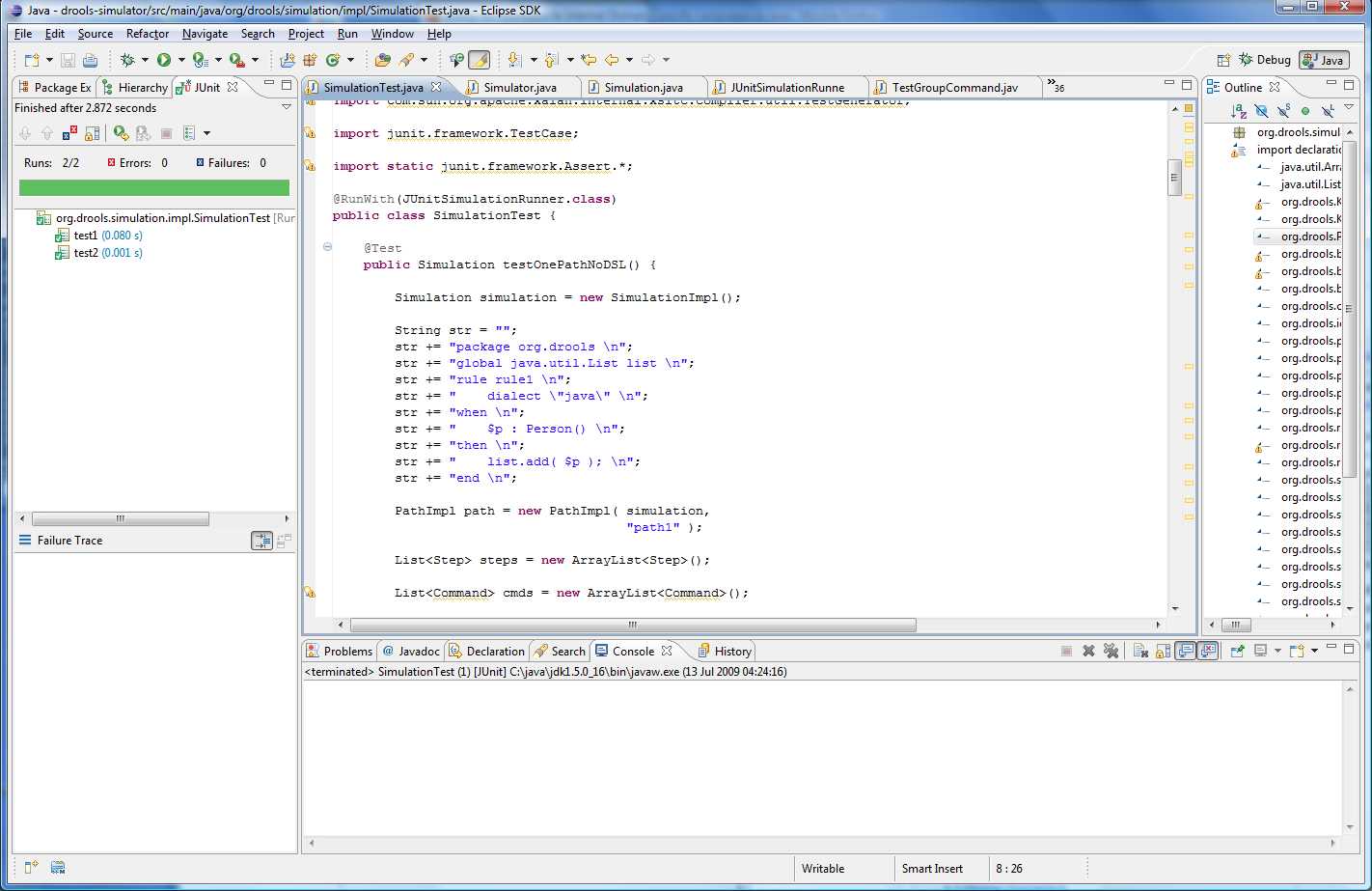

The simulation project that was first started in 2009, http://blog.athico.com/2009/07/drools-simulation-and-test-framework.html, has undergone an overhaul and is now in a usable state. We have not yet promoted this to knowledge-api, so it's considered unstable and will change during the beta process. For now though, the adventurous people can take a look at the unit tests and start playing.

The Simulator runs the Simulation. The Simulation is your scenario definition. The Simulation consists of 1 to n Paths; you can think of a Path as a sort of Thread. The Path is a chronological line on which Steps are specified at given temporal distances from the start. You don't specify an absolute time for the Step, say 12:00am; instead it is always a relative time distance from the start of the Simulation (note: in Beta2 this will be the relative time distance from the last step in the same path). Each Step contains one or more Commands, e.g. create a StatefulKnowledgeSession, insert an object or start a process. These are the very same commands that you would use to script a knowledge session using the batch execution, so it's re-using existing concepts.

1..1 Simulation

1..n Paths

1..n Steps

1..n Commands

All the steps, from all paths, are added to a priority queue which is ordered by the temporal distance, and allows us to incrementally execute the engine using a time slicing approach. The simulator pops the steps off the queue in turn. For each Step it increments the engine clock and then executes all the Step's Commands.
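The time slicing loop described above can be sketched in plain Java with a `java.util.PriorityQueue`. This is a simplified model, not the Simulator's code: the `Step` class here is a hypothetical stand-in that holds command names as strings, and "executing" a command just appends a line to a log.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;
import java.util.PriorityQueue;

// Simplified model of the simulator loop; the real classes differ.
final class TimeSlicingSketch {

    static final class Step {
        final String path;
        final long temporalDistance; // milliseconds from the start of the simulation
        final List<String> commands;
        Step(String path, long temporalDistance, String... commands) {
            this.path = path;
            this.temporalDistance = temporalDistance;
            this.commands = Arrays.asList(commands);
        }
    }

    /** Runs all steps in order of temporal distance and returns an execution log. */
    static List<String> run(List<Step> allSteps, long startTime) {
        PriorityQueue<Step> queue =
                new PriorityQueue<>(Comparator.comparingLong((Step s) -> s.temporalDistance));
        queue.addAll(allSteps);
        List<String> log = new ArrayList<>();
        while (!queue.isEmpty()) {
            Step step = queue.poll();
            long clock = startTime + step.temporalDistance; // advance the engine clock
            for (String command : step.commands) {
                log.add(clock + " " + step.path + " " + command);
            }
        }
        return log;
    }

    /** Steps from three paths, deliberately added out of chronological order. */
    static List<String> demo() {
        return run(Arrays.asList(
                new Step("path1", 1000, "insert yoda"),
                new Step("path2", 800, "insert darth"),
                new Step("init", 0, "build kbase")), 0);
    }

    public static void main(String[] args) {
        for (String line : demo()) {
            System.out.println(line);
        }
    }
}
```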

Here is an example Command (notice it uses the same Commands as used by the CommandExecutor):

new InsertObjectCommand( new Person( "darth", 97 ) )

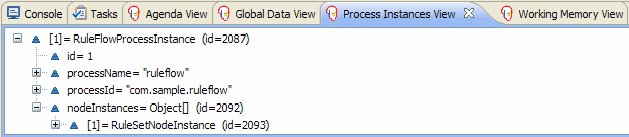

Commands can be grouped together, especially Assertion commands, via test groups. The test groups are mapped to JUnit "test methods", so as they pass or fail using a specialised JUnit Runner the Eclipse GUI is updated - as illustrated in the above image, showing two passed test groups named "test1" and "test2".

Using the JUnit integration is trivial. Just annotate the class with @RunWith(JUnitSimulationRunner.class). Then any method that is annotated with @Test and returns a Simulation instance will be invoked executing the returned Simulation instance in the Simulator. As test groups are executed the JUnit GUI is updated.

When executing any commands on a KnowledgeBuilder, KnowledgeBase or StatefulKnowledgeSession the system assumes a "register" approach. To get a feel for this look at the org.drools.simulation.impl.SimulationTest at github (path may change over time).

cmds.add( new NewKnowledgeBuilderCommand( null ) );

cmds.add( new SetVariableCommandFromLastReturn( "path1",

KnowledgeBuilder.class.getName() ) );

cmds.add( new KnowledgeBuilderAddCommand( ResourceFactory.newByteArrayResource( str.getBytes() ),

ResourceType.DRL, null ) );

Notice the set command. "path1" is the context; each path has its own variable context. All paths inherit from a "root" context. "KnowledgeBuilder.class.getName()" is the name to which we assign the return value of the last command. As mentioned before, we consider the class names of those classes as registers: any further commands that attempt to operate on a knowledge builder will use whatever is assigned to that name, as in the case of KnowledgeBuilderAddCommand. This allows multiple kbuilders, kbases and ksessions to exist in one context under different variable names, but only the one assigned to the register name is the one that is currently executed on.
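The "register" idea can be pictured as a small chain of variable maps, where a path context falls back to the root context for names it has not set itself. The sketch below is a plain-Java model under that assumption; the `Context` class and the string "KnowledgeBuilder" (standing in for KnowledgeBuilder.class.getName()) are hypothetical and not the simulation module's own types.

```java
import java.util.HashMap;
import java.util.Map;

// Simplified model of per-path variable contexts that inherit from a root context.
final class RegisterContextSketch {

    static final class Context {
        private final Context parent;
        private final Map<String, Object> variables = new HashMap<>();

        Context(Context parent) { this.parent = parent; }

        void set(String name, Object value) { variables.put(name, value); }

        /** Looks up a name locally first, then in the parent chain. */
        Object get(String name) {
            if (variables.containsKey(name)) {
                return variables.get(name);
            }
            return parent == null ? null : parent.get(name);
        }
    }

    public static void main(String[] args) {
        String register = "KnowledgeBuilder"; // stand-in for KnowledgeBuilder.class.getName()
        Context root = new Context(null);
        Context path1 = new Context(root);

        root.set(register, "kbuilderA");
        System.out.println(path1.get(register)); // inherited from root

        path1.set("spare", "kbuilderB");          // a second builder under its own name
        path1.set(register, path1.get("spare"));  // make it the one commands operate on
        System.out.println(path1.get(register));
        System.out.println(root.get(register));   // root is unaffected
    }
}
```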

The code below shows the rough outline used in SimulationTest:

Simulation simulation = new SimulationImpl();

PathImpl path = new PathImpl( simulation,

"path1" );

simulation.getPaths().put( "path1",

path );

List<Step> steps = new ArrayList<Step>();

path.setSteps( steps );

List<Command> cmds = new ArrayList<Command>();

.... add commands to step here ....

// create a step at temporal distance of 2000ms from start

steps.add( new StepImpl( path,

cmds,

2000 ) );

We know the above looks quite verbose. SimulationTest just shows our low level canonical model; the idea is that high level representations are built on top of this. As this is a builder API we are currently focusing on two sets of fluents, compact and standard. We will also work on a spreadsheet UI for building these, and eventually a dedicated textual DSL.

The compact fluent is designed to provide the absolute minimum necessary to run against a single ksession. A good place to start is org.drools.simulation.impl.CompactFluentTest, a snippet of which is shown below. Notice we set "yoda" to "y" and can then assert on that. Currently the test string is executed using MVEL. The eventual goal is to build out a set of Hamcrest matchers that will allow assertions against the state of the engine, such as what rules have fired and optionally with what data.

FluentCompactSimulation f = new FluentCompactSimulationImpl();

f.newStatefulKnowledgeSession()

.getKnowledgeBase()

.addKnowledgePackages( ResourceFactory.newByteArrayResource( str.getBytes() ),

ResourceType.DRL )

.end()

.newStep( 100 ) // increases the time 100ms

.insert( new Person( "yoda",

150 ) ).set( "y" )

.fireAllRules()

// show testing inside of ksession execution

.test( "y.name == 'yoda'" )

.test( "y.age == 160" );

Note that the test is not executed at build time; the fluent builds a script to be executed later. The script underneath matches what you saw in SimulationTest. The current way to run a simulation manually is shown below, although, as you already saw in SimulationTest, JUnit will execute these automatically. We'll improve this over time.

SimulationImpl sim = (SimulationImpl) ((FluentCompactSimulationImpl) f).getSimulation();

Simulator simulator = new Simulator( sim,

new Date().getTime() );

simulator.run();

The standard fluent is almost a one-to-one mapping to the canonical path, step and command structure in SimulationTest, just more compact. Start by looking at org.drools.simulation.impl.StandardFluentTest. This fluent allows you to run any number of paths and steps, and gives you much more control over multiple kbuilders, kbases and ksessions.

FluentStandardSimulation f = new FluentStandardSimulationImpl();

f.newPath("init")

.newStep( 0 )

// set to ROOT, as I want paths to share this

.newKnowledgeBuilder()

.add( ResourceFactory.newByteArrayResource( str.getBytes() ),

ResourceType.DRL )

.end(ContextManager.ROOT, KnowledgeBuilder.class.getName() )

.newKnowledgeBase()

.addKnowledgePackages()

.end(ContextManager.ROOT, KnowledgeBase.class.getName() )

.end()

.newPath( "path1" )

.newStep( 1000 )

.newStatefulKnowledgeSession()

.insert( new Person( "yoda", 150 ) ).set( "y" )

.fireAllRules()

.test( "y.name == 'yoda'" )

.test( "y.age == 160" )

.end()

.end()

.newPath( "path2" )

.newStep( 800 )

.newStatefulKnowledgeSession()

.insert( new Person( "darth", 70 ) ).set( "d" )

.fireAllRules()

.test( "d.name == 'darth'" )

.test( "d.age == 80" )

.end()

.end()

.end();

There is still an awful lot to do. This is designed to eventually provide a unified simulation and testing environment for rules, workflow and event processing over time, and eventually also over distributed architectures.

- Flesh out the API to support more commands, and also to encompass jBPM commands.
- Improve out-of-the-box usability, including moving interfaces to knowledge-api and hiding "new" constructors behind factory methods.
- Commands are already marshallable to JSON and XML. They should be updated to allow full round-tripping between Java API commands and JSON/XML documents.
- Develop Hamcrest matchers for testing state:
  - What rule(s) fired, optionally including what data was used with the executing rule (Drools)
  - What rules are active for a given fact
  - What rules activated and de-activated for a given fact change
  - Process variable state (jBPM)
  - Wait node states (jBPM)
- Design and build tabular authoring tools via spreadsheet, targeting the web with round-tripping to Excel.
- Design and develop a textual DSL for authoring, maybe as part of DRL (long-term task).

Multi-function accumulate now supports inline constraints. The simplified EBNF is:

lhsAccumulate := ACCUMULATE LEFT_PAREN lhsAnd (COMMA|SEMICOLON)

accumulateFunctionBinding (COMMA accumulateFunctionBinding)*

(SEMICOLON constraints)?

RIGHT_PAREN SEMICOLON?

For example:

rule "Accumulate example"

when

accumulate( Cheese( $price : price );

$a1 : average( $price ),

$m1 : min( $price ),

$M1 : max( $price ); // a semicolon, followed by inline constraints

$a1 > 10 && $M1 <= 100, // inline constraint

$m1 == 5 // inline constraint

)

then

// do something

end
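For readers more at home in Java than in DRL, the following sketch computes the same three accumulated values over a list of prices and then applies the same inline constraints. It is only an analogy, not how the engine evaluates the rule: `AccumulateSketch` and `matches` are hypothetical names, and treating an empty price list as a non-match is an assumption made for this sketch.

```java
import java.util.Arrays;
import java.util.DoubleSummaryStatistics;
import java.util.List;

// Plain-Java analogy of the accumulate example; not engine code.
class AccumulateSketch {

    // Returns true when average, min and max of the prices satisfy
    // the inline constraints: $a1 > 10 && $M1 <= 100, $m1 == 5.
    static boolean matches(List<Double> prices) {
        if (prices.isEmpty()) {
            return false; // assumption made for this sketch only
        }
        DoubleSummaryStatistics stats =
                prices.stream().mapToDouble(Double::doubleValue).summaryStatistics();
        double a1 = stats.getAverage(); // $a1 : average( $price )
        double m1 = stats.getMin();     // $m1 : min( $price )
        double M1 = stats.getMax();     // $M1 : max( $price )

        // Comma-separated constraints are combined with a logical AND.
        return (a1 > 10 && M1 <= 100) && m1 == 5;
    }

    public static void main(String[] args) {
        System.out.println(matches(Arrays.asList(5.0, 20.0, 50.0)));
        System.out.println(matches(Arrays.asList(5.0, 6.0)));
    }
}
```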

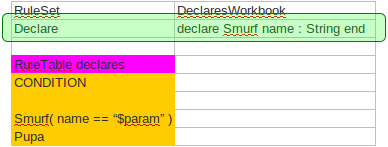

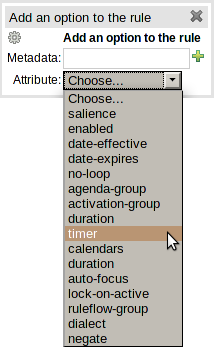

A new RuleSet property has been added called "Declare".

This provides a slot in the RuleSet definition to define declared types.

In essence the slot provides a discrete location to add type declarations where, previously, they may have been added to a Queries or Functions definition.

A working version of Wumpus World, an AI example covered in the book "Artificial Intelligence: A Modern Approach", is now available among the other examples. A more detailed overview of Wumpus World can be found here.

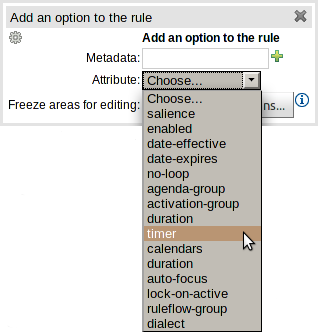

Support has been added for the "timer" and "calendar" attributes.

Table 4.1. New attributes

| Keyword | Initial | Value |
|---|---|---|
| TIMER | T | A timer definition. See "Timers and Calendars". |
| CALENDARS | E | A calendars definition. See "Timers and Calendars". |

KnowledgeBuilder has a new batch mode, with a fluent interface, that allows you to build multiple DRLs at once, as in the following example:

KnowledgeBuilder kbuilder = KnowledgeBuilderFactory.newKnowledgeBuilder();

kbuilder.batch()

.add(ResourceFactory.newByteArrayResource(rules1.getBytes()), ResourceType.DRL)

.add(ResourceFactory.newByteArrayResource(rules2.getBytes()), ResourceType.DRL)

.add(ResourceFactory.newByteArrayResource(declarations.getBytes()), ResourceType.DRL)

.build();

In this way it is no longer necessary to build the DRL files in the right order (e.g. first the DRLs containing the type declarations and then the ones with the rules using them), and it is also possible to have circular references among them.

Moreover, the KnowledgeBuilder (regardless of whether you are using batch mode) also allows you to discard what was added by the last DRL build. This can be useful to recover from having added a wrong DRL to the KnowledgeBuilder, as follows:

kbuilder.add(ResourceFactory.newByteArrayResource(wrongDrl.getBytes()), ResourceType.DRL);

if ( kbuilder.hasErrors() ) {

kbuilder.undo();

}

Currently, when you invoke update() or modify() on a given object in a RHS, it triggers a re-evaluation of all patterns of the matching object type in the knowledge base. As some have experienced, this can lead to unwanted and useless evaluations and, in the worst cases, to infinite recursion. Until now, the only workaround was to split your objects into smaller ones having a one-to-one relationship with the original object.

This new feature allows the pattern matching to react only to modifications of properties actually constrained or bound inside a given pattern. That helps with performance and recursion and avoids artificial object splitting. The implementation is bit mask based, so it is very efficient. When the engine executes a modify statement it uses a bit mask of the fields being changed; the pattern will only respond if it has an overlapping bit mask. This does not work for update(), and is one of the reasons why we promote modify(), as it encapsulates the field changes within the statement.
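The overlap test described above can be sketched in a few lines of Java. This is an illustration of the general bit mask technique, not the engine's implementation: `PropertyMaskSketch`, the property list and the bit assignment are all assumptions made for the example.

```java
import java.util.Arrays;
import java.util.List;

// Illustrative bit mask check; names and bit layout are hypothetical.
class PropertyMaskSketch {

    // Assumed property order for an example type; each property gets one bit.
    static final List<String> PROPERTIES = Arrays.asList("firstName", "lastName", "male");

    // Builds a mask with one bit set per named property.
    static long maskOf(String... names) {
        long mask = 0L;
        for (String name : names) {
            int index = PROPERTIES.indexOf(name);
            if (index < 0) {
                throw new IllegalArgumentException("Unknown property: " + name);
            }
            mask |= 1L << index;
        }
        return mask;
    }

    // A pattern reacts only if it watches at least one of the modified properties.
    static boolean shouldReact(long patternMask, long modificationMask) {
        return (patternMask & modificationMask) != 0;
    }

    public static void main(String[] args) {
        long pattern = maskOf("firstName");             // pattern constrains firstName
        long setMale = maskOf("male");                  // modify changes male
        long setName = maskOf("firstName", "lastName"); // modify changes both names

        System.out.println(shouldReact(pattern, setMale));
        System.out.println(shouldReact(pattern, setName));
    }
}
```

A single bitwise AND per pattern is what makes this kind of check cheap compared with re-evaluating every pattern of the modified type.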

By default this feature is off, in order to keep the behavior of the rule engine backward compatible with former releases. To activate it on a specific bean, annotate it with @propertyReactive. This annotation works both on DRL type declarations:

declare Person

@propertyReactive

firstName : String

lastName : String

end

and on Java classes:

@PropertyReactive

public static class Person {

private String firstName;

private String lastName;

}

In this way, for instance, if you have a rule like the following:

rule "Every person named Mario is a male" when

$person : Person( firstName == "Mario" )

then

modify ( $person ) { setMale( true ) }

end

you won't have to add the no-loop attribute to it in order to avoid an infinite recursion, because the engine recognizes that the pattern matching is done on the 'firstName' property while the RHS of the rule modifies the 'male' one. Note that this feature does not work for update(), and this is one of the reasons why we promote modify(), since it encapsulates the field changes within the statement. Moreover, on Java classes, you can also annotate any method to declare that its invocation modifies other properties. For instance, in the Person class above you could have a method like:

@Modifies( { "firstName", "lastName" } )

public void setName(String name) {

String[] names = name.split("\\s");

this.firstName = names[0];

this.lastName = names[1];

}

That means that if a rule has a RHS like the following:

modify($person) { setName("Mario Fusco") }

it will correctly recognize that the values of both the 'firstName' and 'lastName' properties could have been modified and act accordingly, re-evaluating the patterns constrained on them. At the moment @Modifies is allowed only on methods, not on fields. This is consistent with the most common scenario, where @Modifies is used for methods that do not correspond to a single class field, as with Person.setName() in the previous example. Also note that @Modifies is not transitive: if another method internally invokes Person.setName(), it is not enough to annotate it with @Modifies( { "name" } ); it must itself be annotated with @Modifies( { "firstName", "lastName" } ). Very likely @Modifies transitivity will be implemented in the next release.

As for nested accessors, the engine is notified only for top-level fields. In other words, a pattern like:

Person( address.city.name == "London" )

will be reevaluated only for modification of the 'address' property of a Person object. In the same way the constraints analysis is currently strictly limited to what there is inside a pattern. Another example could help to clarify this. An LHS like the following:

$p : Person( ) Car( owner == $p.name )

will not listen for modifications of the person's name, while this one will:

Person( $name : name ) Car( owner == $name )

To overcome this problem it is possible to annotate a pattern with @watch as follows:

$p : Person( ) @watch ( name ) Car( owner == $p.name )

Indeed, annotating a pattern with @watch allows you to modify the inferred set of properties to which that pattern reacts. Note that the properties named in the @watch annotation are added to the ones automatically inferred, but it is also possible to explicitly exclude one or more of them by prefixing their name with a !, and to make the pattern listen to all or none of the properties of the type used in the pattern with the wildcards * and !* respectively. So, for example, you can annotate a pattern in the LHS of a rule like:

// listens for changes on both firstName (inferred) and lastName

Person( firstName == $expectedFirstName ) @watch( lastName )

// listens for all the properties of the Person bean

Person( firstName == $expectedFirstName ) @watch( * )

// listens for changes on lastName and explicitly exclude firstName

Person( firstName == $expectedFirstName ) @watch( lastName, !firstName )

// listens for changes on all the properties except the age one

Person( firstName == $expectedFirstName ) @watch( *, !age )

Since it doesn't make sense to use this annotation on a pattern whose type is not annotated with @PropertyReactive, the rule compiler will raise a compilation error if you try to do so. Also, the duplicated usage of the same property in @watch (for example: @watch( firstName, ! firstName ) ) will end up in a compilation error. In a future release we will make the automatic detection of the properties to be listened to smarter by analyzing constraints even outside of the pattern.
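One way to picture how @watch adjusts the inferred property set is the following plain-Java sketch. It is not the rule compiler's algorithm: `WatchSketch` and `effective` are hypothetical names, the tokens are simply applied left to right (an assumption), and the compilation errors mentioned above are not modelled.

```java
import java.util.Arrays;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

// Illustrative resolution of @watch tokens against an inferred property set.
class WatchSketch {

    // Starts from the inferred properties and applies each @watch token in order:
    //   "*"     listen to every property of the type
    //   "!*"    listen to none
    //   "!name" stop listening to that property
    //   "name"  additionally listen to that property
    static Set<String> effective(List<String> allProperties, Set<String> inferred, String... watch) {
        Set<String> result = new LinkedHashSet<>(inferred);
        for (String raw : watch) {
            String token = raw.trim();
            if (token.equals("*")) {
                result.addAll(allProperties);
            } else if (token.equals("!*")) {
                result.clear();
            } else if (token.startsWith("!")) {
                result.remove(token.substring(1).trim());
            } else {
                result.add(token);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> all = Arrays.asList("firstName", "lastName", "age");
        Set<String> inferred = new LinkedHashSet<>(Arrays.asList("firstName"));

        System.out.println(effective(all, inferred, "lastName"));
        System.out.println(effective(all, inferred, "*"));
        System.out.println(effective(all, inferred, "lastName", "!firstName"));
        System.out.println(effective(all, inferred, "*", "!age"));
    }
}
```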

It is also possible to enable this feature by default on all the types of your model, or to completely disallow it, by using an option of the KnowledgeBuilderConfiguration. In particular, this new PropertySpecificOption can have one of the following 3 values:

- DISABLED => the feature is turned off and all the other related annotations are just ignored
- ALLOWED => this is the default behavior: types are not property reactive unless they are annotated with @PropertyReactive
- ALWAYS => all types are property reactive by default

So, for example, to have a KnowledgeBuilder generating property reactive types by default you could do:

KnowledgeBuilderConfiguration config = KnowledgeBuilderFactory.newKnowledgeBuilderConfiguration();

config.setOption(PropertySpecificOption.ALWAYS);

KnowledgeBuilder kbuilder = KnowledgeBuilderFactory.newKnowledgeBuilder(config);

In this last case it will be possible to disable the property reactivity feature on a specific type by annotating it with @ClassReactive.
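The interplay between the three option values and the two annotations can be summarised as a small decision function. This is one reading of the description above, written as a self-contained sketch: the `Option` enum only mirrors the three value names and is not the real PropertySpecificOption, and `isPropertyReactive` is a hypothetical helper.

```java
// Decision-table sketch; the enum and method are hypothetical, not Drools API.
class ReactivitySketch {

    // Mirrors the three values named in the text.
    enum Option { DISABLED, ALLOWED, ALWAYS }

    // Decides whether a type behaves as property reactive, given the builder
    // option and which annotations are present on the type.
    static boolean isPropertyReactive(Option option,
                                      boolean hasPropertyReactive,
                                      boolean hasClassReactive) {
        switch (option) {
            case DISABLED:
                return false;               // annotations are ignored
            case ALLOWED:
                return hasPropertyReactive; // opt in per type
            case ALWAYS:
                return !hasClassReactive;   // opt out per type
            default:
                throw new AssertionError(option);
        }
    }

    public static void main(String[] args) {
        System.out.println(isPropertyReactive(Option.ALLOWED, true, false));
        System.out.println(isPropertyReactive(Option.ALWAYS, false, true));
    }
}
```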

This API is experimental: future backwards incompatible changes are possible.

Using the new fluent simulation testing, you can test your rules in unit tests more easily:

@Test

public void rejectMinors() {

SimulationFluent simulationFluent = new DefaultSimulationFluent();

Driver john = new Driver("John", "Smith", new LocalDate().minusYears(10));

Car mini = new Car("MINI-01", CarType.SMALL, false, new BigDecimal("10000.00"));

PolicyRequest johnMiniPolicyRequest = new PolicyRequest(john, mini);

johnMiniPolicyRequest.addCoverageRequest(new CoverageRequest(CoverageType.COLLISION));

johnMiniPolicyRequest.addCoverageRequest(new CoverageRequest(CoverageType.COMPREHENSIVE));

simulationFluent

.newKnowledgeBuilder()

.add(ResourceFactory.newClassPathResource("org/drools/examples/carinsurance/rule/policyRequestApprovalRules.drl"),

ResourceType.DRL)

.end()

.newKnowledgeBase()

.addKnowledgePackages()

.end()

.newStatefulKnowledgeSession()

.insert(john).set("john")

.insert(mini).set("mini")

.insert(johnMiniPolicyRequest).set("johnMiniPolicyRequest")

.fireAllRules()

.test("johnMiniPolicyRequest.automaticallyRejected == true")

.test("johnMiniPolicyRequest.rejectedMessageList.size() == 1")

.end()

.runSimulation();

}

You can even test your CEP rules in unit tests without suffering from slow tests:

@Test

public void lyingAboutAge() {

SimulationFluent simulationFluent = new DefaultSimulationFluent();

Driver realJohn = new Driver("John", "Smith", new LocalDate().minusYears(10));

Car realMini = new Car("MINI-01", CarType.SMALL, false, new BigDecimal("10000.00"));

PolicyRequest realJohnMiniPolicyRequest = new PolicyRequest(realJohn, realMini);

realJohnMiniPolicyRequest.addCoverageRequest(new CoverageRequest(CoverageType.COLLISION));

realJohnMiniPolicyRequest.addCoverageRequest(new CoverageRequest(CoverageType.COMPREHENSIVE));

realJohnMiniPolicyRequest.setAutomaticallyRejected(true);

realJohnMiniPolicyRequest.addRejectedMessage("Too young.");

Driver fakeJohn = new Driver("John", "Smith", new LocalDate().minusYears(30));

Car fakeMini = new Car("MINI-01", CarType.SMALL, false, new BigDecimal("10000.00"));

PolicyRequest fakeJohnMiniPolicyRequest = new PolicyRequest(fakeJohn, fakeMini);

fakeJohnMiniPolicyRequest.addCoverageRequest(new CoverageRequest(CoverageType.COLLISION));

fakeJohnMiniPolicyRequest.addCoverageRequest(new CoverageRequest(CoverageType.COMPREHENSIVE));

fakeJohnMiniPolicyRequest.setAutomaticallyRejected(false);

simulationFluent