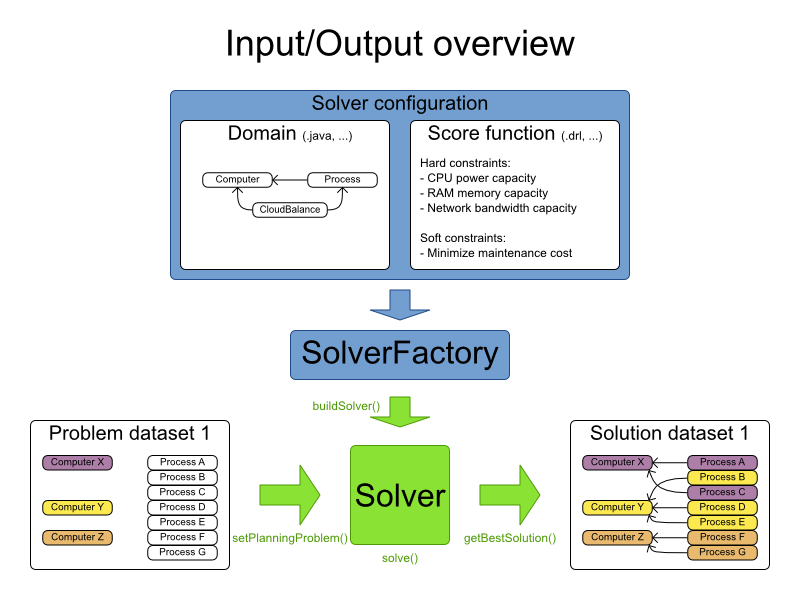

Solving a planning problem with OptaPlanner consists of 5 steps:

Model your planning problem as a class that implements the interface Solution, for example the class NQueens.

Configure a Solver, for example a first fit and tabu search solver for any NQueens instance.

Load a problem data set from your data layer, for example a 4 Queens instance. Set it as the planning problem on the Solver with Solver.setPlanningProblem(...).

Solve it with Solver.solve().

Get the best solution found by the Solver with Solver.getBestSolution().

You can build a Solver instance with the XmlSolverFactory. Configure

it with a solver configuration XML file:

XmlSolverFactory solverFactory = new XmlSolverFactory(

"/org/optaplanner/examples/nqueens/solver/nqueensSolverConfig.xml");

Solver solver = solverFactory.buildSolver();

A solver configuration file looks something like this:

<?xml version="1.0" encoding="UTF-8"?>

<solver>

<!-- Define the model -->

<solutionClass>org.optaplanner.examples.nqueens.domain.NQueens</solutionClass>

<planningEntityClass>org.optaplanner.examples.nqueens.domain.Queen</planningEntityClass>

<!-- Define the score function -->

<scoreDirectorFactory>

<scoreDefinitionType>SIMPLE</scoreDefinitionType>

<scoreDrl>/org/optaplanner/examples/nqueens/solver/nQueensScoreRules.drl</scoreDrl>

</scoreDirectorFactory>

<!-- Configure the optimization algorithm(s) -->

<termination>

...

</termination>

<constructionHeuristic>

...

</constructionHeuristic>

<localSearch>

...

</localSearch>

</solver>

Notice the 3 parts in it:

Define the model

Define the score function

Configure the optimization algorithm(s)

We'll explain these various parts of a configuration later in this manual.

OptaPlanner makes it relatively easy to switch optimization algorithm(s) just by

changing the configuration. There's even a Benchmark utility which allows you to

play out different configurations against each other and report the most appropriate configuration for your

problem. You could for example play out tabu search versus simulated annealing, on 4 queens and 64 queens.

As an alternative to the XML file, a solver configuration can also be configured with the

SolverConfig API:

SolverConfig solverConfig = new SolverConfig();

solverConfig.setSolutionClass(NQueens.class);

solverConfig.setPlanningEntityClassSet(Collections.<Class<?>>singleton(Queen.class));

ScoreDirectorFactoryConfig scoreDirectorFactoryConfig = new ScoreDirectorFactoryConfig();

scoreDirectorFactoryConfig.setScoreDefinitionType(ScoreDirectorFactoryConfig.ScoreDefinitionType.SIMPLE);

scoreDirectorFactoryConfig.setScoreDrlList(

Arrays.asList("/org/optaplanner/examples/nqueens/solver/nQueensScoreRules.drl"));

solverConfig.setScoreDirectorFactoryConfig(scoreDirectorFactoryConfig);

TerminationConfig terminationConfig = new TerminationConfig();

// ...

solverConfig.setTerminationConfig(terminationConfig);

List<SolverPhaseConfig> solverPhaseConfigList = new ArrayList<SolverPhaseConfig>();

ConstructionHeuristicSolverPhaseConfig constructionHeuristicSolverPhaseConfig

= new ConstructionHeuristicSolverPhaseConfig();

// ...

solverPhaseConfigList.add(constructionHeuristicSolverPhaseConfig);

LocalSearchSolverPhaseConfig localSearchSolverPhaseConfig = new LocalSearchSolverPhaseConfig();

// ...

solverPhaseConfigList.add(localSearchSolverPhaseConfig);

solverConfig.setSolverPhaseConfigList(solverPhaseConfigList);

Solver solver = solverConfig.buildSolver();

It is highly recommended to configure by XML file instead of this API. To dynamically configure a value at runtime, use the XML file as a template and extract the SolverConfig instance with getSolverConfig() to configure the dynamic value at runtime:

XmlSolverFactory solverFactory = new XmlSolverFactory(

"/org/optaplanner/examples/nqueens/solver/nqueensSolverConfig.xml");

SolverConfig solverConfig = solverFactory.getSolverConfig();

solverConfig.getTerminationConfig().setMaximumMinutesSpend(userInput);

Solver solver = solverConfig.buildSolver();

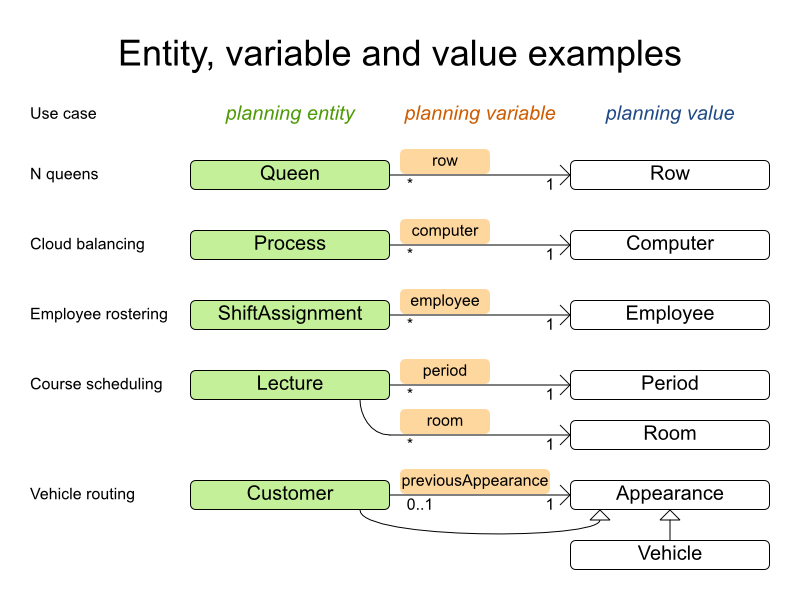

Look at a dataset of your planning problem. You'll recognize domain classes in there, each of which is one of these:

An unrelated class: not used by any of the score constraints. From a planning standpoint, this data is obsolete.

A problem fact class: used by the score constraints, but does NOT change during planning (as long as the problem stays the same). For example: Bed, Room, Shift, Employee, Topic, Period, ...

A planning entity class: used by the score constraints and changes during planning. For example: BedDesignation, ShiftAssignment, Exam, ...

Ask yourself: What class changes during planning? Which class has variables

that I want the Solver to change for me? That class is a planning entity. Most use

cases have only 1 planning entity class.

Note

In real-time planning, problem facts can change during planning, because the problem itself changes. However, that doesn't make them planning entities.

A good model can greatly improve the success of your planning implementation. For inspiration, take a look at how the examples modeled their domain:

When in doubt, it's usually the many side of a many-to-one relationship that is the planning entity. For

example in employee rostering, the planning entity class is ShiftAssignment, not

Employee. Vehicle routing is special, because it uses a chained planning variable.
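The many-to-one shape described above can be sketched in plain Java. This is only an illustration: the fields, the constructor shapes and the RosterSketch helper are assumptions, and the Planner annotations are mentioned only in comments so the snippet compiles without the library.

```java
import java.util.ArrayList;
import java.util.List;

// Problem fact: does not change during planning.
class Employee {
    final String name;
    Employee(String name) { this.name = name; }
}

// Problem fact: does not change during planning.
class Shift {
    final String label;
    Shift(String label) { this.label = label; }
}

// Planning entity (it would carry @PlanningEntity): the "many" side of the relationship.
class ShiftAssignment {
    final Shift shift;    // defining property, fixed during planning
    Employee employee;    // planning variable, null until assigned
    ShiftAssignment(Shift shift) { this.shift = shift; }
}

// Hypothetical helper, not part of any example in this manual.
class RosterSketch {
    // Creates one uninitialized ShiftAssignment per shift.
    static List<ShiftAssignment> createAssignments(List<Shift> shifts) {
        List<ShiftAssignment> result = new ArrayList<ShiftAssignment>();
        for (Shift shift : shifts) {
            result.add(new ShiftAssignment(shift));
        }
        return result;
    }
}
```

Many ShiftAssignment instances can point to the same Employee, and assigning or reassigning one never modifies the Employee itself.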

In OptaPlanner all problem facts and planning entities are plain old JavaBeans (POJOs). You can load them from a database (JDBC/JPA/JDO), an XML file, a data repository, a noSQL cloud, ...: OptaPlanner doesn't care.

A problem fact is any JavaBean (POJO) with getters that does not change during planning. Implementing the

interface Serializable is recommended (but not required). For example in n queens, the columns

and rows are problem facts:

public class Column implements Serializable {

private int index;

// ... getters

}

public class Row implements Serializable {

private int index;

// ... getters

}

A problem fact can reference other problem facts of course:

public class Course implements Serializable {

private String code;

private Teacher teacher; // Other problem fact

private int lectureSize;

private int minWorkingDaySize;

private List<Curriculum> curriculumList; // Other problem facts

private int studentSize;

// ... getters

}

A problem fact class does not require any Planner specific code. For example, you can reuse your domain classes, which might have JPA annotations.

Note

Generally, better designed domain classes lead to simpler and more efficient score constraints. Therefore,

when dealing with a messy legacy system, it can sometimes be worth it to convert the messy domain set into a

planner specific POJO set first. For example: if your domain model has 2 Teacher instances

for the same teacher that teaches at 2 different departments, it's hard to write a correct score constraint that

constrains a teacher's spare time.

Alternatively, you can sometimes also introduce a cached problem fact to enrich the domain model for planning only.

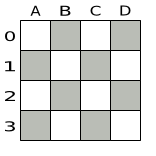

A planning entity is a JavaBean (POJO) that changes during solving, for example a Queen

that changes to another row. A planning problem has multiple planning entities, for example for a single n

queens problem, each Queen is a planning entity. But there's usually only 1 planning entity

class, for example the Queen class.

A planning entity class needs to be annotated with the @PlanningEntity

annotation.

Each planning entity class has 1 or more planning variables. It usually also has 1 or

more defining properties. For example in n queens, a Queen is defined by

its Column and has a planning variable Row. This means that a Queen's

column never changes during solving, while its row does change.

@PlanningEntity

public class Queen {

private Column column;

// Planning variables: changes during planning, between score calculations.

private Row row;

// ... getters and setters

}

A planning entity class can have multiple planning variables. For example, a Lecture is

defined by its Course and its index in that course (because 1 course has multiple lectures).

Each Lecture needs to be scheduled into a Period and a

Room so it has 2 planning variables (period and room). For example: the course Mathematics

has 8 lectures per week, of which the first lecture is Monday morning at 08:00 in room 212.

@PlanningEntity

public class Lecture {

private Course course;

private int lectureIndexInCourse;

// Planning variables: changes during planning, between score calculations.

private Period period;

private Room room;

// ...

}

The solver configuration also needs to be made aware of each planning entity class:

<solver>

...

<planningEntityClass>org.optaplanner.examples.nqueens.domain.Queen</planningEntityClass>

...

</solver>

Some use cases have multiple planning entity classes. For example: route freight and trains into railway network arcs, where each freight can use multiple trains over its journey and each train can carry multiple freights per arc. Having multiple planning entity classes directly raises the implementation complexity of your use case.

Note

Do not create unnecessary planning entity classes. This leads to difficult

Move implementations and slower score calculation.

For example, do not create a planning entity class to hold the total free time of a teacher, which needs

to be kept up to date as the Lecture planning entities change. Instead, calculate the free

time in the score constraints and put the result per teacher into a logically inserted score object.

If historic data needs to be considered too, then create a problem fact to hold the historic data up to, but not including, the planning window (so it doesn't change when a planning entity changes) and let the score constraints take it into account.

Some optimization algorithms work more efficiently if they have an estimation of which planning entities are more difficult to plan. For example: in bin packing bigger items are harder to fit, in course scheduling lectures with more students are more difficult to schedule and in n queens the middle queens are more difficult to fit on the board.

Therefore, you can set a difficultyComparatorClass to the

@PlanningEntity annotation:

@PlanningEntity(difficultyComparatorClass = CloudProcessDifficultyComparator.class)

public class CloudProcess {

// ...

}

public class CloudProcessDifficultyComparator implements Comparator<CloudProcess> {

public int compare(CloudProcess a, CloudProcess b) {

return new CompareToBuilder()

.append(a.getRequiredMultiplicand(), b.getRequiredMultiplicand())

.append(a.getId(), b.getId())

.toComparison();

}

}

Alternatively, you can also set a difficultyWeightFactoryClass to the

@PlanningEntity annotation, so you have access to the rest of the problem facts from the

Solution too:

@PlanningEntity(difficultyWeightFactoryClass = QueenDifficultyWeightFactory.class)

public class Queen {

// ...

}

See Sorted Selection for more information.

Important

Difficulty should be implemented ascending: easy entities are lower, difficult entities are higher. For example in bin packing: small item < medium item < big item.

Even though some algorithms start with the more difficult entities first, they just reverse the ordering.

None of the current planning variable state should be used to compare planning entity difficulty. During construction heuristics, those variables are likely to be null anyway. For example, a Queen's row variable should not be used.
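As an illustration of these rules, here is a minimal sketch of an ascending difficulty comparator for n queens, written with only the JDK instead of the CompareToBuilder used above. QueenSketch, QueenDifficultySketch and the distance formula are assumptions made for this sketch, not the classes from the examples.

```java
import java.util.Comparator;

// Hypothetical stand-in for the Queen planning entity.
class QueenSketch {
    final int columnIndex;   // defining property: safe to use for difficulty
    Integer rowIndex;        // planning variable: must NOT be used for difficulty
    QueenSketch(int columnIndex) { this.columnIndex = columnIndex; }
}

class QueenDifficultySketch {
    // Ascending difficulty: edge queens (easy) sort first, middle queens (hard) sort last.
    static Comparator<QueenSketch> byDifficulty(final int n) {
        return new Comparator<QueenSketch>() {
            public int compare(QueenSketch a, QueenSketch b) {
                // A larger distance from the middle means easier, so it sorts lower.
                int byDistance = Integer.compare(distanceFromMiddle(b, n), distanceFromMiddle(a, n));
                // Tie-breaker on the column index keeps the order deterministic.
                return byDistance != 0 ? byDistance : Integer.compare(a.columnIndex, b.columnIndex);
            }
        };
    }

    // Distance to the middle of the board, doubled to stay in integers.
    static int distanceFromMiddle(QueenSketch queen, int n) {
        return Math.abs(2 * queen.columnIndex - (n - 1));
    }
}
```

The comparator reads only the column, which never changes during solving, so it gives the same order whether or not any row has been assigned yet.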

A planning variable is a property (including getter and setter) on a planning entity. It points to a

planning value, which changes during planning. For example, a Queen's row

property is a planning variable. Note that even though a Queen's row

property changes to another Row during planning, no Row instance itself is

changed.

A planning variable getter needs to be annotated with the @PlanningVariable annotation.

Furthermore, it needs a @ValueRange annotation too.

@PlanningEntity

public class Queen {

private Row row;

// ...

@PlanningVariable

@ValueRange(type = ValueRangeType.FROM_SOLUTION_PROPERTY, solutionProperty = "rowList")

public Row getRow() {

return row;

}

public void setRow(Row row) {

this.row = row;

}

}

By default, an initialized planning variable cannot be null, so an initialized solution will never use null for any of its planning variables. In an over-constrained use case, this can be counterproductive. For example: in task assignment with too many tasks for the workforce, we would rather leave low priority tasks unassigned instead of assigning them to an overloaded worker.

To allow an initialized planning variable to be null, set nullable

to true:

@PlanningVariable(nullable = true)

@ValueRange(...)

public Worker getWorker() {

return worker;

}

Important

Planner will automatically add the value null to the value range. There is no need to

add null in a collection used by a ValueRange.

Note

Using a nullable planning variable implies that your score calculation is responsible for punishing (or even rewarding) variables with a null value.
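What "punishing a null value" can mean is sketched below in plain Java. The TaskSketch class, its priority field and the choice to subtract the priority are all assumptions for illustration; in a real configuration this logic would live in your score rules or score calculator.

```java
import java.util.List;

// Hypothetical planning entity with a nullable planning variable.
class TaskSketch {
    final int priority;   // higher means more important
    String worker;        // nullable planning variable; null means unassigned
    TaskSketch(int priority, String worker) {
        this.priority = priority;
        this.worker = worker;
    }
}

class NullPenaltySketch {
    // Soft score contribution: every unassigned task costs its priority.
    static int unassignedPenalty(List<TaskSketch> tasks) {
        int penalty = 0;
        for (TaskSketch task : tasks) {
            if (task.worker == null) {
                penalty -= task.priority;
            }
        }
        return penalty;
    }
}
```

Without such a penalty, leaving every variable null would look like a perfectly good solution to the solver.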

Repeated planning (especially real-time planning) does not mix well with a nullable planning variable: every

time the Solver starts or a problem fact change is made, the construction heuristics will try to initialize all

the null variables again, which can be a huge waste of time. One way to deal with this is to change when a

planning entity should be reinitialized with a reinitializeVariableEntityFilter:

@PlanningVariable(nullable = true, reinitializeVariableEntityFilter = ReinitializeTaskFilter.class)

@ValueRange(...)

public Worker getWorker() {

return worker;

}

A planning variable is considered initialized if its value is not null or if the

variable is nullable. So a nullable variable is always considered initialized, even when a

custom reinitializeVariableEntityFilter triggers a reinitialization.

A planning entity is initialized if all of its planning variables are initialized.

A Solution is initialized if all of its planning entities are initialized.
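The three definitions above can be written down as a small sketch. It is not Planner's implementation: VariableSketch and InitializationSketch are hypothetical, and an entity is modeled simply as the list of its planning variables.

```java
import java.util.List;

// Hypothetical snapshot of one planning variable.
class VariableSketch {
    final Object value;       // current planning value, possibly null
    final boolean nullable;   // mirrors @PlanningVariable(nullable = ...)
    VariableSketch(Object value, boolean nullable) {
        this.value = value;
        this.nullable = nullable;
    }
}

class InitializationSketch {
    // A variable is initialized if it has a value or if it is nullable.
    static boolean isVariableInitialized(VariableSketch variable) {
        return variable.value != null || variable.nullable;
    }

    // An entity is initialized if all of its planning variables are initialized.
    static boolean isEntityInitialized(List<VariableSketch> entityVariables) {
        for (VariableSketch variable : entityVariables) {
            if (!isVariableInitialized(variable)) {
                return false;
            }
        }
        return true;
    }

    // A solution is initialized if all of its planning entities are initialized.
    static boolean isSolutionInitialized(List<List<VariableSketch>> entities) {
        for (List<VariableSketch> entityVariables : entities) {
            if (!isEntityInitialized(entityVariables)) {
                return false;
            }
        }
        return true;
    }
}
```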

A planning value is a possible value for a planning variable. Usually, a planning value is a problem fact, but it can also be any object, for example a double. It can even be another planning entity or even an interface implemented by a planning entity and a problem fact.

A planning value range is the set of possible planning values for a planning variable. This set can be discrete (for example row 1, 2, 3 or 4) or continuous (for example any double between 0.0 and 1.0). Continuous planning variables are currently undersupported and require the use of custom moves.

There are several ways to define the value range of a planning variable with the

@ValueRange annotation.

All instances of the same planning entity class share the same set of possible planning values for that planning variable. This is the most common way to configure a value range.

The Solution implementation has a property that returns a Collection. Any value from that Collection is a possible planning value for this planning variable.

@PlanningVariable

@ValueRange(type = ValueRangeType.FROM_SOLUTION_PROPERTY, solutionProperty = "rowList")

public Row getRow() {

return row;

}

public class NQueens implements Solution<SimpleScore> {

// ...

public List<Row> getRowList() {

return rowList;

}

}

That Collection must not contain the value null, even for a nullable planning variable.

Each planning entity has its own set of possible planning values for a planning variable. For example, if a teacher can never teach in a room that does not belong to his department, lectures of that teacher can limit their room value range to the rooms of his department.

@PlanningVariable

@ValueRange(type = ValueRangeType.FROM_PLANNING_ENTITY_PROPERTY, planningEntityProperty = "possibleRoomList")

public Room getRoom() {

return room;

}

public List<Room> getPossibleRoomList() {

return getCourse().getTeacher().getPossibleRoomList();

}

Never use this to enforce a soft constraint (or even a hard constraint when the problem might not have a feasible solution). For example: Unless there is no other way, a teacher can not teach in a room that does not belong to his department. In this case, the teacher should not be limited in his room value range (because sometimes there is no other way).

Note

By limiting the value range specifically of 1 planning entity, you are effectively making a built-in hard constraint. This can be a very good thing, as the number of possible solutions is severely lowered. But this can also be a bad thing because it takes away the freedom of the optimization algorithms to temporarily break that constraint in order to escape a local optimum.

A planning entity should not use other planning entities to determine its value range. That would only try to make it solve the planning problem itself and interfere with the optimization algorithms.

This value range is not compatible with a chained variable.

Leaves the value range undefined. Most optimization algorithms do not support this value range.

@PlanningVariable

@ValueRange(type = ValueRangeType.UNDEFINED)

public Row getRow() {

return row;

}

Value ranges can be combined, for example:

@PlanningVariable(...)

@ValueRanges({

@ValueRange(type = ValueRangeType.FROM_SOLUTION_PROPERTY, solutionProperty = "companyCarList"),

@ValueRange(type = ValueRangeType.FROM_PLANNING_ENTITY_PROPERTY, planningEntityProperty = "personalCarList")})

public Car getCar() {

return car;

}

In some cases (such as in chaining), the planning value itself is another planning entity. In such cases, it's often required that a planning entity is only eligible as a planning value if it's initialized:

@PlanningVariable

@ValueRange(type = ValueRangeType.FROM_SOLUTION_PROPERTY, solutionProperty = "copList", excludeUninitializedPlanningEntity = true)

public Cop getPartner() {

return partner;

}

TODO: this is likely to change in the future (jira), as it should support specific planning variable initialization too.

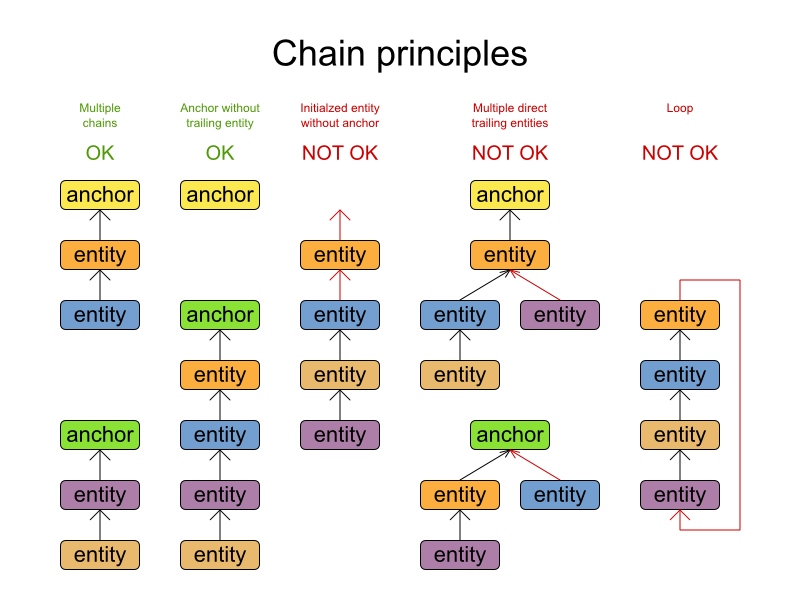

Some use cases, such as TSP and Vehicle Routing, require chaining. This means the planning entities point to each other and form a chain.

A planning variable that is chained either:

Directly points to a problem fact, which is called an anchor.

Points to another planning entity with the same planning variable, which recursively points to an anchor.

Here are some examples of valid and invalid chains:

Every initialized planning entity is part of an open-ended chain that begins from an anchor. A valid model means that:

A chain is never a loop. The tail is always open.

Every chain always has exactly 1 anchor. The anchor is a problem fact, never a planning entity.

A chain is never a tree, it is always a line. Every anchor or planning entity has at most 1 trailing planning entity.

Every initialized planning entity is part of a chain.

An anchor with no planning entities pointing to it, is also considered a chain.

Warning

A planning problem instance given to the Solver must be valid.
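To make the chain rules concrete, here is a minimal validity check in plain Java. It is a sketch under assumptions: Link, AnchorSketch and EntitySketch are hypothetical stand-ins for your own classes, and every entity that can appear in a chain is assumed to be in the given list.

```java
import java.util.Collections;
import java.util.IdentityHashMap;
import java.util.List;
import java.util.Set;

// Hypothetical common type of anchors and chained entities.
interface Link { }

// Anchor: a problem fact at the start of a chain.
class AnchorSketch implements Link { }

// Chained planning entity.
class EntitySketch implements Link {
    Link previous;   // chained planning variable; null while uninitialized
}

class ChainValidationSketch {
    static boolean isValid(List<EntitySketch> entities) {
        Set<Link> pointedAt = Collections.newSetFromMap(new IdentityHashMap<Link, Boolean>());
        for (EntitySketch entity : entities) {
            if (entity.previous == null) {
                continue; // uninitialized entities are not part of any chain
            }
            // A line, not a tree: nothing may have two trailing entities.
            if (!pointedAt.add(entity.previous)) {
                return false;
            }
            // Walk back towards the anchor; more steps than entities means a loop.
            Link current = entity.previous;
            int steps = 0;
            while (current instanceof EntitySketch) {
                current = ((EntitySketch) current).previous;
                if (++steps > entities.size()) {
                    return false;
                }
            }
            // The walk must end at an anchor, not at an uninitialized entity (null).
            if (!(current instanceof AnchorSketch)) {
                return false;
            }
        }
        return true;
    }
}
```

A check like this can be useful as an assertion on the data you load, before it is handed to the Solver.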

Note

If your constraints dictate a closed chain, model it as an open-ended chain (which is easier to persist in a database) and implement a score constraint for the last entity back to the anchor.
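The closed-chain idea can be sketched as a plain Java distance calculation: the chain is stored open-ended, and the leg from the last entity back to the anchor is added only when scoring. TourStop and ClosedTourSketch are hypothetical names, a single class is used for both the anchor and the visits for brevity, and all visits are assumed to be initialized and to form one chain.

```java
import java.util.Collections;
import java.util.IdentityHashMap;
import java.util.List;
import java.util.Set;

// Hypothetical location on the tour; used for the anchor and for the visits.
class TourStop {
    final double x;
    final double y;
    TourStop previous;   // chained variable; stays null on the anchor
    TourStop(double x, double y) {
        this.x = x;
        this.y = y;
    }
}

class ClosedTourSketch {
    static double distance(TourStop a, TourStop b) {
        return Math.hypot(a.x - b.x, a.y - b.y);
    }

    // Length of the open-ended chain plus the closing leg from its tail to the anchor.
    static double closedTourLength(TourStop anchor, List<TourStop> visits) {
        Set<TourStop> hasTrailingStop =
                Collections.newSetFromMap(new IdentityHashMap<TourStop, Boolean>());
        double total = 0.0;
        for (TourStop visit : visits) {
            total += distance(visit.previous, visit);   // legs stored in the chain
            hasTrailingStop.add(visit.previous);
        }
        for (TourStop visit : visits) {
            if (!hasTrailingStop.contains(visit)) {
                total += distance(visit, anchor);       // extra leg: tail back to the anchor
            }
        }
        return total;
    }
}
```

A score constraint would typically count this length as a cost, so a longer tour yields a lower score.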

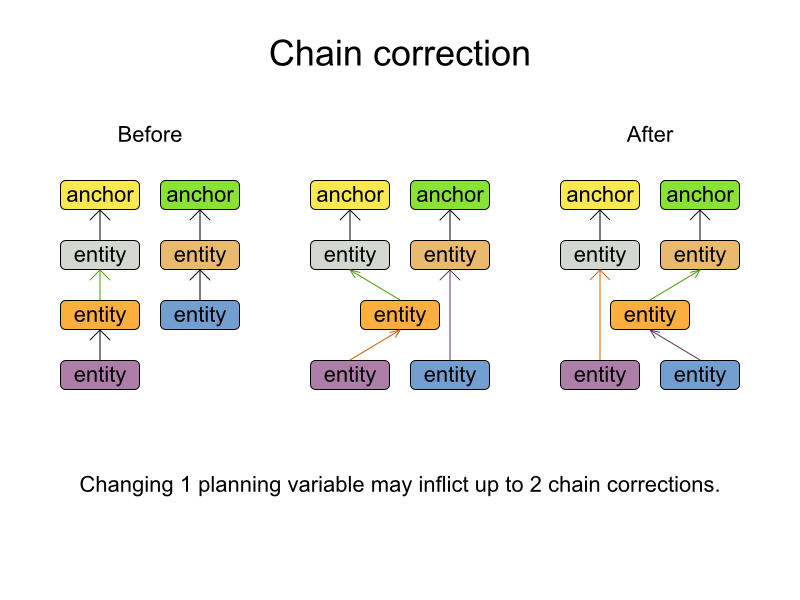

The optimization algorithms and built-in MoveFactory implementations perform chain correction to guarantee that the model stays valid:

Warning

A custom Move implementation must leave the model in a valid state.

For example, in TSP the anchor is a Domicile (in vehicle routing it is the

vehicle):

public class Domicile ... implements Appearance {

...

public City getCity() {...}

}

The anchor (which is a problem fact) and the planning entity implement a common interface, for example

TSP's Appearance:

public interface Appearance {

City getCity();

}

That interface is the return type of the planning variable. Furthermore, the planning variable is chained.

For example TSP's Visit (in vehicle routing it is the customer):

@PlanningEntity

public class Visit ... implements Appearance {

...

public City getCity() {...}

@PlanningVariable(chained = true)

@ValueRanges({

@ValueRange(type = ValueRangeType.FROM_SOLUTION_PROPERTY, solutionProperty = "domicileList"),

@ValueRange(type = ValueRangeType.FROM_SOLUTION_PROPERTY, solutionProperty = "visitList",

excludeUninitializedPlanningEntity = true)})

public Appearance getPreviousAppearance() {

return previousAppearance;

}

public void setPreviousAppearance(Appearance previousAppearance) {

this.previousAppearance = previousAppearance;

}

}

Notice how 2 value ranges need to be combined:

The value range which holds the anchors, for example domicileList.

The value range which holds the initialized planning entities, for example visitList. This always requires an enabled excludeUninitializedPlanningEntity, because an initialized entity should never point to an uninitialized entity: that would break the principle that every chain must have an anchor.
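Conceptually, the two combined value ranges produce the candidate set sketched below. This is not how Planner builds it internally; AppearanceSketch, DomicileSketch, VisitSketch and candidateValues are hypothetical names used only to show which values are eligible.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical common interface of the anchor and the planning entity.
interface AppearanceSketch { }

// Anchor (problem fact).
class DomicileSketch implements AppearanceSketch { }

// Chained planning entity.
class VisitSketch implements AppearanceSketch {
    AppearanceSketch previousAppearance;   // null while uninitialized
}

class ChainedValueRangeSketch {
    // All anchors, plus only those visits that are already part of a chain.
    static List<AppearanceSketch> candidateValues(List<DomicileSketch> domicileList,
            List<VisitSketch> visitList) {
        List<AppearanceSketch> values = new ArrayList<AppearanceSketch>(domicileList);
        for (VisitSketch visit : visitList) {
            if (visit.previousAppearance != null) {
                values.add(visit);
            }
        }
        return values;
    }
}
```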

Some optimization algorithms work more efficiently if they have an estimation of which planning values are stronger, which means they are more likely to satisfy a planning entity. For example: in bin packing bigger containers are more likely to fit an item and in course scheduling bigger rooms are less likely to break the student capacity constraint.

Therefore, you can set a strengthComparatorClass to the

@PlanningVariable annotation:

@PlanningVariable(strengthComparatorClass = CloudComputerStrengthComparator.class)

// ...

public CloudComputer getComputer() {

// ...

}

public class CloudComputerStrengthComparator implements Comparator<CloudComputer> {

public int compare(CloudComputer a, CloudComputer b) {

return new CompareToBuilder()

.append(a.getMultiplicand(), b.getMultiplicand())

.append(b.getCost(), a.getCost()) // Descending (but this is debatable)

.append(a.getId(), b.getId())

.toComparison();

}

}

Note

If you have multiple planning value classes in the same value range, the

strengthComparatorClass needs to implement a Comparator of a common

superclass (for example Comparator<Object>) and be able to handle comparing instances

of those different classes.

Alternatively, you can also set a strengthWeightFactoryClass to the

@PlanningVariable annotation, so you have access to the rest of the problem facts from the

solution too:

@PlanningVariable(strengthWeightFactoryClass = RowStrengthWeightFactory.class)

// ...

public Row getRow() {

// ...

}

See Sorted Selection for more information.

Important

Strength should be implemented ascending: weaker values are lower, stronger values are higher. For example in bin packing: small container < medium container < big container.

None of the current planning variable state in any of the planning entities should be used to

compare planning values. During construction heuristics, those variables are likely to be

null anyway. For example, none of the row variables of any

Queen may be used to determine the strength of a Row.

A dataset for a planning problem needs to be wrapped in a class for the Solver to

solve. You must implement this class. For example in n queens, this is the NQueens class

which contains a Column list, a Row list and a Queen

list.

A planning problem is actually an unsolved planning solution or - stated differently - an uninitialized Solution. Therefore, that wrapping class must implement the Solution interface. For example in n queens, that NQueens class implements Solution, yet every Queen in a fresh NQueens instance is not yet assigned to a Row (their row property is null). So it's not a feasible solution. It's not even a possible solution. It's an uninitialized solution.

You need to present the problem as a Solution instance to the

Solver. So you need to have a class that implements the Solution

interface:

public interface Solution<S extends Score> {

S getScore();

void setScore(S score);

Collection<? extends Object> getProblemFacts();

}

For example, an NQueens instance holds a list of all columns, all rows and all

Queen instances:

public class NQueens implements Solution<SimpleScore> {

private int n;

// Problem facts

private List<Column> columnList;

private List<Row> rowList;

// Planning entities

private List<Queen> queenList;

// ...

}

A Solution requires a score property. The score property is null if

the Solution is uninitialized or if the score has not yet been (re)calculated. The

score property is usually typed to the specific Score implementation you

use. For example, NQueens uses a SimpleScore:

public class NQueens implements Solution<SimpleScore> {

private SimpleScore score;

public SimpleScore getScore() {

return score;

}

public void setScore(SimpleScore score) {

this.score = score;

}

// ...

}

Most use cases use a HardSoftScore instead:

public class CourseSchedule implements Solution<HardSoftScore> {

private HardSoftScore score;

public HardSoftScore getScore() {

return score;

}

public void setScore(HardSoftScore score) {

this.score = score;

}

// ...

}

See the Score calculation section for more information on the Score

implementations.

The getProblemFacts() method is only used if Drools is used for score calculation. Other score directors do not use it.

All objects returned by the getProblemFacts() method will be asserted into the Drools

working memory, so the score rules can access them. For example, NQueens just returns all

Column and Row instances.

public Collection<? extends Object> getProblemFacts() {

List<Object> facts = new ArrayList<Object>();

facts.addAll(columnList);

facts.addAll(rowList);

// Do not add the planning entities (queenList) because that will be done automatically

return facts;

}

All planning entities are automatically inserted into the Drools working memory. Do

not add them in the method getProblemFacts().

Note

A common mistake is to use facts.add(...) instead of facts.addAll(...) for a Collection, which leads to score rules failing to match because the elements of that Collection aren't in the Drools working memory.
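The difference is easy to see with plain JDK collections. The sketch below uses strings as stand-ins for the column problem facts; ProblemFactsSketch and its two methods are hypothetical names.

```java
import java.util.ArrayList;
import java.util.List;

class ProblemFactsSketch {
    static List<Object> withAdd(List<String> columnList) {
        List<Object> facts = new ArrayList<Object>();
        facts.add(columnList);      // mistake: inserts the List itself as a single fact
        return facts;
    }

    static List<Object> withAddAll(List<String> columnList) {
        List<Object> facts = new ArrayList<Object>();
        facts.addAll(columnList);   // correct: inserts every element as its own fact
        return facts;
    }
}
```

With add(...), the result holds one element, the list, so no rule that matches on an individual column can fire. With addAll(...), it holds one element per column.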

The method getProblemFacts() is not called much: at most once per solver phase per solver thread.

A cached problem fact is a problem fact that doesn't exist in the real domain model, but is calculated

before the Solver really starts solving. The method getProblemFacts() has

the chance to enrich the domain model with such cached problem facts, which can lead to simpler and faster score

constraints.

For example in examination, a cached problem fact TopicConflict is created for every 2 Topics which share at least 1 Student.

public Collection<? extends Object> getProblemFacts() {

List<Object> facts = new ArrayList<Object>();

// ...

facts.addAll(calculateTopicConflictList());

// ...

return facts;

}

private List<TopicConflict> calculateTopicConflictList() {

List<TopicConflict> topicConflictList = new ArrayList<TopicConflict>();

for (Topic leftTopic : topicList) {

for (Topic rightTopic : topicList) {

if (leftTopic.getId() < rightTopic.getId()) {

int studentSize = 0;

for (Student student : leftTopic.getStudentList()) {

if (rightTopic.getStudentList().contains(student)) {

studentSize++;

}

}

if (studentSize > 0) {

topicConflictList.add(new TopicConflict(leftTopic, rightTopic, studentSize));

}

}

}

}

return topicConflictList;

}

Any score constraint that needs to check that no 2 exams whose topics share a student are scheduled close together (depending on the constraint: at the same time, in a row or on the same day) can simply use the TopicConflict instance as a problem fact, instead of having to combine every 2 Student instances.

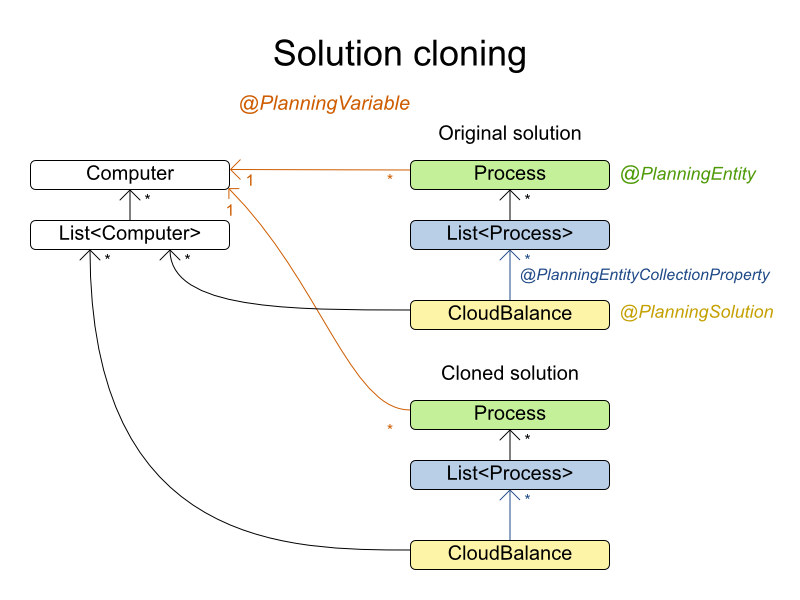

Most (if not all) optimization algorithms clone the solution each time they encounter a new best solution (so they can recall it later) or to work with multiple solutions in parallel.

Note

There are many ways to clone, such as a shallow clone, deep clone, ... This context focuses on a planning clone.

A planning clone of a Solution must fulfill these requirements:

The clone must represent the same planning problem. Usually it reuses the same instances of the problem facts and problem fact collections as the original.

The clone must use different, cloned instances of the entities and entity collections. Changes to an original Solution's entity's variables must not affect its clone.

Implementing a planning clone method is hard; therefore, you don't need to implement it.

The FieldAccessingSolutionCloner is used by default. It works for the majority of use cases.

Warning

When the FieldAccessingSolutionCloner clones your entity collection, it might not

recognize the implementation and replace it with ArrayList,

LinkedHashSet or TreeSet (whichever is more applicable). It recognizes

most of the common JDK Collection implementations.

If your Solution implements PlanningCloneable, Planner will automatically choose to clone it by calling

the method planningClone().

public interface PlanningCloneable<T> {

T planningClone();

}

For example: If NQueens implements PlanningCloneable, it would

only deep clone all Queen instances. When the original solution is changed during planning,

by changing a Queen, the clone stays the same.

public class NQueens implements Solution<...>, PlanningCloneable<NQueens> {

...

/**

* Clone will only deep copy the {@link #queenList}.

*/

public NQueens planningClone() {

NQueens clone = new NQueens();

clone.id = id;

clone.n = n;

clone.columnList = columnList;

clone.rowList = rowList;

List<Queen> clonedQueenList = new ArrayList<Queen>(queenList.size());

for (Queen queen : queenList) {

clonedQueenList.add(queen.planningClone());

}

clone.queenList = clonedQueenList;

clone.score = score;

return clone;

}

}

The planningClone() method should only deep clone the planning

entities. Notice that the problem facts, such as Column and

Row are normally not cloned: even their List

instances are not cloned. If you were to clone the problem facts too, then you'd have to

make sure that the new planning entity clones also refer to the new problem facts clones used by the solution.

For example, if you would clone all Row instances, then each Queen clone

and the NQueens clone itself should refer to those new Row

clones.
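The per-entity planningClone() called in the example above is not shown in this section. A minimal self-contained sketch of both levels could look as follows; RowFact, QueenEntity and BoardSolution are simplified, hypothetical stand-ins for Row, Queen and NQueens.

```java
import java.util.ArrayList;
import java.util.List;

// Problem fact: shared between the original and the clone.
class RowFact {
    final int index;
    RowFact(int index) { this.index = index; }
}

// Planning entity: cloned, but it keeps pointing to the same problem facts.
class QueenEntity {
    final int columnIndex;   // stands in for the Column problem fact
    RowFact row;             // planning variable
    QueenEntity(int columnIndex) { this.columnIndex = columnIndex; }

    QueenEntity planningClone() {
        QueenEntity clone = new QueenEntity(columnIndex);
        clone.row = row;     // same RowFact instance, not a copy
        return clone;
    }
}

class BoardSolution {
    List<RowFact> rowList = new ArrayList<RowFact>();
    List<QueenEntity> queenList = new ArrayList<QueenEntity>();

    BoardSolution planningClone() {
        BoardSolution clone = new BoardSolution();
        clone.rowList = rowList;   // the problem fact list is reused as-is
        clone.queenList = new ArrayList<QueenEntity>(queenList.size());
        for (QueenEntity queen : queenList) {
            clone.queenList.add(queen.planningClone());
        }
        return clone;
    }
}
```

After cloning, moving a queen in the original to another row leaves the clone's queen on its old row, while both solutions still share the same row instances.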

Warning

Cloning an entity with a chained variable is devious: a variable of an entity A might point to another entity B. If A is cloned, then its variable must point to the clone of B, not the original B.
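One way to handle this is a two-pass clone with an identity map from each original to its clone. The sketch below is an assumption-laden illustration, not Planner's cloner: ChainedEntity and ChainedCloneSketch are hypothetical, and every entity in a chain is assumed to be in the given list.

```java
import java.util.ArrayList;
import java.util.IdentityHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical chained planning entity.
class ChainedEntity {
    final String name;
    Object previous;   // an anchor (problem fact) or another ChainedEntity
    ChainedEntity(String name) { this.name = name; }
}

class ChainedCloneSketch {
    static List<ChainedEntity> cloneAll(List<ChainedEntity> originals) {
        // Pass 1: clone every entity and remember original -> clone.
        Map<ChainedEntity, ChainedEntity> cloneOf = new IdentityHashMap<ChainedEntity, ChainedEntity>();
        List<ChainedEntity> clones = new ArrayList<ChainedEntity>(originals.size());
        for (ChainedEntity original : originals) {
            ChainedEntity clone = new ChainedEntity(original.name);
            cloneOf.put(original, clone);
            clones.add(clone);
        }
        // Pass 2: rewire. Entities point to clones; anchors are shared as-is.
        for (ChainedEntity original : originals) {
            Object previous = original.previous;
            cloneOf.get(original).previous =
                    (previous instanceof ChainedEntity) ? cloneOf.get(previous) : previous;
        }
        return clones;
    }
}
```

The second pass is needed because the clone of B might not exist yet at the moment A is cloned.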

Build a Solution instance to represent your planning problem, so you can set it on the

Solver as the planning problem to solve. For example in n queens, an

NQueens instance is created with the required Column and

Row instances, with every Queen set to a different column

and its row left null.

private NQueens createNQueens(int n) {

NQueens nQueens = new NQueens();

nQueens.setId(0L);

nQueens.setN(n);

nQueens.setColumnList(createColumnList(nQueens));

nQueens.setRowList(createRowList(nQueens));

nQueens.setQueenList(createQueenList(nQueens));

return nQueens;

}

private List<Queen> createQueenList(NQueens nQueens) {

int n = nQueens.getN();

List<Queen> queenList = new ArrayList<Queen>(n);

long id = 0;

for (Column column : nQueens.getColumnList()) {

Queen queen = new Queen();

queen.setId(id);

id++;

queen.setColumn(column);

// Notice that we leave the PlanningVariable properties on null

queenList.add(queen);

}

return queenList;

}

Usually, most of this data comes from your data layer, and your Solution implementation

just aggregates that data and creates the uninitialized planning entity instances to plan:

private void createLectureList(CourseSchedule schedule) {

List<Course> courseList = schedule.getCourseList();

List<Lecture> lectureList = new ArrayList<Lecture>(courseList.size());

for (Course course : courseList) {

for (int i = 0; i < course.getLectureSize(); i++) {

Lecture lecture = new Lecture();

lecture.setCourse(course);

lecture.setLectureIndexInCourse(i);

// Notice that we leave the PlanningVariable properties (period and room) on null

lectureList.add(lecture);

}

}

schedule.setLectureList(lectureList);

}

A Solver implementation will solve your planning problem.

public interface Solver {

void setPlanningProblem(Solution planningProblem);

void solve();

Solution getBestSolution();

// ...

}

A Solver can only solve 1 planning problem instance at a time. A

Solver should only be accessed from a single thread, except for the methods that are

specifically javadocced as being thread-safe. It's built with a SolverFactory; do not implement

or build it yourself.

Solving a problem is quite easy once you have:

A Solver built from a solver configuration

A Solution that represents the planning problem instance

Just set the planning problem, solve it and extract the best solution:

solver.setPlanningProblem(planningProblem);

solver.solve();

Solution bestSolution = solver.getBestSolution();

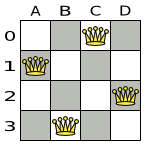

For example in n queens, the method getBestSolution() will return an

NQueens instance with every Queen assigned to a

Row.

The solve() method can take a long time (depending on the problem size and the solver

configuration). The Solver will remember (actually clone) the best solution it encounters

during its solving. Depending on a number of factors (including problem size, how much time the

Solver has, the solver configuration, ...), that best solution will be a feasible or even an

optimal solution.

Note

The Solution instance given to the method setPlanningProblem() will

be changed by the Solver, but do not mistake it for the best solution.

The Solution instance returned by the method getBestSolution() will

most likely be a clone of the instance given to the method setPlanningProblem(), which means

it's a different instance.
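The distinction in this note can be illustrated with a toy search loop. This is not how the Solver is implemented: the int[] solution, the score function and the random moves below are all hypothetical. It only shows the general pattern of changing a working solution in place while keeping a clone of the best state seen so far.

```java
import java.util.Random;

class BestSolutionSketch {

    // Toy score: higher is better and 0 is optimal.
    static int score(int[] solution) {
        int sum = 0;
        for (int value : solution) {
            sum -= Math.abs(value);
        }
        return sum;
    }

    // Changes workingSolution in place and returns a copy of the best state seen.
    static int[] solve(int[] workingSolution, int stepCount, long seed) {
        Random random = new Random(seed);
        int[] best = workingSolution.clone();
        for (int step = 0; step < stepCount; step++) {
            // Random move: increment or decrement one value.
            int index = random.nextInt(workingSolution.length);
            workingSolution[index] += random.nextBoolean() ? 1 : -1;
            // Remember a clone, because the working solution keeps changing.
            if (score(workingSolution) > score(best)) {
                best = workingSolution.clone();
            }
        }
        return best;
    }

    public static void main(String[] args) {
        int[] problem = {3, -2, 5};
        int[] best = solve(problem, 100, 0L);
        // The instance passed in was changed and is not the best solution itself.
        System.out.println(best != problem);
    }
}
```

After the loop, the array that was passed in holds whatever state the last move left behind, which can be worse than the returned copy.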

Note

The Solution instance given to the method setPlanningProblem() does

not need to be uninitialized. It can be partially or fully initialized, which is likely to be the case in repeated planning.

The environment mode allows you to detect common bugs in your implementation. It does not affect the logging level.

You can set the environment mode in the solver configuration XML file:

<solver>

<environmentMode>FAST_ASSERT</environmentMode>

...

</solver>

A solver has a single Random instance. Some solver configurations use the

Random instance a lot more than others. For example simulated annealing depends highly on

random numbers, while tabu search only depends on it to deal with score ties. The environment mode influences the

seed of that Random instance.
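Why the seed matters for reproducibility can be seen with java.util.Random alone. In this minimal sketch, the seed value 0 and the draw() helper are chosen for illustration only: two Random instances created with the same seed produce the same sequence of numbers.

```java
import java.util.Arrays;
import java.util.Random;

class RandomSeedSketch {

    // Draws count numbers between 0 (inclusive) and 100 (exclusive).
    static int[] draw(Random random, int count) {
        int[] values = new int[count];
        for (int i = 0; i < count; i++) {
            values[i] = random.nextInt(100);
        }
        return values;
    }

    public static void main(String[] args) {
        // Same seed: both runs draw exactly the same numbers.
        int[] firstRun = draw(new Random(0L), 10);
        int[] secondRun = draw(new Random(0L), 10);
        System.out.println(Arrays.equals(firstRun, secondRun)); // prints "true"
    }
}
```

An algorithm that takes all its random decisions from such a seeded instance makes the same decisions on every run, provided that the rest of the code is deterministic too.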

There are 4 environment modes:

The FULL_ASSERT mode is reproducible (see the reproducible mode) and also turns on all assertions (such as assert that the incremental score calculation is uncorrupted) to fail-fast on rule engine bugs.

The FULL_ASSERT mode is very slow (because it doesn't rely on delta based score calculation).

The FAST_ASSERT mode is reproducible (see the reproducible mode) and also turns on most assertions (such as assert that an undo Move's score is the same as before the Move) to fail-fast on a bug in your Move implementation, your score rule, ...

The FAST_ASSERT mode is slow.

It's recommended to write a test case which does a short run of your planning problem with the FAST_ASSERT mode on.

The reproducible mode is the default mode because it is recommended during development. In this mode, 2 runs in the same Planner version will execute the same code in the same order. Those 2 runs will have the same result, except if the note below applies. This allows you to consistently reproduce bugs. It also allows you to benchmark certain refactorings (such as a score constraint optimization) fairly across runs.

Note

Despite the reproducible mode, your application might still not be fully reproducible because of:

Use of HashSet (or another Collection which has an inconsistent order between JVM runs) for collections of planning entities or planning values (but not normal problem facts), especially in the Solution implementation. Replace it with LinkedHashSet.

Combining a time gradient dependent algorithm (most notably simulated annealing) together with time spend termination. A sufficiently large difference in allocated CPU time will influence the time gradient values. Replace simulated annealing with late acceptance. Or instead, replace time spend termination with step count termination.

The reproducible mode is not much slower than the production mode. If your production environment requires reproducibility, use it in production too.

In practice, this mode uses the default random seed, and it also disables certain concurrency optimizations (such as work stealing).

The production mode is the fastest and the most robust, but not reproducible. It is recommended for a production environment.

The random seed is different on every run, which makes it more robust against an unlucky random seed. An unlucky random seed gives a bad result on a certain data set with a certain solver configuration. Note that in most use cases the impact of the random seed is relatively low on the result (even with simulated annealing). An occasional bad result is far more likely to be caused by another issue (such as a score trap).
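The note on reproducibility above recommends LinkedHashSet over HashSet. A minimal sketch of the reason, using a hypothetical Entity class that does not override hashCode(): a LinkedHashSet always iterates in insertion order, whereas the iteration order of a HashSet depends on hash codes that can differ between JVM runs for such a class.

```java
import java.util.ArrayList;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

class IterationOrderSketch {

    // Hypothetical planning entity that does not override hashCode(),
    // so its hash code can differ between JVM runs.
    static class Entity {
        final String name;

        Entity(String name) {
            this.name = name;
        }
    }

    // Returns the names in the order in which the set iterates its elements.
    static List<String> iterationOrder(Set<Entity> entitySet) {
        List<String> names = new ArrayList<String>(entitySet.size());
        for (Entity entity : entitySet) {
            names.add(entity.name);
        }
        return names;
    }

    public static void main(String[] args) {
        Set<Entity> entitySet = new LinkedHashSet<Entity>();
        entitySet.add(new Entity("queen-2"));
        entitySet.add(new Entity("queen-0"));
        entitySet.add(new Entity("queen-1"));
        // Insertion order is preserved on every run.
        System.out.println(iterationOrder(entitySet)); // prints "[queen-2, queen-0, queen-1]"
    }
}
```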

The best way to illuminate the black box that is a Solver, is to play with the logging

level:

error: Log errors, except those that are thrown to the calling code as a RuntimeException.

Note

If an error happens, Planner normally fails fast: it throws a subclass of RuntimeException with a detailed message to the calling code. It does not log it as an error itself to avoid duplicate log messages. Except if the calling code explicitly catches and eats that RuntimeException, a Thread's default ExceptionHandler will log it as an error anyway. Meanwhile, the code is disrupted from doing further harm or obfuscating the error.

warn: Log suspicious circumstances.

info: Log every phase and the solver itself. See scope overview.

debug: Log every step of every phase. See scope overview.

trace: Log every move of every step of every phase. See scope overview.

Note

Turning on trace logging will slow down performance considerably: it's often 4 times slower. However, it's invaluable during development to discover a bottleneck.

For example, set it to debug logging, to see when the phases end and how fast steps are

taken:

INFO Solving started: time spend (0), score (null), new best score (null), random seed (0).

DEBUG Step index (0), time spend (1), score (0), initialized planning entity (col2@row0).

DEBUG Step index (1), time spend (3), score (0), initialized planning entity (col1@row2).

DEBUG Step index (2), time spend (4), score (0), initialized planning entity (col3@row3).

DEBUG Step index (3), time spend (5), score (-1), initialized planning entity (col0@row1).

INFO Phase (0) constructionHeuristic ended: step total (4), time spend (6), best score (-1).

DEBUG Step index (0), time spend (10), score (-1), best score (-1), accepted/selected move count (12/12) for picked step (col1@row2 => row3).

DEBUG Step index (1), time spend (12), score (0), new best score (0), accepted/selected move count (12/12) for picked step (col3@row3 => row2).

INFO Phase (1) localSearch ended: step total (2), time spend (13), best score (0).

INFO Solving ended: time spend (13), best score (0), average calculate count per second (4846).

All time spends are in milliseconds.

Everything is logged to SLF4J, which is a simple logging facade which delegates every log message to Logback, Apache Commons Logging, Log4j or java.util.logging. Add a dependency to the logging adaptor for your logging framework of choice.

If you're not using any logging framework yet, use Logback by adding this Maven dependency (there is no need to add an extra bridge dependency):

<dependency>

<groupId>ch.qos.logback</groupId>

<artifactId>logback-classic</artifactId>

<version>1.x</version>

</dependency>

Configure the logging level on the package org.optaplanner in your

logback.xml file:

<configuration>

<logger name="org.optaplanner" level="debug"/>

...

</configuration>

If instead, you're still using Log4J (and you don't want to switch to its faster successor, Logback), add the bridge dependency:

<dependency>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-log4j12</artifactId>

<version>1.x</version>

</dependency>

And configure the logging level on the package org.optaplanner in your

log4j.xml file:

<log4j:configuration xmlns:log4j="http://jakarta.apache.org/log4j/">

<category name="org.optaplanner">

<priority value="debug" />

</category>

...

</log4j:configuration>